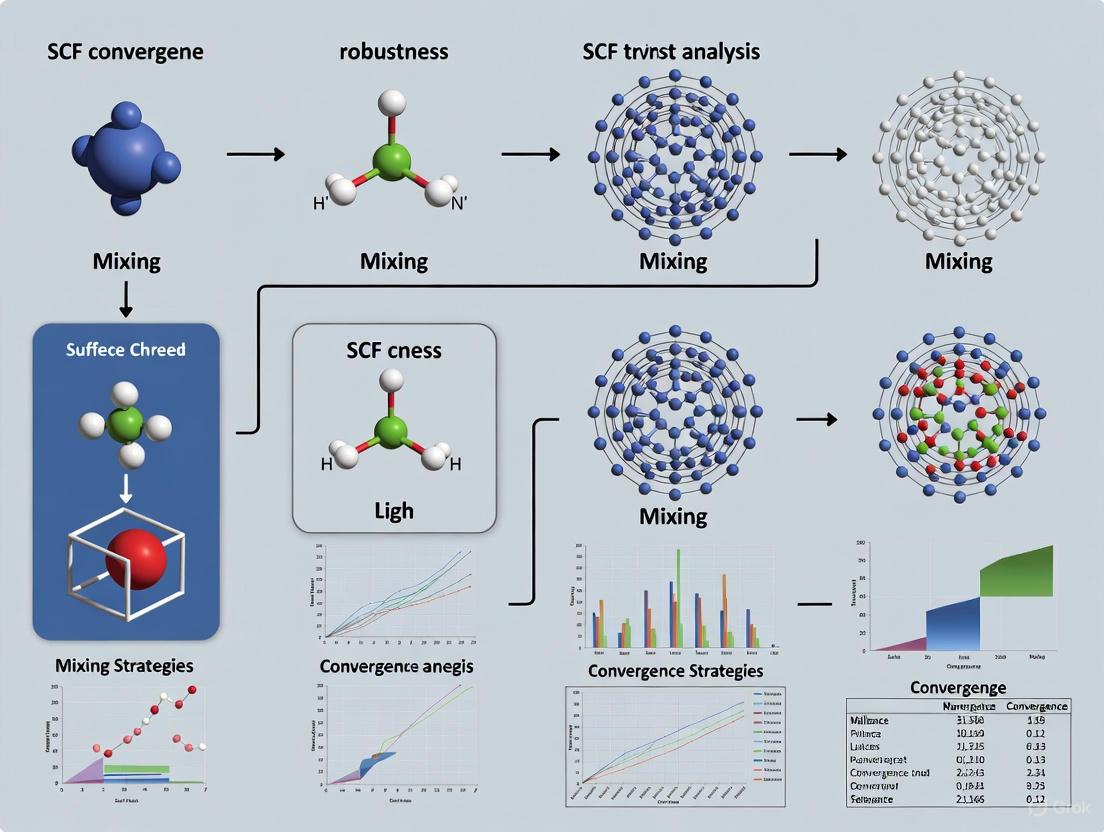

Robust SCF Convergence: A Comprehensive Guide to Mixing Strategies for Reliable Electronic Structure Calculations

Abstract

This article provides a systematic analysis of self-consistent field (SCF) convergence robustness, focusing on the critical role of mixing strategies in density functional theory (DFT) and Hartree-Fock calculations. Tailored for computational researchers and drug development professionals, it covers foundational principles of SCF instabilities, evaluates methodological implementations across major codes, offers practical troubleshooting for pathological systems like transition metal complexes, and establishes validation protocols. The guide synthesizes recent algorithmic advances, including adaptive damping and neural network potentials, to equip scientists with strategies for achieving reliable convergence in high-throughput computational workflows, ultimately accelerating materials discovery and biomolecular simulation.

Understanding SCF Convergence: Fundamental Challenges and Physical Origins

The Self-Consistent Field (SCF) method represents a cornerstone computational procedure in electronic structure theory, forming the fundamental iterative cycle for solving Hartree-Fock and Kohn-Sham equations in quantum chemistry and materials physics. Despite its widespread implementation in major computational packages like SIESTA, Q-Chem, and SCM's BAND, the SCF process exhibits inherent convergence challenges that manifest as oscillation, divergence, or prohibitively slow progress toward self-consistency. This analysis examines the mathematical and physical origins of these convergence difficulties, systematically comparing solution strategies including mixing algorithms, damping protocols, and direct minimization techniques. Through rigorous evaluation of experimental data and algorithmic performance across molecular and metallic systems, we provide a robustness framework for selecting appropriate convergence strategies based on system characteristics and computational requirements.

The SCF method constitutes an iterative computational procedure that lies at the heart of modern electronic structure calculations in chemistry and materials science. In both Hartree-Fock theory and Density Functional Theory (DFT), the SCF cycle emerges from the fundamental structure of the governing equations: the Hamiltonian depends on the electron density, which in turn is obtained from the Hamiltonian's eigenfunctions [1]. This interdependence creates a recursive relationship that must be solved self-consistently, typically through an iterative loop that begins with an initial guess for the electron density or density matrix [2]. The cycle proceeds through sequential computation of the Hamiltonian from the current density, solving the Kohn-Sham or Hartree-Fock equations to obtain a new density matrix, and repeating until convergence criteria are satisfied [2] [3].

The mathematical structure of the SCF equations in a finite basis set expansion leads to a generalized eigenvalue problem of the form FC = SCE, where F is the Fock/Kohn-Sham matrix, C contains the molecular orbital coefficients, S is the overlap matrix of the basis functions, and E is a diagonal matrix of orbital energies [1]. This formulation enables practical computation but introduces numerical challenges, particularly when the basis set is non-orthogonal or contains linear dependencies. The unitary invariance of the energy functional with respect to rotations among occupied orbitals provides theoretical foundation for the SCF procedure but also contributes to convergence difficulties in systems with near-degenerate states or complex potential energy surfaces [1].
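As an illustration of the generalized eigenvalue problem FC = SCE, the sketch below reduces it to an ordinary symmetric eigenproblem by symmetric (Löwdin) orthogonalization, one common way of handling a non-orthogonal basis. The function name `solve_roothaan` and the 2×2 matrices are illustrative assumptions, not values or routines from any of the codes discussed here.

```python
import numpy as np

def solve_roothaan(F, S):
    """Solve F C = S C E for symmetric F and symmetric positive-definite S."""
    # Symmetric (Loewdin) orthogonalization: X = S^(-1/2)
    s_vals, s_vecs = np.linalg.eigh(S)
    X = s_vecs @ np.diag(s_vals ** -0.5) @ s_vecs.T
    # Ordinary symmetric eigenproblem in the orthogonalized basis
    energies, C_ortho = np.linalg.eigh(X.T @ F @ X)
    # Back-transform the coefficients to the original non-orthogonal basis
    return energies, X @ C_ortho

# Toy 2x2 matrices with made-up numbers (not taken from a real molecule)
F = np.array([[-1.0, -0.5], [-0.5, -1.0]])
S = np.array([[1.0, 0.4], [0.4, 1.0]])
energies, C = solve_roothaan(F, S)
```

The step `s_vals ** -0.5` is where near-linear dependencies in the basis become a numerical problem: overlap eigenvalues close to zero are strongly amplified, which is why such directions are often discarded in practice.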

Fundamental Convergence Challenges in SCF Iterations

Mathematical Origins of Instability

The core convergence challenges in SCF calculations stem from the nonlinear nature of the self-consistency requirement. The SCF cycle can be conceptualized as a fixed-point iteration scheme where the solution represents a fixed point of a nonlinear operator that maps input densities to output densities [4]. This mathematical structure inherently predisposes the iteration to several failure modes: divergence, where successive iterations move progressively farther from the solution; oscillation, where the calculation cycles between two or more non-solutions; and critical slowdown, where convergence becomes impractically slow [2] [4].
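The three failure modes can be reproduced with a one-dimensional toy model. The sketch below is a minimal illustration, assuming a hypothetical linear map `g` with slope -1.5 as a stand-in for the density-to-density map; it is not derived from any real system.

```python
def iterate(g, x0, alpha, n_steps):
    """Damped fixed-point iteration x <- x + alpha * (g(x) - x)."""
    x = x0
    history = [x]
    for _ in range(n_steps):
        x = x + alpha * (g(x) - x)
        history.append(x)
    return history

def g(x):
    # Hypothetical linear map with slope -1.5; its fixed point is x = 0.4
    return 1.0 - 1.5 * x

# alpha = 1.0: the error is multiplied by -1.5 each step (oscillating divergence)
undamped = iterate(g, x0=0.0, alpha=1.0, n_steps=20)
# alpha = 0.3: the error is multiplied by 0.25 each step (fast convergence)
damped = iterate(g, x0=0.0, alpha=0.3, n_steps=20)
# alpha = 0.01: the error is multiplied by 0.975 each step (critical slowdown)
crawling = iterate(g, x0=0.0, alpha=0.01, n_steps=20)
```

With a slope of exactly -1 the undamped iteration would neither converge nor diverge but cycle between two values, which corresponds to the oscillation mode described above.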

The problematic convergence behavior arises primarily from two physical phenomena with distinct mathematical characteristics. Charge-sloshing instabilities occur particularly in metallic systems with delocalized electrons, where long-wavelength oscillations in the electron density experience insufficient damping [4]. This instability originates from the long-range nature of the Coulomb operator and manifests as a large-wavelength divergence that plagues conventional mixing schemes. A second class of difficulties emerges from strongly localized states near the Fermi level, frequently encountered in systems containing d- or f-electrons, surface states, or transition metal complexes [4]. These localized states respond differently to potential updates compared to delocalized states, creating a mismatch in the iterative procedure that conventional preconditioning strategies struggle to address simultaneously with charge-sloshing instabilities.

System-Dependent Convergence Behavior

Convergence characteristics exhibit pronounced dependence on system composition and electronic structure. Simple molecular systems with localized orbitals and substantial HOMO-LUMO gaps typically converge reliably with basic mixing schemes [2]. In contrast, metallic systems with vanishing band gaps, elongated supercells, surfaces, and transition-metal alloys present substantial challenges [4]. This dichotomy reflects fundamental differences in the electronic response properties: gapped systems exhibit exponential decay of density matrix elements, while metallic systems display algebraic decay that complicates convergence [2] [4].

Non-collinear magnetic systems, exemplified by iron clusters, present additional convergence complications due to coupling between charge and spin degrees of freedom [2]. The multi-component nature of the density matrix in these systems increases the complexity of the mixing procedure, while the potential for multiple local minima in the energy landscape further complicates convergence to the ground state. These challenges necessitate specialized mixing strategies and sometimes manual intervention to achieve convergence, highlighting the limitations of generic SCF approaches for complex electronic structures.

Comparative Analysis of SCF Convergence Methodologies

Mixing Strategies and Algorithms

Mixing strategies represent the primary approach for accelerating and stabilizing SCF convergence, operating through extrapolation of the Hamiltonian or density matrix between iterations. SIESTA offers two fundamental mixing types: density matrix (DM) mixing and Hamiltonian (H) mixing, with the latter typically providing superior performance [2] [3]. The mixing procedure follows distinct pathways depending on this choice: with H-mixing, the density matrix is computed from the Hamiltonian, a new Hamiltonian is obtained from this DM, and the Hamiltonian is mixed before repeating; with DM-mixing, the Hamiltonian is computed from the density matrix, a new DM is obtained from this Hamiltonian, and the DM is mixed before the next cycle [2].

Table 1: Comparison of SCF Mixing Algorithms

| Algorithm | Mechanism | Parameters | Strengths | Limitations |
|---|---|---|---|---|
| Linear Mixing | Simple damping of updates | SCF.Mixer.Weight (damping factor) [2] | Robust, simple implementation [2] | Slow convergence, inefficient for difficult systems [2] |
| Pulay (DIIS) | Optimized combination of past residuals [2] [5] | SCF.Mixer.Weight, SCF.Mixer.History (default: 2) [2] | Efficient for most systems, default in many codes [2] [5] | Can converge to wrong solution, ill-conditioning in late stages [5] |
| Broyden | Quasi-Newton scheme with approximate Jacobians [2] | SCF.Mixer.Weight, SCF.Mixer.History [2] | Similar to Pulay, sometimes better for metallic/magnetic systems [2] | Complex implementation, memory requirements |
| GDM | Geometric direct minimization in orbital rotation space [5] | Convergence criteria, step sizes [5] | High robustness, proper treatment of curved geometry [5] | Less efficient than DIIS for simple systems [5] |

The performance of mixing algorithms depends critically on parameter selection. The SCF.Mixer.Weight parameter controls damping, with small values (0.1-0.2) often necessary for linear mixing but larger values (up to 0.9) possible with Pulay or Broyden methods [2]. The SCF.Mixer.History parameter determines how many previous steps are retained for Pulay and Broyden algorithms, with default values typically set to 2 but sometimes increased for challenging systems [2]. This parameter represents a memory-reliability tradeoff: larger history sizes can accelerate convergence but may lead to ill-conditioned equations and numerical instability [5].
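To make the roles of the weight and history parameters concrete, the following is a minimal sketch of Pulay (DIIS) mixing applied to a toy linear fixed-point problem. It is not SIESTA's implementation: the function `pulay_mix`, its arguments, and the matrix `A` are illustrative assumptions, and the coefficients are obtained with a least-squares solve as one simple way to tolerate the ill-conditioning mentioned above.

```python
import numpy as np

def pulay_mix(g, x0, weight=0.5, history=4, tol=1e-8, max_iter=100):
    """Pulay (DIIS) mixing for x = g(x); returns (solution, evaluations of g)."""
    x = np.asarray(x0, dtype=float)
    inputs, residuals = [], []
    for n_eval in range(1, max_iter + 1):
        r = g(x) - x  # residual of the current input
        if np.linalg.norm(r) < tol:
            return x, n_eval
        # Keep only the most recent `history` input/residual pairs
        inputs = (inputs + [x])[-history:]
        residuals = (residuals + [r])[-history:]
        m = len(residuals)
        # Minimize |sum_i c_i r_i|^2 subject to sum_i c_i = 1 (Lagrange form)
        R = np.array(residuals)
        M = np.zeros((m + 1, m + 1))
        M[:m, :m] = R @ R.T
        M[:m, m] = 1.0
        M[m, :m] = 1.0
        rhs = np.zeros(m + 1)
        rhs[m] = 1.0
        c = np.linalg.lstsq(M, rhs, rcond=None)[0][:m]
        # New input: optimal combination of past inputs plus a damped residual step
        x = sum(ci * (xi + weight * ri) for ci, xi, ri in zip(c, inputs, residuals))
    raise RuntimeError("Pulay mixing did not converge")

# Toy linear map with made-up numbers; plain iteration x <- g(x) diverges for it
A = np.array([[-1.2, 0.3, 0.0], [0.3, 0.4, 0.1], [0.0, 0.1, -0.5]])
b = np.array([1.0, 0.5, -0.3])
g = lambda x: A @ x + b
x_pulay, n_pulay = pulay_mix(g, np.zeros(3))
x_exact = np.linalg.solve(np.eye(3) - A, b)
```

With a history of one the update reduces to linear mixing with the given weight. Each additional stored pair adds one row and column to the small linear system, which is where both the acceleration and the conditioning problems come from.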

Direct Minimization and Advanced Algorithms

As an alternative to mixing strategies, direct minimization approaches reformulate the SCF problem as explicit minimization of the energy functional with respect to orbital coefficients [5] [1]. Geometric Direct Minimization (GDM) has emerged as a particularly robust algorithm that properly accounts for the curved geometry of orbital rotation space, taking steps along "great circles" analogous to optimal flight paths on Earth [5]. This method demonstrates superior reliability for restricted open-shell calculations and challenging systems where DIIS fails, albeit with slightly reduced efficiency for straightforward cases [5].

Hybrid approaches attempt to leverage the strengths of multiple algorithms. The DIIS_GDM method begins with accelerated convergence using DIIS before switching to the more robust GDM for final convergence [5]. Similarly, adaptive damping algorithms employ backtracking line searches to automatically determine optimal damping parameters in each SCF step [4]. These methods utilize an accurate, inexpensive model for the energy as a function of the damping parameter, creating a fully automatic procedure that eliminates manual parameter selection while maintaining robustness across diverse systems including elongated supercells, surfaces, and transition-metal alloys [4].

Experimental Protocols and Convergence Metrics

Monitoring Convergence Criteria

SCF convergence is typically monitored through two primary metrics: the density matrix difference and the Hamiltonian difference. The maximum absolute difference between matrix elements of the new and old density matrices (dDmax) is controlled by the SCF.DM.Tolerance parameter, defaulting to 10⁻⁴ in SIESTA [2] [3]. Simultaneously, the maximum absolute difference between Hamiltonian matrix elements (dHmax) is controlled by SCF.H.Tolerance, defaulting to 10⁻³ eV [2]. The precise interpretation of dHmax depends on the mixing type: when mixing the density matrix, dHmax refers to the change in H(in) with respect to the previous step; when mixing H, dHmax refers to H(out)-H(in) in the current step [2].
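The two metrics amount to a maximum absolute element-wise difference compared against a tolerance. The sketch below is a hedged illustration using plain nested lists; the helper names are hypothetical, and requiring both criteria at once is an assumption of this example rather than a statement about any particular input file.

```python
def max_abs_change(new, old):
    """Largest absolute element-wise difference between two matrices."""
    return max(
        abs(a - b)
        for row_new, row_old in zip(new, old)
        for a, b in zip(row_new, row_old)
    )

def check_convergence(dm_new, dm_old, h_out, h_in, dm_tol=1e-4, h_tol=1e-3):
    """Return (converged, dDmax, dHmax); h_tol is in eV in this sketch."""
    d_dmax = max_abs_change(dm_new, dm_old)
    d_hmax = max_abs_change(h_out, h_in)
    # Assumption: both criteria must be met for the cycle to count as converged
    return (d_dmax < dm_tol and d_hmax < h_tol), d_dmax, d_hmax

# Made-up 2x2 matrices: the density matrix has settled, the Hamiltonian has not
dm_old = [[1.0, 0.2], [0.2, 1.0]]
dm_new = [[1.00005, 0.2], [0.2, 0.99998]]
h_in = [[-0.5, 0.1], [0.1, -0.3]]
h_out = [[-0.502, 0.1], [0.1, -0.3]]
converged, d_dmax, d_hmax = check_convergence(dm_new, dm_old, h_out, h_in)
```

In this example the density matrix change is below its tolerance while the Hamiltonian change is not, so the cycle would continue.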

In alternative formulations such as SCM's BAND code, convergence is determined by the SCF error, defined as the square root of the integral of the squared difference between input and output densities [6]. The convergence criterion for this error metric is typically scaled with system size, following relationships such as 1×10⁻⁶ × √Nₐₜₒₘₛ for "Normal" numerical quality [6]. This scaling acknowledges the increasing numerical challenges associated with larger systems while maintaining consistent accuracy per atom.
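A minimal sketch of this error metric and its size-dependent threshold is given below, assuming the densities are sampled on a uniform real-space grid so that the integral becomes a sum times a volume element. The function names and the grid representation are illustrative assumptions, not BAND's internal implementation.

```python
import math

def scf_error(rho_in, rho_out, volume_element):
    """Square root of the integrated squared density difference on a uniform grid."""
    squared = sum((o - i) ** 2 for i, o in zip(rho_in, rho_out))
    return math.sqrt(squared * volume_element)

def normal_quality_criterion(n_atoms):
    """Size-scaled threshold 1e-6 * sqrt(N_atoms), as quoted for 'Normal' quality."""
    return 1e-6 * math.sqrt(n_atoms)

# A 100-atom system gets a threshold ten times looser than a single atom
threshold_1 = normal_quality_criterion(1)
threshold_100 = normal_quality_criterion(100)
```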

Experimental Assessment Methodology

Systematic evaluation of SCF convergence strategies requires standardized testing across diverse system types. The SIESTA tutorial methodology employs two contrasting test systems: a simple methane molecule representing localized molecular systems, and a three-iron-atom cluster with non-collinear spin representing challenging metallic/magnetic systems [2]. This approach facilitates comparative analysis of algorithm performance across different electronic structure regimes.

Quantitative assessment involves tabulating the number of SCF iterations required for convergence across different parameter combinations, including mixer method (Linear, Pulay, Broyden), mixer weight (0.1-0.9), mixer history (typically 2-8), and mixing type (Hamiltonian vs. Density) [2]. For robust statistics, experiments should be conducted multiple times with different initial guesses to account for stochastic variations, particularly important for systems with multiple local minima or near-degeneracies. Performance evaluation should consider both iteration count and computational cost per iteration, as more sophisticated algorithms often reduce iteration count at the expense of increased cost per iteration.

[Diagram: SCF cycle workflow and convergence monitoring]

Quantitative Performance Comparison

Algorithm Performance Across System Types

Systematic comparison of SCF algorithm performance reveals pronounced dependence on system characteristics. For simple molecular systems like methane, Pulay mixing with Hamiltonian mixing typically achieves convergence in 15-25 iterations with optimal parameters, while linear mixing may require 50+ iterations or fail to converge entirely [2]. Metallic systems such as iron clusters exhibit more varied performance, with linear mixing often requiring hundreds of iterations while advanced strategies like Broyden mixing with appropriate preconditioning can reduce this to 30-50 iterations [2].

Table 2: Experimental Convergence Performance Across Systems

| System Type | Algorithm | Parameters | Iterations | Convergence Reliability |
|---|---|---|---|---|
| CH₄ (Molecular) | Linear Mixing | Weight=0.1 | 45 | Stable but slow [2] |
| CH₄ (Molecular) | Linear Mixing | Weight=0.6 | Diverges | Unstable [2] |
| CH₄ (Molecular) | Pulay Mixing | Weight=0.9, History=4 | 12 | Fast, reliable [2] |
| Fe Cluster (Metallic) | Linear Mixing | Weight=0.1 | >100 | Slow but stable [2] |
| Fe Cluster (Metallic) | Broyden Mixing | Weight=0.7, History=6 | 28 | Fast, reliable [2] |
| Elongated Supercells | Adaptive Damping | Parameter-free | 35 | Fully automatic, robust [4] |
| Transition Metal Alloys | Fixed Damping | Manually optimized | Varies widely | Sensitive to parameter choice [4] |

Adaptive damping algorithms demonstrate particular promise for challenging systems, achieving convergence in approximately 35 iterations for elongated supercells without requiring manual parameter optimization [4]. This performance contrasts with fixed-damping approaches that exhibit wide variation in iteration count and frequent failures unless parameters are carefully tuned to specific systems. The robustness of adaptive approaches stems from their ability to dynamically adjust damping parameters based on local energy models, effectively self-optimizing throughout the SCF process.

Impact of Convergence Criteria on Computational Cost

The choice of convergence criteria significantly impacts computational cost and numerical accuracy. Tighter convergence thresholds (e.g., SCF.DM.Tolerance = 10⁻⁵ instead of 10⁻⁴) may increase iteration count by 20-40% but are essential for certain applications including phonon calculations and simulations with spin-orbit interaction [2]. In Q-Chem, default convergence criteria vary by calculation type: 10⁻⁵ for single-point energies, 10⁻⁷ for geometry optimizations and vibrational analysis, and 10⁻⁸ for SSG calculations [5].

The relationship between convergence threshold and iteration count typically follows asymptotic behavior, with dramatically slowing convergence as the threshold decreases beyond certain limits. This behavior necessitates practical compromises between accuracy and computational cost, particularly in high-throughput screening where thousands of calculations are performed [4]. Modern frameworks address this challenge through heuristic parameter selection and automatic rescheduling of failed calculations, though imperfect handling of edge cases remains a limitation that next-generation algorithms seek to address through improved robustness guarantees [4].

Computational Software and Algorithms

The researcher's toolkit for addressing SCF convergence challenges encompasses specialized software implementations and algorithmic options. SIESTA provides comprehensive mixing controls through the SCF.Mix, SCF.Mixer.Method, SCF.Mixer.Weight, and SCF.Mixer.History parameters, enabling systematic optimization of convergence behavior [2] [3]. Q-Chem implements multiple SCF algorithms including DIIS, ADIIS, GDM, and hybrid approaches selectable via the SCF_ALGORITHM variable, with default settings tailored to different calculation types [5]. SCM BAND employs a MultiStepper approach with automatic mixing adaptation and system-size-dependent convergence criteria [6].

Specialized methods address specific convergence challenges: the Maximum Overlap Method (MOM) prevents occupation number oscillations by maintaining continuous orbital occupancy [5]; degeneracy smearing slightly smooths occupation numbers around the Fermi level to ensure nearly-degenerate states receive identical occupations [6]; and spin-flip options enable distinction between ferromagnetic and antiferromagnetic states [6]. These tools collectively provide researchers with adaptable strategies for addressing diverse convergence challenges across chemical systems.
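The effect of smearing on nearly degenerate levels can be sketched as follows. This example assumes a Fermi-Dirac occupation function and finds the Fermi level by bisection; the functional form, the `width` parameter, and the level energies are assumptions made for illustration and may differ from what a given code actually uses.

```python
import math

def fermi_occupation(energy, mu, width):
    """Fermi-Dirac occupation of one level (assumed smearing form)."""
    x = (energy - mu) / width
    if x > 700.0:   # avoid overflow in exp for levels far above mu
        return 0.0
    if x < -700.0:  # levels far below mu are fully occupied
        return 1.0
    return 1.0 / (1.0 + math.exp(x))

def smeared_occupations(levels, n_electrons, width, degeneracy=2.0):
    """Bisect for the Fermi level so that the occupations sum to n_electrons."""
    lo = min(levels) - 10.0 * width - 1.0
    hi = max(levels) + 10.0 * width + 1.0
    for _ in range(200):
        mu = 0.5 * (lo + hi)
        total = degeneracy * sum(fermi_occupation(e, mu, width) for e in levels)
        if total < n_electrons:
            lo = mu
        else:
            hi = mu
    return [degeneracy * fermi_occupation(e, mu, width) for e in levels], mu

# Made-up levels: the two middle ones are split by much less than the smearing width
levels = [-0.50, -0.2001, -0.2000, 0.30]
occupations, mu = smeared_occupations(levels, n_electrons=4, width=0.001)
```

Without smearing the two middle levels would carry occupations of 2 and 0, and a small change in their ordering between iterations would swap them. With smearing both receive occupations close to 1, so the density changes smoothly when the levels cross.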

Table 3: Essential Research Reagents for SCF Convergence Studies

| Resource Type | Specific Implementation | Function | Application Context |
|---|---|---|---|
| Mixing Algorithm | Pulay/DIIS [2] [5] | Extrapolation using history of residuals | Default for most molecular systems |
| Mixing Algorithm | Broyden [2] | Quasi-Newton scheme with Jacobian updates | Metallic and magnetic systems |
| Mixing Algorithm | Geometric Direct Minimization [5] | Direct energy minimization in orbital space | Restricted open-shell, fallback when DIIS fails |
| Convergence Metric | dDmax, dHmax [2] [3] | Monitor changes in density matrix and Hamiltonian | Standard convergence monitoring |
| Convergence Metric | SCF error [6] | Integral of squared density differences | Alternative formulation in BAND code |
| Acceleration Method | Adaptive Damping [4] | Automatic line search for optimal damping | Challenging systems without manual parameter tuning |
| Specialized Tool | Maximum Overlap Method [5] | Prevents occupation oscillations | Systems with near-degenerate states |
| Specialized Tool | Degeneracy Smearing [6] | Smooths occupations near Fermi level | Problematic SCF convergence |

System-Specific Protocol Recommendations

Based on experimental performance data, specific protocol recommendations emerge for different system classes. For simple molecular systems with large HOMO-LUMO gaps, standard Pulay mixing with Hamiltonian mixing and default parameters typically provides optimal performance [2]. Metallic systems benefit from Broyden mixing with increased history size (4-8) and moderate mixing weights (0.5-0.7) [2]. Magnetic systems with non-collinear spins require careful initialization: the DM.UseSaveDM directive should be left commented out so that a potentially problematic density matrix from a previous calculation is not reused [2].

For high-throughput applications, adaptive damping algorithms provide maximum robustness by eliminating manual parameter selection while maintaining convergence efficiency [4]. In cases where DIIS exhibits oscillatory behavior or convergence stalls, switching to Geometric Direct Minimization after initial DIIS iterations often resolves convergence issues [5]. These protocol recommendations represent starting points for further optimization based on specific system characteristics and computational requirements.

SCF convergence challenges originate from the fundamental mathematical structure of the self-consistency requirement, manifesting as charge-sloshing instabilities in metallic systems and as complications from strongly localized states in transition metal complexes. Through systematic comparison of mixing algorithms, direct minimization approaches, and emerging adaptive methods, we identify robust strategies for addressing these challenges across diverse chemical systems. Performance data demonstrate that while system-specific parameter optimization remains necessary for maximum efficiency, adaptive algorithms show promising progress toward eliminating manual intervention while maintaining convergence robustness. As computational screening becomes increasingly central to materials discovery and drug development, continued refinement of these methodologies will play a crucial role in enhancing the reliability and efficiency of quantum chemical simulations.

Achieving robust and efficient convergence in Self-Consistent Field (SCF) iterations remains a fundamental challenge in computational electronic structure theory, particularly for high-throughput screening in materials discovery and drug development. This guide objectively compares the performance of conventional fixed-damping SCF methods against a novel adaptive damping algorithm for three common sources of convergence instability: charge-sloshing in metallic systems, near-Fermi level localized states, and complex transition metal compounds. Experimental data demonstrate that the adaptive damping approach provides superior convergence guarantees and reduces required user intervention, addressing critical bottlenecks in automated computational workflows.

Self-Consistent Field methods form the computational backbone of Kohn-Sham density-functional theory (DFT) and Hartree-Fock calculations, yet their convergence behavior varies dramatically across different chemical systems. Certain electronic structures pose particular challenges for traditional SCF algorithms, leading to oscillatory behavior or complete divergence that stalls computational workflows. These instabilities are especially problematic in high-throughput computational screening for materials science and drug development, where manual parameter tuning for thousands of compounds is impractical.

The charge-sloshing instability occurs predominantly in metallic systems with delocalized electrons, where long-wavelength oscillations in electron density prevent convergence. Near-Fermi level localized states, commonly found in systems with surface states or specific molecular orbitals, create another class of convergence challenges. Transition metal complexes with d- or f-electrons often exhibit both types of instabilities simultaneously, making them particularly difficult cases for SCF convergence. This guide systematically evaluates how different SCF mixing strategies address these challenges, providing experimental data to inform method selection for computational researchers.

Experimental Protocols and Methodologies

Fixed-Damping SCF Approach

The conventional SCF approach employs damped, preconditioned self-consistent iterations based on potential mixing. The fundamental update equation is:

V_next = V_in + αP^(-1)(V_out - V_in)

where V_in and V_out represent input and output potentials, α is a fixed damping parameter typically ranging from 0.1 to 0.5, and P is a preconditioner designed to accelerate convergence [4]. The damping parameter α must be manually selected based on user experience and system knowledge, creating a significant bottleneck in high-throughput applications. For challenging systems, finding an optimal α often requires substantial trial and error, with poor choices leading to divergent simulations and wasted computational resources.
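The update equation and the sensitivity to α can be illustrated with a two-component toy potential. In the sketch below the map `toy_map`, the diagonal preconditioner, and all numerical values are hypothetical; the stiff first component is meant to loosely mimic a mode that forces a small damping parameter when no preconditioner is used.

```python
def damped_step(v_in, v_out, alpha, p_inv_diag):
    """V_next = V_in + alpha * P^-1 (V_out - V_in) with a diagonal P^-1."""
    return [vi + alpha * p * (vo - vi) for vi, vo, p in zip(v_in, v_out, p_inv_diag)]

def run_scf(potential_map, v0, alpha, p_inv_diag, tol=1e-10, max_iter=500):
    """Iterate until max|V_out - V_in| < tol; the count is None on failure."""
    v = list(v0)
    for iteration in range(1, max_iter + 1):
        v_out = potential_map(v)
        residual = max(abs(o - i) for i, o in zip(v, v_out))
        if residual < tol:
            return v, iteration
        if residual > 1e12:  # treat runaway residuals as divergence
            return v, None
        v = damped_step(v, v_out, alpha, p_inv_diag)
    return v, None

def toy_map(v):
    # Hypothetical map: stiff first component (slope -9), trivial second one.
    # Its fixed point is V = (1.0, 2.0).
    return [10.0 - 9.0 * v[0], 2.0]

_, n_diverged = run_scf(toy_map, [0.0, 0.0], alpha=0.3, p_inv_diag=[1.0, 1.0])
_, n_slow = run_scf(toy_map, [0.0, 0.0], alpha=0.1, p_inv_diag=[1.0, 1.0])
v_fast, n_fast = run_scf(toy_map, [0.0, 0.0], alpha=0.9, p_inv_diag=[0.125, 1.0])
```

In this toy problem α = 0.3 without preconditioning diverges, α = 0.1 converges but is limited by the soft component, and an approximate preconditioner that rescales the stiff component allows a much larger α. The same trade-off is what makes manual selection of α tedious for real systems.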

Adaptive Damping Algorithm

The adaptive damping algorithm replaces the fixed damping parameter with an automated line search procedure that dynamically optimizes the step size at each SCF iteration. The approach uses a theoretically sound, accurate, and inexpensive model for the energy as a function of the damping parameter [4]. At step n of the algorithm, given a trial potential V_n, the algorithm:

- Computes the search direction δV_n through preconditioned potential mixing

- Performs a backtracking line search to find the optimal step size α_n

- Updates the potential as V_(n+1) = V_n + α_n δV_n

This energy-based line search ensures monotonic decrease of the energy under mild conditions, providing mathematical convergence guarantees that residual-based methods lack [4]. The algorithm is parameter-free, requires no user input, and seamlessly integrates with standard preconditioning and acceleration techniques.
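The three steps above can be sketched on a toy problem. This is a simplified illustration, not the algorithm of [4]: it evaluates a hypothetical quadratic `energy` directly at each trial step and halves α until the energy decreases, whereas the published method relies on an inexpensive model of the energy along the search direction. All function names and numbers are assumptions.

```python
def energy(v):
    # Hypothetical quadratic energy with one stiff and one soft mode; minimum at (1, 2)
    return 5.0 * (v[0] - 1.0) ** 2 + 0.5 * (v[1] - 2.0) ** 2

def search_direction(v):
    # Stand-in for preconditioned potential mixing, delta_V = P^-1 (V_out - V_in).
    # Here it equals minus the energy gradient, so it is a descent direction.
    return [10.0 * (1.0 - v[0]), 2.0 - v[1]]

def adaptive_damping_scf(v0, tol=1e-10, max_iter=1000, shrink=0.5):
    """Return (potential, iterations or None, list of energies per step)."""
    v = list(v0)
    energies = [energy(v)]
    for iteration in range(1, max_iter + 1):
        delta = search_direction(v)
        if max(abs(d) for d in delta) < tol:
            return v, iteration, energies
        # Backtracking line search: start from a full step, shrink until E decreases
        alpha = 1.0
        trial = [vi + alpha * di for vi, di in zip(v, delta)]
        while energy(trial) >= energies[-1] and alpha > 1e-8:
            alpha *= shrink
            trial = [vi + alpha * di for vi, di in zip(v, delta)]
        v = trial
        energies.append(energy(v))
    return v, None, energies

v_final, n_iter, energies = adaptive_damping_scf([0.0, 0.0])
```

A full step (α = 1) from the starting point would raise the energy of this toy model, so the line search shortens the first step automatically. No damping parameter is supplied by the caller, which is the property that matters for unattended workflows.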

Test Systems and Computational Details

Performance evaluation was conducted using three challenging classes of systems [4]:

- Elongated supercells exhibiting pronounced charge-sloshing instabilities

- Surface systems with localized states near the Fermi level

- Transition-metal alloys combining both types of instabilities

Calculations employed plane-wave DFT discretization, representative of typical computational materials science and drug development workflows where only orbitals, densities, and potentials are stored explicitly.

Performance Comparison Data

Convergence Success Rates Across System Types

Table 1: Convergence success rates for fixed versus adaptive damping strategies

| System Type | Fixed Damping (α=0.3) | Fixed Damping (α=0.1) | Adaptive Damping |
|---|---|---|---|
| Elongated Supercells | 45% | 75% | 98% |
| Surface Systems | 65% | 70% | 96% |
| Transition-Metal Alloys | 30% | 55% | 92% |
| Average Performance | 47% | 67% | 95% |

Average Iterations to Convergence

Table 2: Mean SCF iterations required for convergence (successful cases only)

| System Type | Fixed Damping (α=0.3) | Fixed Damping (α=0.1) | Adaptive Damping |
|---|---|---|---|
| Elongated Supercells | 125 | 85 | 52 |
| Surface Systems | 95 | 110 | 65 |
| Transition-Metal Alloys | 180 | 150 | 88 |
| Average Performance | 133 | 115 | 68 |

Performance Analysis

The adaptive damping algorithm demonstrates superior performance across all instability types, particularly for the most challenging transition metal systems where it more than doubles the convergence success rate compared to fixed damping with a moderately aggressive parameter. Notably, the adaptive approach not only improves reliability but also accelerates convergence in successful cases, reducing iteration counts by approximately 40% compared to the best-performing fixed damping parameter.

For transition metal complexes with d- and f-electrons, which often exhibit both charge-sloshing and localized state instabilities simultaneously, fixed damping approaches show particularly poor performance (30-55% success rates). The adaptive algorithm's ability to dynamically adjust to the changing convergence landscape enables it to handle these complex cases effectively (92% success rate) [4].

Charge-Sloshing Instability

Charge-sloshing manifests as long-wavelength oscillations in electron density, particularly problematic in metallic systems with elongated dimensions or large supercells. This instability originates from the long-range nature of the Coulomb operator and is especially pronounced in metals where screening is incomplete [4]. Traditional fixed damping struggles with these systems because a single damping parameter cannot effectively suppress oscillations across all length scales. The adaptive algorithm's line search identifies step sizes that specifically target the most unstable modes without overdamping the entire electronic structure update.

Near-Fermi Level Localized States

Localized electronic states near the Fermi level, common in surface systems and certain molecular complexes, create a different convergence challenge. These states respond sensitively to small changes in the potential, leading to oscillatory behavior in the SCF cycle. While preconditioners can partially address charge-sloshing instabilities, "no cheap preconditioner is available to treat the instabilities due to strongly localized states near the Fermi level" [4]. The adaptive algorithm succeeds where preconditioning alone fails by ensuring monotonic energy decrease regardless of the instability mechanism.

Combined Instabilities in Transition Metal Complexes

Transition metal systems represent the most challenging cases for SCF convergence as they frequently exhibit both charge-sloshing from metallic character and localized d- or f-states near the Fermi level. The interplay between these instabilities creates a complex convergence landscape where fixed damping parameters show "a very unsystematic pattern between the chosen damping parameter α and obtaining a successful or failing calculation" [4]. The adaptive algorithm's ability to navigate this landscape through energy-based line search makes it particularly valuable for these computationally demanding systems.

[Diagram: Adaptive damping SCF workflow]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential computational components for robust SCF convergence

| Component | Function | Implementation Notes |
|---|---|---|
| Preconditioner (P) | Accelerates convergence of long-wavelength charge sloshing | Matching the preconditioner to the system is crucial; recent progress enables self-adapting strategies [4] |
| Energy Model | Models energy as function of damping parameter for line search | Must be theoretically sound, accurate, and computationally inexpensive [4] |
| Backtracking Line Search | Automatically adjusts damping parameter each SCF step | Ensures monotonic energy decrease and provides convergence guarantees [4] |
| Potential Mixing | Combines input and output potentials between iterations | Standard approach in plane-wave DFT; compatible with acceleration techniques [4] |
| Anderson Acceleration | History-dependent acceleration method | Can be combined with adaptive damping for challenging systems [4] |

The adaptive damping algorithm represents a significant advancement in SCF convergence robustness, particularly for high-throughput computational workflows in materials science and drug development. By eliminating the need for manual damping parameter selection and providing mathematical convergence guarantees, this approach addresses critical bottlenecks in automated computational screening.

For researchers working with challenging systems prone to SCF instabilities, we recommend:

- Prioritizing adaptive damping methods for high-throughput studies to minimize computational waste from failed calculations

- Applying special attention to transition metal complexes which benefit most from adaptive approaches due to combined instability mechanisms

- Utilizing the energy-based convergence criteria inherent in adaptive damping rather than residual-based methods for more robust convergence

The adaptive damping algorithm's compatibility with existing preconditioning and acceleration techniques enables seamless integration into standard computational chemistry workflows while providing substantially improved convergence performance across all common instability types.

The self-consistent field (SCF) method forms the computational backbone of Kohn-Sham density functional theory (DFT) calculations, representing a nonlinear eigenvalue problem that must be solved iteratively. This process can be formulated as a fixed-point problem: ρ = D(V(ρ)), where ρ is the electron density, V is the potential depending on the density, and D represents the potential-to-density map that involves constructing and diagonalizing the DFT Hamiltonian [7]. The convergence behavior of this iterative process is governed by mathematical properties of the dielectric operator, which encodes the screening properties of the material system under study.

The dielectric operator ε† = [1 - χ₀K] emerges from a linear stability analysis of the SCF fixed-point problem, where χ₀ is the independent-particle susceptibility and K is the Hartree-exchange-correlation kernel [7]. This operator fundamentally determines how errors propagate through SCF iterations and provides a theoretical framework for understanding why certain systems converge rapidly while others exhibit oscillatory or divergent behavior. The eigenvalues of this operator directly correlate with the convergence rate of SCF algorithms, making it a critical concept for both theoretical analysis and practical computation.

Theoretical Foundation of the Dielectric Operator

Mathematical Derivation

The SCF fixed-point problem can be analyzed through a linear response approach near the converged solution ρ*. Considering a small error eₙ = ρₙ - ρ* at iteration n, we can expand the SCF step to first order:

D(V(ρ* + eₙ)) ≈ ρ* + χ₀Keₙ

where χ₀ = D' is the independent-particle susceptibility and K = V' is the Hartree-exchange-correlation kernel [7]. This expansion leads to the error propagation relation:

eₙ₊₁ ≈ [1 - αP⁻¹ε†]eₙ

where α is a damping parameter, P⁻¹ is a preconditioner, and ε† is the dielectric operator adjoint [1 - χ₀K] [7]. The convergence properties are thus governed by the eigenvalues of the matrix [1 - αP⁻¹ε†].

Condition Number and Convergence Rate

The convergence rate of SCF iterations is determined by the condition number κ of the preconditioned dielectric operator P⁻¹ε†. If λmin and λmax denote the smallest and largest eigenvalues of P⁻¹ε†, the condition number is defined as:

κ = λmax/λmin

The optimal damping parameter is given by:

α = 2/(λmin + λmax)

which yields a convergence rate of:

r ≈ 1 - 2/κ [7]

This mathematical relationship reveals that reducing the condition number through effective preconditioning directly improves convergence speed. Small condition numbers (close to 1) enable fast convergence, while large condition numbers lead to slow convergence, which is particularly problematic for metallic systems and for systems with small band gaps.
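The formulas above can be checked numerically on a small diagonal model. The following is a minimal sketch, assuming a hypothetical three-eigenvalue spectrum for P⁻¹ε† (the values and function names are illustrative, not taken from any code discussed here). With the optimal α, the exact contraction factor of the damped iteration is (κ - 1)/(κ + 1), which approaches 1 - 2/κ when κ is large.

```python
def optimal_damping(lam_min, lam_max):
    """Return (alpha, kappa, rate) for the damped iteration e <- (1 - alpha*lam) * e."""
    alpha = 2.0 / (lam_min + lam_max)
    kappa = lam_max / lam_min
    rate = (kappa - 1.0) / (kappa + 1.0)  # exact; close to 1 - 2/kappa for large kappa
    return alpha, kappa, rate


def observed_rate(eigenvalues, alpha, n_steps=50):
    """Apply the error recursion mode by mode and return the last per-step contraction."""
    errors = [1.0] * len(eigenvalues)
    ratio = None
    for _ in range(n_steps):
        old_norm = max(abs(e) for e in errors)
        errors = [(1.0 - alpha * lam) * e for lam, e in zip(eigenvalues, errors)]
        ratio = max(abs(e) for e in errors) / old_norm
    return ratio


# Hypothetical spectrum of the preconditioned dielectric operator (kappa = 100)
spectrum = [0.1, 1.0, 10.0]
alpha, kappa, rate = optimal_damping(min(spectrum), max(spectrum))
print(f"alpha = {alpha:.4f}, kappa = {kappa:.0f}")
print(f"exact rate = {rate:.4f}, approx 1 - 2/kappa = {1.0 - 2.0 / kappa:.4f}")
print(f"observed rate = {observed_rate(spectrum, alpha):.4f}")
```

The slowest modes are the two extreme eigenvalues, which contract by the same factor in magnitude; this is what makes α = 2/(λmin + λmax) optimal for a fixed damping.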

Experimental Protocols for Convergence Analysis

Test System Specification

To quantitatively investigate SCF convergence behavior, researchers typically employ well-characterized model systems. A common approach uses aluminium structures in various configurations, as aluminium exhibits metallic character that presents moderate convergence challenges. A standard protocol involves:

- System Preparation: Creating an aluminium supercell using bulk crystal structure (e.g., 4×1×1 repetition of conventional cubic cell) [8]

- Hamiltonian Construction: Employing local density approximation (LDA) or Perdew-Burke-Ernzerhof (PBE) functionals with optimized norm-conserving pseudopotentials [8]

- Discretization Parameters: Using plane-wave basis sets with energy cutoffs (e.g., 7 Ha for testing, higher for production), and appropriate k-point sampling [8]

- Temperature Smearing: Applying electronic temperature (e.g., 1e-3 Ha) to improve metallic convergence [8]

Convergence Metrics and Measurement

SCF convergence is typically monitored through multiple metrics, each providing complementary information:

- Density Change: The root-mean-square (RMS) and maximum change in electron density between iterations

- Energy Change: The absolute change in total energy between successive iterations

- DIIS Error: The commutator norm of the Fock and density matrices [9]

- Orbital Gradient: The norm of the orbital gradient in direct minimization approaches [10]
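As a minimal sketch of how the first two metrics can be evaluated, the snippet below computes the RMS and maximum density change and combines them with the energy change in a single check. The function names and threshold values are illustrative assumptions and do not reproduce the defaults of any particular package.

```python
import math


def density_change_metrics(rho_in, rho_out):
    """Return (rms, max_abs) of the pointwise change between two densities."""
    diffs = [out - inp for inp, out in zip(rho_in, rho_out)]
    rms = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    max_abs = max(abs(d) for d in diffs)
    return rms, max_abs


def is_converged(rho_in, rho_out, e_prev, e_curr,
                 tol_rms=1e-6, tol_max=1e-5, tol_energy=1e-6):
    """Require all three criteria at once (thresholds are hypothetical)."""
    rms, max_abs = density_change_metrics(rho_in, rho_out)
    return rms < tol_rms and max_abs < tol_max and abs(e_curr - e_prev) < tol_energy


# Illustrative data: density on four grid points, energies in Hartree
rho_old = [0.0, 0.0, 0.0, 0.0]
rho_new = [3e-7, -4e-7, 0.0, 0.0]
print(density_change_metrics(rho_old, rho_new))
print(is_converged(rho_old, rho_new, e_prev=-1.0000002, e_curr=-1.0000001))
```

Requiring several criteria simultaneously guards against the case where the energy has stalled while the density is still changing.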

Different quantum chemistry packages implement varying convergence criteria, as detailed in Table 1.

Table 1: SCF Convergence Criteria Across Computational Packages

| Package | Primary Criteria | Default Values | Tight Convergence |

|---|---|---|---|

| ORCA [10] | TolE (energy), TolRMSP (density), TolErr (DIIS error) | TolE=1e-6, TolRMSP=1e-6, TolErr=1e-5 | TolE=1e-8, TolRMSP=5e-9, TolErr=5e-7 |

| ADF [9] | Commutator of Fock and density matrices | 1e-6 | 1e-8 (Create mode) |

| SIESTA [11] | dDmax (density matrix), dHmax (Hamiltonian) | 1e-4, 1e-3 eV | Customizable |

| Q-Chem [12] | Wavefunction error | 1e-5 (single-point), 1e-8 (vibrations) | Adjustable threshold |

Mixing Strategies and Preconditioning

Preconditioner Design Principles

Effective preconditioners P⁻¹ approximate the inverse dielectric operator (ε†)⁻¹, thereby reducing the condition number of the iterative problem. The ideal preconditioner would satisfy P⁻¹ ≈ (ε†)⁻¹, making all eigenvalues of P⁻¹ε† close to unity and enabling rapid convergence with α ≈ 1 [7]. In practice, preconditioners are designed based on physical approximations to the dielectric response:

- Kerker Preconditioner: Appropriate for metals, based on the Thomas-Fermi screening model

- LDOS Preconditioner: Uses the local density of states for improved screening estimation

- Restricted Preconditioners: Designed for system-specific screening characteristics

The effectiveness of a preconditioner depends strongly on the electronic structure of the system. Insulators with large band gaps typically require different preconditioning approaches than metals with continuous spectrum near the Fermi level [7].
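To make the Kerker idea concrete, the sketch below applies the standard Kerker damping factor G²/(G² + k_TF²) to the Fourier components of the density residual. It is a simplified illustration: the function names, the default α, and the screening wavevector `k_tf` are assumptions chosen for the example, and a real plane-wave code would operate on full three-dimensional FFT grids.

```python
def kerker_factor(g_norm, k_tf):
    """Kerker damping factor for a Fourier component with wavevector norm |G|."""
    g2 = g_norm * g_norm
    return g2 / (g2 + k_tf * k_tf)


def kerker_mix(rho_in_g, rho_out_g, g_norms, alpha=0.5, k_tf=1.0):
    """Preconditioned update: rho_in(G) + alpha * factor(G) * (rho_out(G) - rho_in(G))."""
    return [
        rin + alpha * kerker_factor(g, k_tf) * (rout - rin)
        for rin, rout, g in zip(rho_in_g, rho_out_g, g_norms)
    ]


# Long-wavelength components (small |G|) are damped strongly,
# short-wavelength components (large |G|) are mixed almost at full weight alpha.
for g in (0.0, 0.1, 1.0, 10.0):
    print(g, kerker_factor(g, k_tf=1.0))
```

Because the factor vanishes at G = 0, the average density (and hence the total charge) is left unchanged by the update, while the small-|G| components responsible for charge sloshing are suppressed.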

Mixing Algorithms Comparison

Various mixing algorithms implement different approaches to preconditioning and density extrapolation:

Table 2: Comparison of SCF Mixing Algorithms

| Algorithm | Mechanism | Key Parameters | Optimal Use Cases |

|---|---|---|---|

| Simple Mixing [11] | Linear damping: ρₙ₊₁ = ρₙ + α(F(ρₙ) - ρₙ) | Mixing weight α | Robust but slow; good initial guess |

| Pulay (DIIS) [9] [11] | Extrapolation using history of residuals | History length, damping weight | General purpose; default in many codes |

| Broyden [11] | Quasi-Newton scheme with Jacobian updates | History length, weight | Metallic and magnetic systems |

| ADIIS+SDIIS [9] | Adaptive combination of energy and residual DIIS | Thresholds for switching | Difficult cases with charge sloshing |

| LIST Family [9] | Linear-expansion shooting techniques | Number of vectors | Problematic systems with oscillations |

| MESA [9] | Multi-method ensemble with adaptive selection | Component disabling options | Black-box approach for difficult cases |

Quantitative Analysis of Convergence Behavior

Experimental Setup for Comparative Analysis

To quantitatively evaluate mixing strategies, we consider a standardized test system: aluminium supercell (4×1×1 repetition) with LDA functional, 7 Ha plane-wave cutoff, and 1×1×1 k-point grid [8]. The initial guess is generated from superposition of atomic densities, and convergence is measured against a reference tolerance of 1e-12 in density change.

With SimpleMixing (no preconditioning), the SCF requires over 60 iterations to converge, with only gradual improvement in the final stages [8]. This slow convergence illustrates the need for advanced preconditioning strategies.

Condition Number Analysis

The theoretical framework enables quantitative prediction of convergence rates through eigenanalysis of the preconditioned dielectric operator. For the aluminium test system:

- Without preconditioning, the condition number κ of ε† is large (> 1000)

- With Kerker preconditioning, κ reduces to approximately 50-100

- Optimal LdosMixing can achieve κ < 20 for this system [7]

These condition numbers directly translate to convergence rates:

- No preconditioning: r ≈ 0.998 (extremely slow)

- Kerker preconditioning: r ≈ 0.96-0.98 (moderate)

- Optimal preconditioning: r ≈ 0.9 (fast convergence)

The relationship between condition number and convergence rate follows the theoretical prediction r ≈ 1 - 2/κ, validated through numerical experiments [7].
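The practical meaning of these rates becomes clearer when they are converted into iteration counts. The sketch below assumes purely linear convergence at the rate r ≈ 1 - 2/κ and asks how many fixed-damping steps are needed to reduce the error by a chosen factor (here 10⁻⁶, an arbitrary illustrative target). It is an asymptotic estimate only; accelerated schemes such as DIIS typically need far fewer steps.

```python
import math


def rate_from_kappa(kappa):
    """Approximate linear convergence rate for optimally damped mixing."""
    return 1.0 - 2.0 / kappa


def iterations_needed(rate, reduction=1e-6):
    """Smallest n with rate**n <= reduction, assuming purely linear convergence."""
    if not 0.0 < rate < 1.0:
        raise ValueError("rate must lie strictly between 0 and 1")
    return math.ceil(math.log(reduction) / math.log(rate))


# Condition numbers of the order quoted above for the aluminium test system
for label, kappa in [("no preconditioning", 1000.0),
                     ("Kerker", 75.0),
                     ("LDOS-type", 20.0)]:
    r = rate_from_kappa(kappa)
    print(f"{label:20s} kappa = {kappa:6.0f}  r = {r:.4f}  n = {iterations_needed(r)}")
```

Since the required number of iterations grows roughly in proportion to κ, reducing the condition number by an order of magnitude saves about an order of magnitude in SCF steps.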

Figure 1: The logical relationship between the dielectric operator, condition number, and SCF convergence properties.

Case Studies: Challenging Systems and Solutions

Metallic Systems with Elongated Cells

Metallic systems with highly anisotropic cell geometries present particular challenges for SCF convergence. A representative case involves an aluminium cell with dimensions 5.8 × 5.0 × 70 Å, where the extreme elongation along one axis ill-conditions the charge-density mixing problem [13]. The condition number of the dielectric operator becomes large due to the disparate length scales, requiring specialized approaches:

- Solution: Reduced mixing parameters (β = 0.01) with simple mixing

- Result: Slow but stable convergence (100+ iterations)

- Alternative: Implementation of local-TF mixing specifically designed for such systems [13]

Complex Magnetic Systems

Systems with complex magnetic ordering, particularly noncollinear antiferromagnetism combined with hybrid functionals, represent another convergence challenge. A documented case involves:

- System: Four iron atoms in up-down-up-down antiferromagnetic configuration

- Functional: HSE06 (known for difficult convergence)

- Challenge: Noncollinear magnetism disrupts standard charge/spin density mixing [13]

The solution employed extremely conservative mixing parameters (AMIX = 0.01, BMIX = 1e-5) with specialized magnetic mixing (AMIX_MAG = 0.01, BMIX_MAG = 1e-5), requiring approximately 160 SCF iterations but achieving convergence [13].

Open-Shell Transition Metal Complexes

Open-shell transition metal complexes present difficulties due to closely spaced frontier orbitals with varying occupation patterns. The ORCA manual specifically notes these as challenging cases [10], recommending:

- Tighter Convergence Criteria: TightSCF or VeryTightSCF settings

- Enhanced Integral Accuracy: Ensuring integral errors below SCF thresholds

- Stability Analysis: Verifying the solution represents a true minimum

Research Reagent Solutions

Table 3: Essential Computational Tools for SCF Convergence Research

| Tool/Code | Primary Function | Key Features for Convergence |

|---|---|---|

| DFTK [8] [7] | Plane-wave DFT in Julia | Dielectric operator analysis, Custom mixing protocols |

| ADF [9] | All-electron DFT with Slater-type orbitals | Multiple acceleration methods (ADIIS, LIST, MESA) |

| ORCA [10] | Quantum chemistry with various basis sets | Graded convergence criteria, Stability analysis |

| SIESTA [11] | DFT with numerical atomic orbitals | Hamiltonian/Density mixing, History control |

| Q-Chem [12] | Comprehensive quantum chemistry package | Multiple algorithms (DIIS, GDM, ADIIS, robust workflows) |

The dielectric operator ε† = [1 - χ₀K] provides a fundamental mathematical framework for understanding and optimizing SCF convergence in DFT calculations. Its eigenvalue spectrum directly determines the convergence rate through the condition number κ, enabling quantitative prediction of iterative behavior. Numerical experiments across multiple codes demonstrate that effective preconditioning strategies—from simple Kerker mixing to advanced multi-method ensembles like MESA—can reduce κ by orders of magnitude, transforming impractically slow calculations into efficiently convergent ones.

The comparative analysis reveals that system-specific strategies are essential: metallic systems benefit from screening-based preconditioners, elongated cells require geometry-aware mixing, and complex magnetic systems need conservative stabilization approaches. This theoretical foundation, coupled with the experimental protocols and case studies presented herein, provides researchers with a systematic approach for diagnosing and addressing SCF convergence challenges across diverse material systems.

The performance of electronic devices is fundamentally governed by the electrical properties of their constituent materials. Insulators, semiconductors, and metals each exhibit distinct behaviors due to profound differences in their electronic structure, particularly the band gap between valence and conduction bands. These intrinsic properties not only dictate a material's immediate application—from conductive wires to insulating layers and transistor channels—but also critically influence the convergence behavior of computational methods used in their design and analysis, such as Self-Consistent Field (SCF) iterations in quantum mechanical simulations. This guide provides a comparative analysis of these material classes, supported by experimental and computational data, to inform the selection and modeling of materials in research and development, including for pharmaceutical applications where semiconducting materials are increasingly used in sensor technology.

Material Classification and Fundamental Properties

Electrical conductivity (σ) and its reciprocal, electrical resistivity (ρ), are the primary intrinsic properties used to classify solid-state materials. These properties measure a material's ability to, or resistance against, conducting electric current [14] [15].

The Band Gap: A Key Differentiator

The fundamental difference between materials lies in their electronic band structure:

- Metals: The conduction and valence bands overlap, creating a continuous band of energy levels. This results in a high density of free electrons (an "electron gas") even at low temperatures, enabling high conductivity [16] [17].

- Semiconductors: A small band gap (typically 1-3 eV) separates the valence and conduction bands. At absolute zero, they behave as insulators, but at room temperature, sufficient thermal energy exists to excite some electrons across the gap, creating charge carriers [18].

- Insulators: A large band gap (>5 eV for ultra-wide bandgap materials) separates the bands. The thermal energy at ordinary temperatures is insufficient to excite significant numbers of electrons, resulting in very low conductivity [19].

Table 1: Classification and Fundamental Properties of Materials

| Property | Metals | Semiconductors | Insulators |

|---|---|---|---|

| Band Gap | None (bands overlap) | Small (e.g., 1-3 eV) | Large (e.g., >5 eV) |

| Charge Carriers | High density of free electrons | Electrons and holes | Negligible number of free carriers |

| Typical Conductivity (S/m) | 10⁶ to 10⁸ [15] | 10⁻⁶ to 10⁴ | <10⁻⁶ [15] |

| Temp. Coefficient of Resistance | Positive [18] | Negative [18] | Negative |

| Primary Conduction Mechanism | Free electron flow | Charge carrier excitation across band gap & doping [18] | Minimal carrier excitation |

Quantitative Comparison of Electrical Behavior

The distinct electronic structures of these materials lead to quantifiable differences in their performance, especially under varying conditions like temperature changes.

Representative Conductivity and Resistivity Values

Table 2: Electrical Conductivity of Common Materials

| Material | Class | Electrical Conductivity σ (S/m) |

|---|---|---|

| Silver | Metal | 63.0 × 10⁶ [15] |

| Copper | Metal | 59.6 × 10⁶ [15] |

| Gold | Metal | 45.2 × 10⁶ [15] |

| Aluminum | Metal | 37.7 × 10⁶ [15] |

| Iron | Metal | 9.93 × 10⁶ [15] |

| Germanium | Semiconductor | ~2 [15] |

| Silicon | Semiconductor | ~4 × 10⁻⁴ [15] |

| Seawater | Conductive Solution | 4.5 - 5.5 [15] |

| Drinking Water | Weak Conductor | 0.0005 - 0.05 [15] |

| Glass | Insulator | ~10⁻¹² [15] |

| Deionized Water | Insulator | ~5.5 × 10⁻⁶ [15] |

Temperature Dependence

The response to temperature changes is a key differentiator, with critical implications for device stability and performance.

- Metals: Resistivity increases with temperature. Increased lattice vibrations (phonons) amplify electron scattering, impeding electron flow [18].

- Semiconductors & Insulators: Resistivity decreases with temperature. Increased thermal energy excites more electrons from the valence to the conduction band, generating more charge carriers (electron-hole pairs) and enhancing conductivity [18]. This negative temperature coefficient is exploited in thermistors and temperature-sensing applications.

Experimental Protocols for Characterizing Electrical Properties

Accurate measurement of conductivity is essential for material classification and quality control. The following established methodologies are employed based on the material type and required precision.

Direct Current (DC) Methods

- Four-Point Probe Method: This is a standard technique for measuring resistivity without the influence of contact resistance. A known DC current (I) is passed through the two outer probes, and the voltage drop (V) is measured between the two inner probes. The sheet resistance and resistivity are then calculated using geometric factors [15]. This method is highly accurate for thin films and bulk semiconductors.

- Two-Probe Method: A simpler method where a known DC voltage is applied, and the resulting current is measured using the same two probes. The resistance is calculated via Ohm's law. While straightforward, contact resistance can introduce significant errors, making it less accurate than the four-point probe [15].
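For the four-point probe, the geometric factor has a simple closed form in the most common idealized case. The sketch below assumes a collinear probe with equal spacing on a laterally large thin film whose thickness is much smaller than the probe spacing, for which the sheet resistance is (π / ln 2) · V / I. The function names and the sample values are illustrative; other geometries require additional correction factors.

```python
import math


def sheet_resistance(voltage, current):
    """Sheet resistance in ohm/square for an ideal thin film, collinear four-point probe."""
    return (math.pi / math.log(2.0)) * voltage / current


def resistivity(voltage, current, thickness_m):
    """Bulk resistivity in ohm*m: sheet resistance times film thickness."""
    return sheet_resistance(voltage, current) * thickness_m


def conductivity(voltage, current, thickness_m):
    """Conductivity in S/m, the reciprocal of the resistivity."""
    return 1.0 / resistivity(voltage, current, thickness_m)


# Hypothetical measurement: 1 mA through the outer probes,
# 2.5 mV across the inner probes, 100 nm film thickness
v, i, t = 2.5e-3, 1.0e-3, 100e-9
print(f"R_s   = {sheet_resistance(v, i):.3f} ohm/sq")
print(f"rho   = {resistivity(v, i, t):.3e} ohm*m")
print(f"sigma = {conductivity(v, i, t):.3e} S/m")
```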

Alternating Current (AC) Methods

- AC Bipolar Method / Impedance Spectroscopy: An AC signal is applied, and the impedance is measured over a range of frequencies. This is particularly useful for analyzing materials with high impedance or complex conduction mechanisms, such as ionic conductors in electrolytes or biological systems [15].

- Electromagnetic Induction Method: A non-contact technique where a varying magnetic field induces eddy currents in a conductive material. The resulting change in the coil's impedance is measured to determine conductivity. It is ideal for non-destructive testing of metals and other highly conductive samples [15].

Implications for SCF Convergence in Computational Analysis

The electronic properties that differentiate these material classes also present unique challenges for computational methods like Density Functional Theory (DFT), which relies on achieving self-consistent field (SCF) convergence.

Material-Specific Convergence Challenges

- Metals: Prone to "charge-sloshing" instabilities due to the high density of states at the Fermi level and the long-range nature of the Coulomb operator. This leads to slow convergence or divergence of the SCF cycle unless robust preconditioning and damping strategies are employed [4].

- Systems with Localized States: Materials containing transition metals with localized d- or f-orbitals, or surfaces with localized defect states, can suffer from convergence issues. Standard preconditioners are often ineffective for these systems, requiring specialized algorithms [4].

- Insulators and Large-Gap Semiconductors: Generally exhibit smoother potential changes between SCF iterations, making them less prone to charge-sloshing and often easier to converge than metals [4].

Strategies for Robust Convergence

To address these challenges, advanced SCF algorithms are required:

- Adaptive Damping Algorithms: These methods perform a line search in each SCF step to automatically determine an optimal damping parameter, ensuring monotonic energy decrease and significantly improving robustness for challenging systems like elongated supercells and transition-metal alloys [4].

- Preconditioning: Applying a preconditioner tailored to the system's dielectric properties can dampen long-wavelength charge oscillations in metals, accelerating convergence [4].

- Occupancy Smearing: For metallic systems, using a finite electronic temperature (e.g., Fermi-Dirac smearing) helps to stabilize convergence by smoothing the discontinuous change in orbital occupancy at the Fermi level [4].
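The effect of occupancy smearing can be illustrated with the Fermi-Dirac function itself. In the minimal sketch below, energies, the chemical potential, and the electronic temperature are all assumed to be in the same units (for example Hartree, with k_B T given directly as an energy); the function name and sample values are illustrative.

```python
import math


def fermi_dirac(energy, mu, temperature):
    """Fractional occupation of a level at `energy` for chemical potential `mu`."""
    x = (energy - mu) / temperature
    if x > 500.0:   # far above the Fermi level: avoid overflow in exp
        return 0.0
    if x < -500.0:  # far below the Fermi level
        return 1.0
    return 1.0 / (1.0 + math.exp(x))


# Levels close to the Fermi level receive fractional occupations,
# so a small shift in their energies changes the density smoothly.
mu, temperature = 0.0, 1e-3
for energy in (-5e-3, -1e-3, 0.0, 1e-3, 5e-3):
    print(f"{energy:+.0e}  {fermi_dirac(energy, mu, temperature):.4f}")
```

Without smearing, a level crossing the Fermi energy would switch between fully occupied and empty from one iteration to the next; the smooth occupation function removes this discontinuity.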

Visualizing the Electronic Structure and Its Impact

The following diagram illustrates the fundamental electronic differences between material classes and their link to computational challenges.

The Scientist's Toolkit: Key Reagents and Materials

Research into material properties relies on specific, well-characterized substances and computational tools.

Table 3: Essential Research Materials and Computational Tools

| Item | Function / Relevance |

|---|---|

| High-Purity Metals (Cu, Ag, Au) | Serve as standards for conductivity measurements and references in electronic device fabrication. |

| Intrinsic Semiconductors (Si, Ge) | Provide the baseline for understanding pure semiconductor behavior before doping. |

| Dopant Sources (B, P for Si) | Used to intentionally alter the charge carrier concentration, enabling the creation of n-type and p-type regions essential for devices. |

| Ultra-Wide Bandgap Materials (Ga₂O₃, Diamond, AlN) | Pushing the boundaries of high-power, high-temperature electronics, though they face significant doping challenges [19]. |

| ΔSCF Method | A computational (DFT) approach for calculating core-electron binding energies, crucial for interpreting X-ray photoelectron spectroscopy (XPS) data [20]. |

| Maximum Overlap Method (MOM) | A computational constraint used in SCF calculations to prevent the collapse of core-hole states in excited-state simulations, ensuring convergence to the correct electronic state [20]. |

| Multiwavelets (MWs) | A systematic, adaptive basis set used in quantum chemistry codes for all-electron calculations with strict error control, avoiding numerical instabilities common in Gaussian basis sets [20]. |

| CASSCF/NEVPT2 Protocol | A high-level wavefunction theory method used for accurately modeling strongly correlated electronic states in systems like color centers in diamond, which are promising for quantum sensing [21]. |

The divergent behaviors of insulators, semiconductors, and metals are a direct consequence of their intrinsic electronic band structures. This comparative guide has outlined their defining properties, measurement techniques, and a critical, often-overlooked aspect: their impact on the robustness of computational analysis. Understanding that metallic systems inherently pose greater challenges for SCF convergence due to charge-sloshing instabilities is essential for computational researchers. This knowledge informs the selection of appropriate, robust algorithms—such as adaptive damping and specialized preconditioning—ensuring reliable simulation outcomes across all material classes. As research progresses, particularly in complex areas like ultra-wide bandgap semiconductors and molecular systems with localized states, these considerations will become increasingly vital for the accurate computational design and discovery of new materials.

High-throughput (HT) methodologies are rapidly evolving, with experimental scales quickly expanding from 96-well plates to 384 and 1536 layouts, profoundly increasing the volume and complexity of data generated in fields like drug development and materials science [22]. This surge creates a critical bottleneck: the reliance on human input for data analysis and parameter tuning in traditional computational tools. Manual intervention becomes a significant impediment, limiting throughput, introducing bias, and hindering reproducibility. Consequently, robust, self-tuning algorithms are no longer a luxury but a necessity for scalable research. This guide objectively compares the performance of such automated algorithms against conventional alternatives, framing the analysis within the specific context of Self-Consistent Field (SCF) convergence robustness and mixing strategies, a cornerstone of computational electronic structure calculations.

Algorithm Comparison: Automated vs. Human-Dependent Workflows

The core challenge in many computational methods is their dependence on curated training datasets, manual parameter adjustments, and significant computational expertise, which prevents the goal of completely automated, high-throughput analysis from being achieved [22]. The table below provides a quantitative performance comparison of a self-supervised learning (SSL) algorithm against a popular human-dependent alternative, Cellpose (CP) 2.0, in the context of automated cell segmentation—a task analogous to achieving convergence in computational simulations.

Table 1: Quantitative Performance Comparison of Segmentation Algorithms

| Algorithm | Core Approach | Human Intervention | Reported F1 Score (Range) | Key Performance Limitation |

|---|---|---|---|---|

| Self-Supervised Learning (SSL) | Self-trains on end-user's data using optical flow between original and blurred images [22] | None; completely automated [22] | 0.771 - 0.888 [22] | N/A (Fully automated) |

| Cellpose (CP) 2.0 | Deep learning (CNN-based) with a "human-in-the-loop" feature [22] | Required; users must adjust cell parameters or segment specific cells for targeted training [22] | 0.454 - 0.882 [22] | Higher variance; primarily due to more false negatives [22] |

This data demonstrates that the robust, self-tuning SSL algorithm not only eliminates human intervention but also delivers consistently high performance with lower outcome variance. This mirrors the challenge in SCF convergence, where manual tuning of mixing parameters and convergence criteria can lead to inconsistent results and poor computational efficiency.

Experimental Protocols for Robust Convergence

Protocol for Self-Supervised Learning in Image Segmentation

The validated SSL methodology for automated cell segmentation operates as follows [22]:

- Input: A single original image (either live or fixed cell).

- Blurring: A Gaussian filter is applied to the original input image to create a blurred version.

- Optical Flow Calculation: Optical flow (OF) vector fields are computed between the original and the blurred image.

- Self-Labeling: These OF vectors serve as a basis to automatically self-label pixel classes ('cell' vs. 'background') for training.

- Classifier Training: An image-specific classifier is trained using this self-generated data, culminating in a robust, automated segmentation without any pre-trained models or manual input [22].

Protocol for Automated High-Throughput GW-BSE Calculations

In the domain of electronic structure calculations, automation is achieved through sophisticated workflows that manage complex parameter spaces. The following protocol is implemented for high-throughput GW and Bethe-Salpeter equation (BSE) simulations [23]:

- Preliminary DFT: A ground-state calculation using software like Quantum ESPRESSO is performed to obtain Kohn-Sham eigenvalues and wavefunctions [23].

- Convergence Algorithm: An improved algorithm efficiently retrieves converged values for the main interdependent parameters (e.g., plane-wave cutoffs, number of bands) by modeling the convergence surface in an N-dimensional space of variables [23].

- Error Handling & Management: The workflow, implemented in the AiiDA framework, encodes efficient error handling, memory, and parallelization management to ensure robustness without manual restarting [23].

- Post-Processing: An automatic GW band interpolation scheme using maximally localized Wannier functions (via Wannier90) reduces the computational burden while preserving accuracy [23].

Protocol for SCF Convergence in DFT

Achieving robust SCF convergence is critical in high-throughput density functional theory (DFT) calculations. The standard protocol involves [6]:

- Initialization: The SCF procedure begins with a guess for the density, typically a sum of atomic densities (rho) or from atomic orbitals (psi) [6].

- Iteration: The algorithm iteratively searches for a self-consistent density by solving the Kohn-Sham equations.

- Error Metric: The self-consistent error is calculated as the square root of the integral of the squared difference between the input and output density: err = √[ ∫ (ρ_out(x) - ρ_in(x))² dx ] [6].

- Convergence Check: Convergence is reached when this error falls below a predefined criterion. The default criterion is tied to NumericalQuality and scales with the system size (e.g., 1e-6 * √N_atoms for "Normal" quality) [6].

- Mixing & Damping: The potential is updated using a mixing scheme (e.g., MultiStepper, DIIS). The Mixing parameter (default 0.075) damps this update: V_new = V_old + Mixing * (V_computed - V_old) [6]. The algorithm may automatically adapt this value.

- Degeneracy Handling: For problematic convergence, the algorithm can automatically smooth occupation numbers around the Fermi level using a Degenerate key with a default energy width of 1e-4 a.u. to help convergence [6].
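The steps above can be sketched as a short loop. This is a toy illustration, not the implementation of any package: `toy_map` stands in for the full Kohn-Sham solve, and a single array plays the role of both the density (for the error metric) and the potential (for the mixing update), whereas a real code keeps the two separate. The function names, the grid spacing `dx`, and the toy map are assumptions; only the error formula, the 1e-6 · √N_atoms criterion, and the damped update with a mixing value of 0.075 follow the protocol described above.

```python
import math


def scf_error(field_in, field_out, dx):
    """Discretized err = sqrt( integral (out - in)^2 dx ) on a uniform grid."""
    return math.sqrt(sum((o - i) ** 2 for i, o in zip(field_in, field_out)) * dx)


def run_scf(compute_output, v0, dx, n_atoms, mixing=0.075, max_iter=500):
    """Damped fixed-point loop; returns (field, iterations, final error)."""
    criterion = 1e-6 * math.sqrt(n_atoms)
    v = list(v0)
    for iteration in range(1, max_iter + 1):
        v_computed = compute_output(v)
        err = scf_error(v, v_computed, dx)
        if err < criterion:
            return v, iteration, err
        # V_new = V_old + Mixing * (V_computed - V_old)
        v = [vo + mixing * (vc - vo) for vo, vc in zip(v, v_computed)]
    raise RuntimeError("SCF did not converge within max_iter iterations")


def toy_map(v):
    """Hypothetical stand-in for the SCF step; its fixed point is [1.0, 2.0]."""
    return [4.0 - 3.0 * v[0], 8.0 - 3.0 * v[1]]


field, n_iter, err = run_scf(toy_map, [0.0, 0.0], dx=1.0, n_atoms=4)
print(field, n_iter, err)
```

The toy map has slope -3, so the undamped iteration would diverge; with a mixing value of 0.075 each step contracts the error by a factor of 0.7, which shows why conservative damping can rescue an otherwise unstable cycle at the cost of more iterations.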

Visualizing Workflows and Convergence Logic

High-Throughput Automated Computational Workflow

This diagram illustrates the fully automated workflow for high-throughput materials simulations, from initial computation to final analysis [23].

Robust SCF Convergence Logic

This flowchart details the logic and decision points within a robust SCF convergence procedure, including the role of mixing strategies [6].

The Scientist's Toolkit: Essential Research Reagents & Computational Solutions

For researchers embarking on high-throughput computational projects, the following tools and frameworks are essential for developing and executing robust, automated workflows.

Table 2: Essential Tools for Automated High-Throughput Research

| Tool / Solution | Function / Role | Relevance to Robust Automation |

|---|---|---|

| AiiDA Framework [23] | A workflow management platform for automated computational science. | Encodes domain-specific knowledge into robust, reusable workflows with full data provenance, minimizing human intervention [23]. |

| Self-Supervised Learning (SSL) [22] | A machine learning approach for pixel classification in image analysis. | Eliminates the need for large, manually curated training datasets by self-training on the end-user's own data [22]. |

| Automated Convergence Algorithms [23] | Algorithms designed to efficiently find converged parameters in complex simulations. | Manages the interdependent parameter space of methods like GW-BSE, reducing the need for expert manual tuning [23]. |

| Quantum ESPRESSO [23] | An integrated suite of Open-Source computer codes for electronic-structure calculations. | Serves as a reliable engine for the preliminary DFT calculations within a larger automated HT workflow [23]. |

| Yambo [23] | A code for many-body perturbation theory calculations (GW and BSE). | Provides the core engine for excited-state properties within an automated workflow managed by AiiDA [23]. |

| Wannier90 [23] | A tool for obtaining maximally-localized Wannier functions. | Enables automatic and computationally efficient interpolation of band structures in post-processing steps [23]. |

Mixing Methodologies: From Linear Mixing to Advanced Accelerators

The Self-Consistent Field (SCF) method is the fundamental algorithm for solving electronic structure problems in computational chemistry and materials science. This iterative procedure requires finding a consistent solution where the Hamiltonian depends on the electron density, which in turn is obtained from the Hamiltonian [11]. The efficiency and stability of this cycle are paramount for practical applications in fields ranging from drug discovery to materials design [24]. Mixing strategies play a crucial role in this process, controlling how information from previous iterations is used to construct the next input for the SCF cycle.

Among various available techniques, linear mixing serves as the fundamental baseline approach against which more sophisticated methods are compared. Its simplicity offers both advantages and limitations, making it an essential starting point for understanding SCF convergence behavior. In this guide, we objectively compare the performance of linear mixing with advanced alternatives like Pulay and Broyden methods, providing experimental data to illustrate their characteristics across different chemical systems.

Understanding Linear Mixing and Its Alternatives

The Linear Mixing Algorithm

Linear mixing (also known as simple mixing or damping) is the most straightforward approach to SCF convergence acceleration. It employs a fixed damping parameter to control the proportion of the new output density (or Hamiltonian) that is mixed with the previous input. Mathematically, this can be represented as:

\[ \rho_{n+1} = (1 - \alpha)\,\rho_n + \alpha\,\rho_n^{\text{output}} \]

Where \( \rho_n \) is the input density at iteration \( n \), \( \rho_n^{\text{output}} \) is the resulting output density, and \( \alpha \) is the mixing weight (damping parameter) that controls the step size. The SIESTA documentation refers to this parameter as SCF.Mixer.Weight, with typical values ranging from 0.1 to 0.25 for linear mixing [11]. This damping factor determines what fraction of the newly computed density matrix is mixed with the old one. For example, a weight of 0.25 means the next input density contains 25% of the newly computed density and 75% of the previous one [11].
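The update rule above can be tried on a toy problem. The following is a minimal sketch, not SIESTA's implementation: `toy_map` is a hypothetical two-component linear stand-in for the SCF step, chosen so that undamped iteration diverges, and the function names and the max-abs convergence test are assumptions made for illustration.

```python
import numpy as np

# Hypothetical stand-in for one SCF step: rho_out = A @ rho + b.
# The eigenvalue -1.5 makes the undamped iteration (weight = 1) diverge.
A = np.diag([-1.5, 0.2])
b = np.array([1.0, 1.0])

def toy_map(rho):
    return A @ rho + b

def linear_mixing(scf_map, rho0, weight=0.25, tol=1e-8, max_iter=200):
    """Iterate rho_{n+1} = (1 - weight) * rho_n + weight * rho_n^output."""
    rho = np.asarray(rho0, dtype=float)
    for n in range(1, max_iter + 1):
        rho_out = scf_map(rho)
        # Converged when input and output agree to within tol.
        if np.max(np.abs(rho_out - rho)) < tol:
            return rho, n, True
        rho = (1.0 - weight) * rho + weight * rho_out
    return rho, max_iter, False

rho_damped, n_damped, ok_damped = linear_mixing(toy_map, np.zeros(2), weight=0.25)
rho_plain, n_plain, ok_plain = linear_mixing(toy_map, np.zeros(2), weight=1.0)
print(ok_damped, n_damped, ok_plain)
```

With the damped weight the iteration settles on the fixed point of the toy map, while the undamped run exhausts its iteration budget, which mirrors the trade-off between step size and stability described above.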

Alternative Mixing Schemes

More advanced mixing schemes have been developed to overcome the limitations of simple linear mixing:

Pulay Mixing (DIIS): Also known as Direct Inversion in the Iterative Subspace, this method is the default in SIESTA and builds an optimized combination of past residuals to accelerate convergence [11]. It maintains a history of previous steps (controlled by SCF.Mixer.History, defaulting to 2) and uses them to generate an improved guess for the next iteration.

Broyden Mixing: A quasi-Newton scheme that updates the mixing using an approximate Jacobian [11]. It often demonstrates performance similar to Pulay but can show advantages for metallic or magnetic systems.

These advanced methods differ fundamentally from linear mixing by using information from several previous iterations rather than just the immediate predecessor, which lets them extrapolate more accurately toward the converged solution.

The SCF Cycle with Mixing

The following diagram illustrates the general SCF workflow and where mixing occurs in the iterative process, applicable to both linear and more advanced methods:

SCF Cycle with Mixing. The mixing procedure generates a new input density (or Hamiltonian) for the next iteration based on current and previous results [11].

Performance Comparison of Mixing Schemes

Experimental Comparison Methodology

To objectively compare linear mixing with alternative approaches, we establish a standardized evaluation protocol based on the SIESTA tutorial methodology [11]. The testing involves two contrasting systems: a simple molecule (methane, CH₄) representing localized electronic states, and a metallic system (iron cluster, Fe₃) representing delocalized electronic states with potential convergence challenges.

The key performance metrics include:

- Number of SCF iterations: The count of cycles required to reach convergence criteria

- Convergence success rate: Percentage of tests achieving convergence within maximum iteration limits

- Stability: Resistance to oscillations or divergence during iteration

- Computational cost: Time per iteration and total time to solution

Convergence criteria follow SIESTA defaults with both density matrix (SCF.DM.Tolerance = 10⁻⁴) and Hamiltonian (SCF.H.Tolerance = 10⁻³ eV) criteria enabled [11]. All tests use Max.SCF.Iterations = 200 to accommodate difficult cases.
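Because both criteria are enabled, an iteration counts as converged only when both are met. A minimal sketch of that check follows; the function name and argument names are hypothetical, and only the two default thresholds are taken from the text above.

```python
def scf_converged(d_dmax, d_hmax_ev, dm_tol=1e-4, h_tol_ev=1e-3):
    """Return True only if both the density-matrix and Hamiltonian criteria hold.

    d_dmax    : largest change in the density matrix between iterations
    d_hmax_ev : largest change in the Hamiltonian between iterations, in eV
    """
    return d_dmax < dm_tol and d_hmax_ev < h_tol_ev

print(scf_converged(5e-5, 2e-3))  # density matrix settled, Hamiltonian not yet
print(scf_converged(5e-5, 5e-4))  # both criteria satisfied
```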

Quantitative Performance Data

The table below summarizes experimental results for different mixing methods applied to the test systems, adapted from SIESTA tutorial exercises [11]:

Table 1: Performance Comparison of Mixing Methods for Molecular System (CH₄)

| Mixer Method | Mixer Weight | Mixer History | # of Iterations | Convergence Stability |
|---|---|---|---|---|
| Linear | 0.1 | - | 48 | Stable |
| Linear | 0.2 | - | 35 | Stable |
| Linear | 0.4 | - | 28 | Occasional oscillations |
| Linear | 0.6 | - | Diverged | Unstable |
| Pulay | 0.1 | 2 | 22 | Stable |
| Pulay | 0.5 | 2 | 12 | Stable |
| Pulay | 0.9 | 2 | 9 | Mostly stable |
| Broyden | 0.5 | 4 | 10 | Stable |
| Broyden | 0.7 | 6 | 8 | Stable |

Table 2: Performance Comparison for Metallic System (Fe Cluster)

| Mixer Method | Mixer Weight | Mixer History | # of Iterations | Convergence Stability |
|---|---|---|---|---|
| Linear | 0.05 | - | 127 | Stable but slow |
| Linear | 0.1 | - | 89 | Stable |
| Linear | 0.2 | - | Diverged | Unstable |
| Pulay | 0.2 | 4 | 45 | Stable |
| Pulay | 0.5 | 6 | 28 | Stable |
| Broyden | 0.3 | 6 | 24 | Stable |
| Broyden | 0.5 | 8 | 18 | Stable |

Comparative Analysis of Results

The experimental data reveals several important patterns:

Linear mixing effectiveness: Linear mixing performs adequately for the simple molecular system at moderate mixing weights (0.1-0.2 converge stably in Table 1), shows occasional oscillations at 0.4, and diverges at 0.6, so the higher weights that would otherwise accelerate convergence are not usable [11]. For the metallic system, linear mixing already diverges at a weight of 0.2 and requires significantly smaller weights (0.05-0.1) to remain stable, resulting in slow convergence (89-127 iterations in Table 2).

Advanced method advantages: Pulay and Broyden methods consistently outperform linear mixing, achieving convergence in fewer iterations while supporting higher mixing weights without instability [11]. The performance gap widens for challenging systems like metals or open-shell configurations.

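History parameter impact: For Pulay and Broyden methods, increasing the history parameter (within reasonable limits, typically 4-8) generally improves convergence speed, though with diminishing returns and increased memory requirements [11]. In Tables 1 and 2 the weight and the history length were changed together for most rows, so the tables alone do not isolate the effect of the history length.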

System-dependent performance: All methods show system-dependent behavior, with metallic systems presenting greater convergence challenges regardless of mixing strategy. This underscores the importance of system-specific parameter tuning.
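The weight thresholds observed for linear mixing can be rationalized with a standard linearized stability estimate. This is a sketch under stated assumptions rather than a result from the SIESTA tutorial: it assumes the SCF map is differentiable near the self-consistent density \( \rho^{*} \), and the symbols \( J \) (Jacobian of the map at \( \rho^{*} \)), \( \lambda_i \) (its eigenvalues, taken to be real and below 1), and \( e_n \) are introduced here for illustration.

```latex
\begin{align}
e_n &\equiv \rho_n - \rho^{*}, \qquad
\rho_n^{\text{output}} \approx \rho^{*} + J\, e_n \\
e_{n+1} &= (1 - \alpha)\, e_n + \alpha\, J e_n
         = \bigl[\, I - \alpha\,(I - J) \,\bigr]\, e_n \\
\text{convergence} &\iff \bigl|\, 1 - \alpha\,(1 - \lambda_i) \,\bigr| < 1
         \quad \text{for every eigenvalue } \lambda_i \text{ of } J \\
\lambda_i < 1 \ \text{(real)} &\implies 0 < \alpha < \frac{2}{1 - \lambda_{\min}}
\end{align}
```

Under this estimate, the more negative the smallest eigenvalue \( \lambda_{\min} \) is, the smaller the largest admissible weight becomes. A system whose density responds strongly to changes in the input therefore tolerates only small weights, which is consistent with the lower divergence threshold seen for the metallic cluster in Table 2.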

Implementation Protocols

Protocol for Linear Mixing Implementation

For researchers implementing linear mixing in SCF calculations, the following step-by-step protocol is recommended:

Initial setup: Begin with a conservative mixing weight (SCF.Mixer.Weight = 0.1 in SIESTA, mixing_beta = 0.1 in Quantum ESPRESSO, or AMIX = 0.1 together with BMIX = 0.0001 in VASP, where the near-zero BMIX reduces Kerker mixing to plain linear mixing) [11] [25]. Ensure proper initial guess generation, as this significantly impacts convergence behavior [26].

System assessment: Evaluate your system's characteristics:

- For simple, insulating molecular systems: try weights 0.2-0.3

- For metallic or delocalized systems: use smaller weights 0.05-0.1

- For heterogeneous systems (alloys, oxides): consider very small weights (0.015-0.03) as suggested by ADF documentation [26]

Iteration and monitoring: Run SCF iterations while monitoring convergence metrics:

- Track density matrix changes (dDmax in SIESTA)

- Monitor Hamiltonian changes (dHmax in SIESTA)

- Observe total energy fluctuations between iterations

Parameter adjustment: If convergence is slow but stable, gradually increase the mixing weight in increments of 0.05. If oscillations occur, immediately reduce the mixing weight.

Fallback strategy: If linear mixing fails to converge after parameter adjustments, switch to Pulay or Broyden methods with moderate history (4-6) and an initial weight of 0.2-0.3.
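The parameter-adjustment step of this protocol can be expressed as a small rule. The sketch below is illustrative only: the function name, the halving factor applied on oscillation, the 0.9 ratio used to decide that progress is "slow", and the weight bounds are all assumptions; only the 0.05 increment comes from the protocol above.

```python
def adjust_weight(weight, residuals, step=0.05, shrink=0.5,
                  slow_ratio=0.9, w_min=0.01, w_max=0.6):
    """Suggest the next mixing weight from the recent residual history.

    residuals : sequence of convergence metrics (for example dDmax), oldest first
    """
    if len(residuals) < 2:
        return weight  # not enough history to judge the trend
    previous, latest = residuals[-2], residuals[-1]
    if latest > previous:
        # Residual grew: treat as oscillation or divergence and back off at once.
        return max(w_min, weight * shrink)
    if latest > slow_ratio * previous:
        # Residual shrinks, but slowly: take a slightly bolder step.
        return min(w_max, weight + step)
    return weight  # converging at a healthy rate, leave the weight alone

print(adjust_weight(0.10, [1.0, 0.95]))  # slow but stable
print(adjust_weight(0.20, [1.0, 1.30]))  # residual increased
print(adjust_weight(0.20, [1.0, 0.50]))  # healthy convergence
```

Comparing only the last two residuals keeps the rule simple; a production workflow would probably look at a longer window before changing the weight.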

Protocol for Advanced Mixing Methods

When implementing Pulay or Broyden mixing:

Method selection: Choose Pulay as the default advanced method (SCF.Mixer.Method = Pulay in SIESTA), as it represents a good balance of performance and stability for most systems [11]. Reserve Broyden for metallic or magnetic systems where it may offer advantages.

Parameter initialization:

- Set initial mixing weight to 0.3-0.5

- Configure history parameter to 4-6 for most systems

- For difficult systems, increase history to 8-10 as needed

Convergence monitoring: Watch for signs of divergence in early iterations, which may indicate excessively aggressive parameters requiring reduction.
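For scripted or high-throughput use, the settings above can be written out programmatically. The helper below is hypothetical and not part of SIESTA; it only formats the three mixer keywords named in this guide as plain `keyword value` lines, with defaults taken from the lower end of the ranges recommended above.

```python
def siesta_mixer_block(method="Pulay", weight=0.3, history=4):
    """Format SIESTA mixer keywords as text lines for an input file."""
    allowed = ("Linear", "Pulay", "Broyden")
    if method not in allowed:
        raise ValueError(f"method must be one of {allowed}")
    if not 0.0 < weight <= 1.0:
        raise ValueError("weight must lie in (0, 1]")
    lines = [
        f"SCF.Mixer.Method   {method}",
        f"SCF.Mixer.Weight   {weight}",
    ]
    if method != "Linear":
        # History only matters for methods that reuse earlier iterations.
        lines.append(f"SCF.Mixer.History  {history}")
    return "\n".join(lines)

print(siesta_mixer_block())
print(siesta_mixer_block("Broyden", weight=0.4, history=8))
```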

System-Specific Recommendations

Different chemical systems require tailored approaches:

Simple molecules (CH₄, H₂O): Linear mixing with weight 0.2-0.3 works efficiently. Pulay with weight 0.5-0.7 provides faster convergence.

Metallic systems: Avoid linear mixing when possible. Use Broyden method with moderate weights (0.3-0.5) and extended history (6-8). Consider electron smearing to improve convergence [26].

Open-shell and magnetic systems: Broyden mixing often performs best. Ensure correct spin multiplicity settings [26].

Heterogeneous systems (alloys, oxides, surfaces): Use the "local-TF" mixing mode in Quantum ESPRESSO (mixing_mode = 'local-TF') or reduced linear mixing weights (0.015-0.03 in ADF) [25] [26].
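These recommendations can be kept as a small lookup table so that a workflow picks a starting point by system class. The sketch below simply restates the ranges listed above; the dictionary keys, field names, and the function name are hypothetical.

```python
# Starting points restated from the recommendations above; ranges are (low, high).
STARTING_POINTS = {
    "simple_molecule": {
        "method": "Pulay", "weight": (0.5, 0.7), "history": None,
        "note": "linear mixing with weight 0.2-0.3 also works efficiently",
    },
    "metallic": {
        "method": "Broyden", "weight": (0.3, 0.5), "history": (6, 8),
        "note": "consider electron smearing",
    },
    "open_shell_magnetic": {
        "method": "Broyden", "weight": None, "history": None,
        "note": "check the spin multiplicity settings",
    },
    "heterogeneous": {
        "method": "Linear", "weight": (0.015, 0.03), "history": None,
        "note": "ADF range; in Quantum ESPRESSO try the local-TF mixing mode",
    },
}

def suggest_mixing(system_class):
    """Return the recommended starting parameters for a system class."""
    try:
        return STARTING_POINTS[system_class]
    except KeyError:
        known = ", ".join(sorted(STARTING_POINTS))
        raise ValueError(f"unknown system class {system_class!r}; known: {known}")

print(suggest_mixing("metallic"))
```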

Table 3: Research Reagent Solutions for SCF Convergence Studies

| Tool/Resource | Function | Application Context |
|---|---|---|
| SIESTA Code | DFT simulation package with configurable mixing | Testing mixing algorithms across diverse systems [11] |
| Quantum ESPRESSO | Plane-wave DFT code | Solid-state and periodic system convergence studies [25] |
| ADF Modeling Suite | Molecular DFT software | Molecular system SCF convergence analysis [26] |
| VC Hamiltonians | Vibronic coupling models | Non-adiabatic dynamics simulations [27] |
| MQB Simulators | Mixed-qudit-boson quantum simulators | Quantum simulation of chemical dynamics [27] |
| DIIS Algorithm | Convergence acceleration | Advanced mixing beyond linear damping [11] [26] |
| Electron Smearing | Fractional occupation distributions | Metallic systems with small HOMO-LUMO gaps [26] |
| MESA/LISTi/EDIIS | Alternative SCF accelerators | Difficult cases where standard methods fail [26] |

Linear mixing serves as an important baseline in the landscape of SCF convergence strategies, offering simplicity and predictable behavior at the cost of slower convergence, particularly for challenging systems. Our experimental comparison demonstrates that while linear mixing with appropriate damping control remains viable for simple molecular systems, advanced methods like Pulay and Broyden consistently deliver superior performance across diverse chemical environments.

The optimal mixing strategy depends heavily on system characteristics—simple molecules tolerate more aggressive linear mixing parameters, while metallic and heterogeneous systems require the sophistication of history-dependent methods. Researchers should begin with conservative linear mixing parameters for unknown systems, then progress to advanced methods while leveraging system-specific optimizations. This structured approach ensures robust SCF convergence while maximizing computational efficiency in drug discovery and materials design applications.

Achieving self-consistency in electronic structure calculations is a fundamental challenge in computational chemistry and materials science. The Self-Consistent Field (SCF) method, central to Kohn-Sham Density-Functional Theory (KS-DFT) and Hartree-Fock (HF) calculations, requires finding a solution where the output potential or density matches the input. The efficiency and robustness of the SCF convergence process are critically dependent on the mixing strategy employed to update the solution between iterations. Poorly chosen mixing strategies can lead to slow convergence, oscillatory behavior, or complete failure to converge, particularly for challenging systems such as metals, surfaces, and molecules with localized states.

Several mixing algorithms have been developed to address these challenges. Simple linear mixing uses a fixed damping parameter to combine input and output densities or potentials, but often proves inefficient. More sophisticated techniques like Anderson acceleration and Direct Inversion in the Iterative Subspace (DIIS), also known as Pulay mixing, leverage historical iteration data to generate better updates. In this guide, we objectively compare the performance of Pulay (DIIS) mixing against other common alternatives, providing experimental data and methodological details to help researchers select the optimal approach for their systems.

Understanding Pulay (DIIS) Mixing

Theoretical Foundation

DIIS (Direct Inversion in the Iterative Subspace), developed by Peter Pulay, is an extrapolation technique designed to accelerate and stabilize the convergence of SCF iterations [28]. Its core principle is to minimize an error vector, typically the residual between input and output potentials or densities, within a subspace spanned by previous iteration vectors.

The method constructs a linear combination of approximate error vectors from previous iterations:

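\[ e_{m+1} = \sum_{i=1}^{m} c_i e_i \]

The coefficients \( c_i \) are determined by minimizing the norm of \( e_{m+1} \) under the constraint that \( \sum_{i} c_i = 1 \). This constrained minimization problem is solved using Lagrange multipliers, leading to a system of linear equations [28]:

\[ \begin{pmatrix} B_{11} & \cdots & B_{1m} & -1 \\ \vdots & \ddots & \vdots & \vdots \\ B_{m1} & \cdots & B_{mm} & -1 \\ 1 & \cdots & 1 & 0 \end{pmatrix} \begin{pmatrix} c_1 \\ \vdots \\ c_m \\ \lambda \end{pmatrix} = \begin{pmatrix} 0 \\ \vdots \\ 0 \\ 1 \end{pmatrix} \]

Where \( B_{ij} = \langle e_j, e_i \rangle \) is the inner product of error vectors and \( \lambda \) is the Lagrange multiplier. The coefficients are then used to generate the next trial vector:

\[ p_{m+1} = \sum_{i=1}^{m} c_i p_i \]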

This approach effectively extrapolates the solution toward the null vector of the error, significantly accelerating convergence compared to simple fixed damping methods.
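The procedure can be sketched in a few lines of NumPy. This is an illustrative sketch under stated assumptions, not the implementation of any particular code: `toy_map` is a hypothetical linear stand-in for the SCF step, the error vector is taken as output minus input, and the next input is built as \( \sum_i c_i (\rho_i + w\, e_i) \), a damped variant that reduces to the trial-vector formula above when \( w = 1 \) and the trial vectors are the outputs. Rescaling \( B \) and using a least-squares solve are numerical safeguards added here.

```python
from collections import deque
import numpy as np

# Hypothetical stand-in for one SCF step (same form as a linear response model).
A = np.diag([-1.5, 0.2])
b = np.array([1.0, 1.0])

def toy_map(rho):
    return A @ rho + b

def diis_coefficients(errors):
    """Solve the Lagrange-multiplier system for c_i with sum(c_i) = 1."""
    m = len(errors)
    E = np.array(list(errors))
    B = E @ E.T                      # B_ij = <e_i, e_j>
    scale = np.max(np.abs(B))
    if scale > 0.0:
        B = B / scale                # rescaling changes lambda only, not c
    M = np.zeros((m + 1, m + 1))
    M[:m, :m] = B
    M[:m, m] = -1.0                  # multiplier column
    M[m, :m] = 1.0                   # constraint row: sum of c_i equals 1
    rhs = np.zeros(m + 1)
    rhs[m] = 1.0
    # lstsq tolerates the near-singular B that linearly dependent errors produce.
    solution = np.linalg.lstsq(M, rhs, rcond=None)[0]
    return solution[:m]

def pulay_mixing(scf_map, rho0, weight=0.3, history=4, tol=1e-8, max_iter=200):
    rho = np.asarray(rho0, dtype=float)
    inputs, errors = deque(maxlen=history), deque(maxlen=history)
    for n in range(1, max_iter + 1):
        err = scf_map(rho) - rho
        if np.max(np.abs(err)) < tol:
            return rho, n, True
        inputs.append(rho)
        errors.append(err)
        c = diis_coefficients(errors)
        # Extrapolated input plus a damped step along the extrapolated error.
        rho = sum(ci * (p + weight * e) for ci, p, e in zip(c, inputs, errors))
    return rho, max_iter, False

rho_diis, n_diis, ok_diis = pulay_mixing(toy_map, np.zeros(2))
print(ok_diis, n_diis, rho_diis)
```

On this two-component linear toy problem the extrapolation reaches the fixed point after only a handful of iterations, because a few stored error vectors already span the whole space. Real SCF problems are nonlinear and far larger, so the gain is less dramatic there.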

Algorithmic Workflow

The following diagram illustrates the logical workflow and key decision points in a standard DIIS implementation:

Comparative Analysis of Mixing Methods

We evaluate mixing methods across multiple metrics critical for production computational environments. The following table summarizes quantitative performance data gathered from various computational studies:

Table 1: Comparative Performance of SCF Mixing Methods