Optimizing Mixing Parameters for SCF Stability: A Guide for Biomedical Researchers and Drug Developers

Abstract

This article provides a comprehensive exploration of how mixing parameters critically influence Stem Cell Fraction (SCF) stability, a key factor in developing predictable stem cell therapies. It covers foundational concepts of SCF quantification and stability, details computational and experimental methodologies for parameter optimization, addresses common troubleshooting scenarios, and validates approaches through comparative analysis of predictive models. Tailored for researchers, scientists, and drug development professionals, this guide synthesizes current knowledge to enhance the biomanufacturing and therapeutic application of mesenchymal stem cells.

Understanding SCF Stability and the Critical Role of Mixing Parameters

Defining Stem Cell Fraction (SCF) and Its Impact on Therapeutic Outcomes

Stem Cell Fraction (SCF) represents the proportion of true stem cells within a heterogeneous cell population, serving as a critical quality attribute for cell-based therapies. This technical guide examines SCF's fundamental role in determining the efficacy, potency, and reproducibility of stem cell treatments. Emerging evidence indicates that SCF values exhibit significant inter-donor variation, ranging from 7% to 77% in mesenchymal stem cell (MSC) preparations, directly influencing therapeutic predictability. Within the context of mixing parameter effects on SCF stability, this review explores how culture conditions, donor characteristics, and biomanufacturing processes impact SCF maintenance. For researchers and drug development professionals, we provide comprehensive experimental protocols for SCF quantification, detailed analytical frameworks for stability assessment, and standardized methodologies to enhance clinical translation of stem cell therapies.

Definition and Biological Significance

Stem Cell Fraction (SCF) refers to the proportion of functional stem cells within a heterogeneous, mixed-cell population intended for therapeutic applications [1]. Unlike homogeneous pharmaceutical compounds, stem cell preparations are complex mixtures of stem cells, committed progenitor cells, and differentiated cells, each with distinct regenerative capacities [1]. The SCF represents the biologically active component responsible for the therapeutic effects observed in stem cell-based treatments, including self-renewal, multi-lineage differentiation, and tissue regeneration [2] [3].

The concept of SCF has emerged as a fundamental parameter in regenerative medicine due to its direct correlation with treatment outcomes. Stem cells are defined by their unique capabilities for self-renewal (the ability to divide and produce identical copies of themselves) and differentiation (the ability to develop into specialized cell types) [3]. These characteristics make them promising candidates for repairing and regenerating damaged tissues and organs across numerous clinical applications, from neurodegenerative disorders to cardiovascular regeneration [2] [3].

The Critical Need for SCF Quantification

A persistent limitation in the development of predictable stem cell therapies (SCTs) has been the inability to accurately determine optimal stem cell dosing [1] [4]. Unlike pharmaceutical and biopharmaceutical medicines with precisely quantifiable active ingredients, stem cell therapies have lacked validated methodologies for accurate stem cell quantification [1]. This deficiency has resulted in unpredictable, difficult-to-compare, and poorly reproducible outcomes in clinical trials and approved stem cell treatments [1].
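To make the dosing problem concrete, the short sketch below works through the arithmetic for two preparations at the 7% and 77% SCF extremes reported in this article. The function names and the one-million-cell dose are hypothetical illustrations, not part of any cited protocol.

```python
def stem_cell_dose(total_cells: float, scf: float) -> float:
    """Stem cells contained in a dose of `total_cells` at a given SCF (0 to 1)."""
    if not 0.0 <= scf <= 1.0:
        raise ValueError("scf must be a fraction between 0 and 1")
    return total_cells * scf


def total_cells_for_target(target_stem_cells: float, scf: float) -> float:
    """Total cells needed to deliver `target_stem_cells` at a given SCF."""
    if not 0.0 < scf <= 1.0:
        raise ValueError("scf must be a fraction in (0, 1]")
    return target_stem_cells / scf


if __name__ == "__main__":
    dose = 1_000_000  # hypothetical nominal dose, in total cells
    for scf in (0.07, 0.77):  # extremes of the reported inter-donor range
        print(f"SCF {scf:.0%}: {stem_cell_dose(dose, scf):,.0f} stem cells")
```

At the same nominal cell count, the two preparations differ elevenfold in stem cell content, which is why a dose specified only in total cells is hard to compare across donors.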

The current minimal criteria for defining human MSCs—plastic adherence, specific surface marker expression (CD105, CD73, CD90), and tri-lineage differentiation potential—fail to quantify the actual stem cell fraction within these heterogeneous populations [1]. Consequently, phenotypically similar MSC preparations from different tissue sources may demonstrate markedly different growth kinetics and therapeutic efficacy based on variations in their SCF composition [1]. This understanding has driven the development of novel quantification methodologies like Kinetic Stem Cell (KSC) counting, which provides the reproducible, accurate determination of SCF needed for standardized therapies [1] [4].

Methodologies for SCF Quantification

Current Limitations in Stem Cell Characterization

Traditional approaches to stem cell characterization rely heavily on surface marker expression through flow cytometry and functional differentiation assays toward osteogenic, adipogenic, and chondrogenic lineages [1] [5]. While these methods confirm mesenchymal lineage, they provide limited information about the actual functional stem cell frequency within a population. The absence of a specific definitive marker for MSCs further complicates accurate SCF determination [1]. Research has demonstrated that MSC populations meeting standard characterization criteria can exhibit vastly different functional capacities based on donor source and culture history [5].

Flow cytometry-based immunophenotyping, while useful for quality control, cannot distinguish between stem cells and committed progenitors with similar surface markers [1]. Similarly, differentiation assays demonstrate potential rather than quantify the frequency of cells capable of initiating and sustaining tissue regeneration. These limitations have highlighted the need for more sophisticated quantitative approaches to measure the actual functional stem cell component in therapeutic preparations [5].

Kinetic Stem Cell (KSC) Counting Methodology

KSC counting represents a novel computational approach that enables routine, reproducible, and accurate determination of SCF in heterogeneous tissue cell populations [1] [4]. This methodology employs a computer simulation system that analyzes cell culture kinetic data to determine the functional stem cell fraction based on proliferative capacity and self-renewal characteristics.

The experimental workflow for KSC counting involves several critical steps:

- Serial Cell Culture: Cells are cultured in triplicate in standardized conditions and serially passaged at consistent intervals (e.g., transfer of 1/20 of total recovered cells every 72 hours) [1].

- Viability Assessment: At each passage, both live and dead cells are counted manually using trypan blue exclusion and hemocytometer methods [1].

- Data Collection: Cumulative population doubling (CPD) and dead cell fraction data are collected throughout the entire serial culture period until no cells can be detected [1].

- Computational Analysis: Live-cell and dead-cell count data are input into specialized software (TORTOISE Test) to derive the initial SCF of the cell preparation [1].

- Stability Determination: The SCF half-life (SCFHL) during subsequent serial culture is determined using complementary software (RABBIT Count) to assess population stability [1] [4].
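The data-collection step above can be sketched in a few lines. This is a minimal illustration of how cumulative population doublings and dead cell fraction might be tabulated from serial passage counts, assuming a fixed 1/20 transfer at each passage; it is not the TORTOISE Test or RABBIT Count software, and all function names are hypothetical.

```python
import math


def cumulative_population_doublings(initial_seed, live_counts, split_ratio=20):
    """Return the CPD after each passage.

    initial_seed: live cells seeded at the start of the first passage.
    live_counts:  live cells recovered at the end of each passage.
    split_ratio:  1/split_ratio of recovered cells is carried forward
                  (20 matches the 1/20 transfer described in the text).
    """
    cpd, total, seeded = [], 0.0, float(initial_seed)
    for recovered in live_counts:
        if recovered <= 0 or seeded <= 0:
            break  # culture exhausted: no detectable cells remain
        total += math.log2(recovered / seeded)  # doublings in this passage
        cpd.append(total)
        seeded = recovered / split_ratio  # cells transferred to the next passage
    return cpd


def dead_cell_fraction(live, dead):
    """Fraction of counted cells that take up trypan blue (scored as dead)."""
    counted = live + dead
    return dead / counted if counted else 0.0


if __name__ == "__main__":
    # Hypothetical counts for one replicate, for illustration only.
    print(cumulative_population_doublings(1000, [20000, 18000, 9000]))
    print(dead_cell_fraction(live=18000, dead=2000))
```

A passage in which fewer cells are recovered than were seeded contributes a negative value, so the CPD curve flattens and then declines as the culture approaches exhaustion.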

This methodology revealed for the first time that SCF within human alveolar bone-derived MSC (aBMSC) preparations varies significantly among donors, ranging from 7% to 77% (ANOVA p < 0.0001) [1] [4].

Limiting Dilution Assays for Functional Assessment

Limiting dilution techniques provide a functional quantitative measure of stem cell frequency based on differentiation potential rather than proliferative capacity [5]. This approach is particularly valuable for assessing specific lineage commitment potential and detecting changes in stem cell quality across passages and donors.

The experimental protocol involves:

- Cell Plating: MSCs are plated at progressively decreasing densities (e.g., 1000, 500, 250, 125, 63, and 32 cells/well) across multiple wells (typically 48 wells/dilution) [5].

- Differentiation Induction: After cell adhesion, expansion media is replaced with lineage-specific differentiation media (e.g., adipogenic differentiation media) [5].

- Media Maintenance: Differentiation media is supplemented regularly (every 3-5 days) throughout the differentiation period (typically 21 days) [5].

- Staining and Analysis: Plates are fixed and stained with lineage-specific dyes (e.g., Oil Red O for adipogenesis), and wells containing at least one differentiated cell are scored positive [5].

- Frequency Calculation: Precursor frequencies are determined by plotting the fraction of non-responding wells against cell dilution on a semi-logarithmic plot. The inverse of the cell dose corresponding to 37% non-responding wells represents the precursor frequency [5].

This technique has revealed substantial donor-dependent variations in adipogenic potential, with precursor frequencies ranging from 1 in 76 cells to 1 in 2035 cells at later passages [5].
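The 37% rule in the frequency calculation follows from the single-hit Poisson model, in which the fraction of non-responding wells at cell dose d is exp(-f·d) for precursor frequency f; at d = 1/f that fraction is e^-1, or about 37%. The sketch below estimates f with a simple least-squares line through the origin on the semi-logarithmic plot. This fitting choice is an assumption made for brevity (maximum-likelihood fitting is a common alternative), and the function name is hypothetical.

```python
import math


def precursor_frequency(doses, negative_fractions):
    """Estimate precursor frequency f from limiting dilution data.

    doses:              cells plated per well at each dilution.
    negative_fractions: fraction of non-responding wells at each dilution.
    Fits ln(F0) = -f * dose through the origin by least squares.
    Dilutions with no negative wells are skipped because ln(0) is undefined.
    """
    num = den = 0.0
    for dose, f0 in zip(doses, negative_fractions):
        if f0 <= 0.0:
            continue
        num += dose * math.log(f0)
        den += dose * dose
    if den == 0.0:
        raise ValueError("no usable dilutions")
    return -num / den


if __name__ == "__main__":
    doses = [1000, 500, 250, 125, 63, 32]  # densities from the protocol above
    # Synthetic fractions generated from a known frequency of 1 in 100 cells.
    fractions = [math.exp(-d / 100) for d in doses]
    f = precursor_frequency(doses, fractions)
    print(f"about 1 precursor per {1 / f:.0f} cells")
```

The reciprocal of the fitted frequency is the cell dose at which 37% of wells are expected to remain negative, which is the value read off the plot in the manual method.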

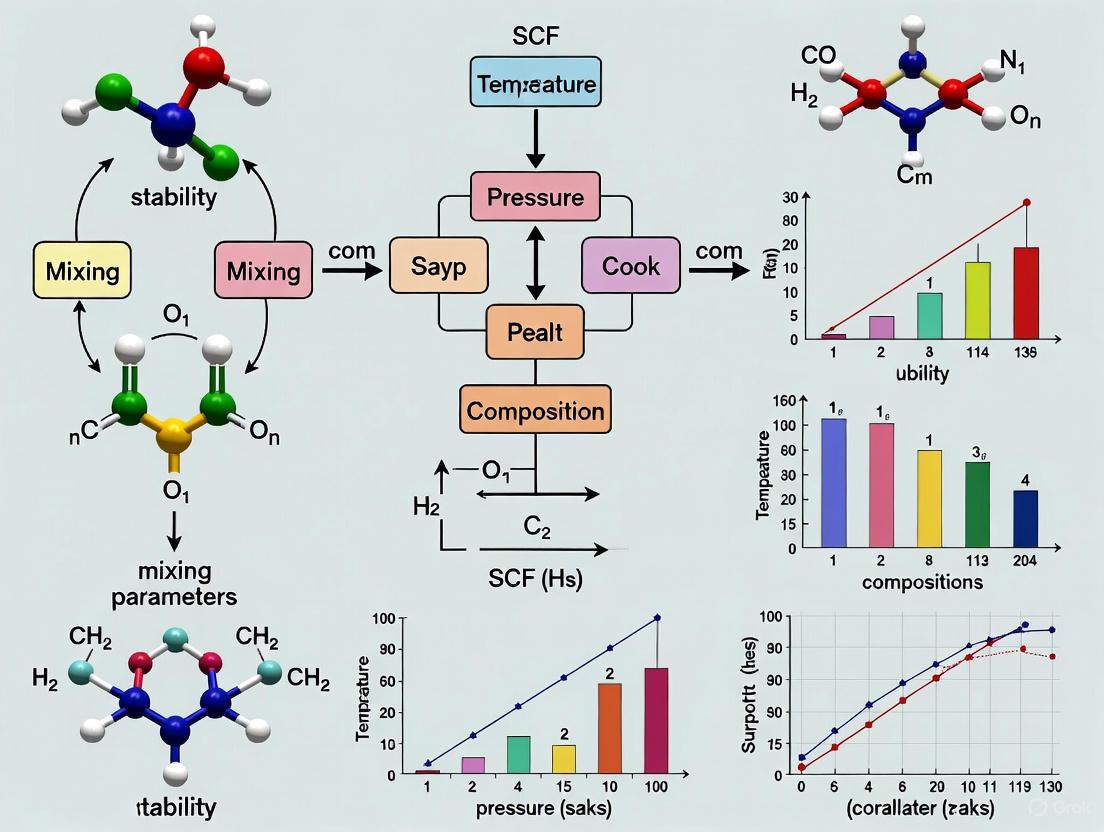

The following diagram illustrates the experimental workflow for SCF quantification using the KSC counting method:

Factors Influencing SCF and Stability

Donor-Dependent Variations in SCF

Research has consistently demonstrated that SCF values exhibit substantial inter-donor variation, highlighting the significant impact of biological individuality on stem cell quality. A comprehensive analysis of oral-derived human alveolar bone MSC (aBMSC) preparations from eight patients revealed SCF values ranging from 7% to 77%, with statistical significance (ANOVA p < 0.0001) [1] [4]. This remarkable variation underscores that donor-specific biological factors profoundly influence the initial stem cell composition of therapeutic preparations.

The stability of SCF during serial culture also shows considerable inter-donor variation, with some patient-derived cell preparations maintaining stable SCF levels while others demonstrate significant decay [1] [4]. This stability profile directly impacts the potential for clinical-scale expansion, as only preparations with sufficient SCF stability support long-term net expansion of stem cells needed for therapeutic applications [1]. These findings have crucial implications for biomanufacturing processes and quality control in clinical translation.
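One way to reason about SCF stability is to treat the decline as first-order decay characterized by a half-life. The sketch below does this under stated assumptions: the exponential form, the choice of culture unit (passages, days, or population doublings), and the function names are all illustrative and are not taken from the RABBIT Count software or from [1] [4].

```python
import math


def scf_after(scf0, elapsed, half_life):
    """SCF remaining after `elapsed` culture units, assuming exponential decay."""
    if half_life <= 0:
        raise ValueError("half_life must be positive")
    return scf0 * 0.5 ** (elapsed / half_life)


def half_life_from_two_points(scf_start, scf_end, elapsed):
    """Half-life implied by two SCF measurements taken `elapsed` units apart."""
    if not 0 < scf_end < scf_start:
        raise ValueError("expects a declining, positive SCF")
    return elapsed * math.log(2) / math.log(scf_start / scf_end)


if __name__ == "__main__":
    # Hypothetical preparation: SCF falls from 40% to 10% over 6 culture units.
    hl = half_life_from_two_points(0.40, 0.10, 6)
    print(f"implied half-life: {hl:.1f} units")
    print(f"SCF after 9 units: {scf_after(0.40, 9, hl):.3f}")
```

Under this simplified model, a preparation with a long half-life relative to the planned expansion period retains most of its stem cell fraction, while a short half-life means the fraction is largely depleted before clinical scale is reached.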

Passage-Induced Changes and Culture Parameters

Serial passaging exerts profound effects on SCF and overall stem cell quality, with specific culture parameters significantly influencing stability outcomes. Studies investigating MSCs from multiple donors at passages 3, 5, and 7 demonstrated passage-dependent alterations in differentiation capacity, clonogenicity, and cell size [5]. Notably, these changes exhibited donor-specific patterns, with some MSC preparations maintaining adipogenic precursor frequency through passages (~1 in 76 cells), while others showed dramatic decreases (1 in 2035 cells by passage 7) [5].

The culture environment parameters, including seeding density, media composition, and passage schedule, collectively function as critical "mixing parameters" that influence SCF stability. Research indicates that the transfer schedule during serial culture (e.g., 1/20 of total recovered cells every 72 hours) directly impacts the maintenance of stem cell fractions [1]. Additionally, the progressive increase in cell diameter observed with serial passaging correlates strongly with decreased clonogenicity, suggesting a relationship between cellular enlargement and functional attenuation [5].

Table 1: Factors Influencing SCF Stability and Therapeutic Potential

| Factor Category | Specific Parameters | Impact on SCF | Experimental Evidence |
|---|---|---|---|
| Donor Characteristics | Age, Health Status, Genetic Background | Significant inter-donor variation (7-77% SCF) | ANOVA p < 0.0001 for donor-dependent SCF variation [1] |
| Culture Conditions | Seeding Density, Media Composition, Passage Schedule | Influences SCF half-life and stability during expansion | Serial culture with standardized passage protocols [1] |
| Passage Number | Early vs. Late Passage | Decreased differentiation potential and clonogenicity at higher passages | Adipogenic precursor frequency decrease from 1/76 to 1/2035 cells [5] |
| Cell Size | Diameter Measurements | Inverse correlation between cell size and clonogenicity | Increased cell diameter with passage correlates with reduced CFU [5] |

Feedback Regulation Mechanisms

Biological feedback loops represent intrinsic "mixing parameters" that significantly influence SCF dynamics in both normal and pathological contexts. Mathematical modeling of feedback regulation in bladder cancer xenografts has revealed that specific types of feedback loops can promote cancer stem cell (CSC) enrichment and consequent therapy resistance [6]. These models demonstrate that negative feedback on CSC division rate or positive feedback on differentiated cell death rate can drive CSC enrichment, with the extent of enrichment determined by CSC death rate, self-renewal probability, and feedback strength [6].

In healthy tissue systems, feedback mechanisms help maintain homeostatic balance between stem cell self-renewal and differentiation. The breakdown or alteration of these regulatory loops in therapeutic expansion systems or disease states can significantly impact SCF stability. Research suggests that feedback mediators secreted by either stem cells or differentiated cells can establish communication networks that influence population dynamics [6]. Understanding these native regulatory mechanisms provides valuable insights for developing culture systems that better maintain therapeutic SCF during biomanufacturing.

Impact of SCF on Therapeutic Outcomes

SCF as a Determinant of Treatment Efficacy

The Stem Cell Fraction directly influences therapeutic potency and treatment outcomes across various clinical applications. As stem cells function as "living drugs" with the capacity to sense environmental cues, home to injury sites, and integrate into tissues, the proportion of functionally competent stem cells determines the magnitude of therapeutic effect [3]. The mechanisms through which stem cells exert their benefits—including differentiation into specific cell types, paracrine signaling, immunomodulation, and anti-apoptotic effects—all depend on the presence of biologically active stem cells [3].

In clinical contexts, inadequate SCF in therapeutic preparations correlates with reduced efficacy and unpredictable outcomes. For hematopoietic stem cell transplantation (HSCT)—the prototypical stem cell therapy success—effectiveness relies directly on the ability of donor-derived stem cells to engraft, self-renew, and reconstitute the immune and hematopoietic systems [3]. Similarly, the emerging success of MSC therapies for conditions like steroid-refractory acute graft-versus-host disease (SR-aGVHD) depends on the administration of sufficient functional stem cells to modulate immune responses and mitigate inflammation [7].

SCF in Cancer Therapy Resistance

In oncology contexts, Cancer Stem Cell Fraction serves as a critical determinant of treatment response and disease progression. CSCs possess intrinsic protective properties, including higher expression of drug-efflux pumps, enhanced DNA-repair capacity, and better protection against reactive oxygen species, making them less responsive to conventional treatments [6]. Consequently, tumors with high CSC fractions demonstrate reduced sensitivity to chemotherapy, even in the absence of resistance-inducing mutations [6].

Research in patient-derived bladder cancer mouse xenografts has demonstrated that CSC enrichment occurs during successive chemotherapy cycles, directly correlating with diminished response to subsequent treatment cycles [6]. This phenomenon represents a form of non-genetic drug resistance where the proportional increase in treatment-resistant CSCs drives therapeutic failure. Mathematical models incorporating feedback regulation mechanisms have shown that both negative feedback on CSC division rate and positive feedback on differentiated cell death rate can promote CSC enrichment, highlighting how biological "mixing parameters" influence therapeutic outcomes through SCF modulation [6].

Table 2: SCF Impact Across Therapeutic Applications

| Application Area | SCF Role | Clinical Consequences | Evidence |
|---|---|---|---|
| Regenerative Medicine | Determines engraftment potential and tissue regeneration capacity | Higher SCF correlates with improved structural and functional outcomes | MSC preparations with varying SCF show different bone regeneration capacity [1] |
| Cancer Therapy | CSC fraction influences chemotherapy sensitivity | Enrichment of CSCs during treatment correlates with therapy resistance | Increased CSC fraction in bladder cancer xenografts reduces chemotherapy response [6] |
| Immunomodulation | Proportion of functional MSCs determines immunomodulatory potency | Sufficient SCF required for effective GVHD treatment | MSC therapy (Ryoncil) approved for pediatric steroid-refractory acute GVHD [7] |
| Biomanufacturing | SCF stability affects expansion potential and batch consistency | Unstable SCF leads to variable product quality and potency | Inter-donor variation in SCF stability impacts clinical-scale expansion [1] [4] |

Analytical Framework for SCF Stability

Mathematical Modeling of SCF Dynamics

Mathematical approaches provide powerful tools for understanding SCF dynamics and predicting behavior under various conditions. Fractal-fractional order modeling has been employed to study stem cell-chemotherapy combinations for cancer, offering insights into how different therapeutic parameters influence cellular dynamics [8]. These models capture the inherent complexities and memory effects in biological systems, potentially leading to more accurate predictions of treatment outcomes based on SCF parameters [8].

Ordinary differential equation models have been particularly valuable for analyzing how feedback regulatory loops influence SCF in hierarchically structured cell populations [6]. These models enable researchers to define general characteristics of feedback loops that can promote stem cell enrichment, guiding experimental screening for specific feedback mediators [6]. The analytical framework derived from these models reveals that negative feedback on stem cell division rate or positive feedback on differentiated cell death rate can lead to CSC enrichment, with the extent determined by CSC death rate, self-renewal probability, and feedback strength [6].
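A generic two-compartment model can illustrate how negative feedback on the stem cell division rate shifts the stem cell fraction. The sketch below is an assumption-laden toy, not the published model of [6]: stem cells S divide at rate v, each division yields two stem cells with probability p or two differentiated cells otherwise, and differentiated cells D suppress division through v = v0 / (1 + g·D). All parameter values are hypothetical.

```python
def stem_fraction(p=0.6, v0=1.0, d_s=0.1, d_d=0.2, g=0.01,
                  t_end=1000.0, dt=0.01):
    """Integrate the toy model with forward Euler and return S / (S + D).

    p:   self-renewal probability per division
    v0:  maximal stem cell division rate
    d_s: stem cell death rate
    d_d: differentiated cell death rate
    g:   feedback strength (g = 0 disables feedback)
    """
    s, d = 1.0, 0.0
    for _ in range(int(t_end / dt)):
        v = v0 / (1.0 + g * d)                     # feedback on division rate
        ds = ((2 * p - 1) * v - d_s) * s           # net stem cell change
        dd = 2 * (1 - p) * v * s - d_d * d         # production minus death
        s += ds * dt
        d += dd * dt
    return s / (s + d)


if __name__ == "__main__":
    print("with feedback:   ", round(stem_fraction(g=0.01), 4))
    print("without feedback:", round(stem_fraction(g=0.0), 4))
```

In this toy model the feedback case settles at a stem fraction of 1/3, while the unregulated population grows exponentially at a fraction of 3/11. The enrichment appears here because the stem cell death rate is lower than the differentiated cell death rate; reversing that ordering reverses the effect, which echoes the dependence on death rate, self-renewal probability, and feedback strength described above.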

Quality Control and Standardization Approaches

The integration of SCF quantification into quality control protocols represents a critical advancement for standardizing stem cell therapies. Kinetic Stem Cell counting methodologies offer a standardized approach to quantify the functional stem cell component, moving beyond mere phenotypic characterization to functional assessment [1] [4]. Implementing such quantitative measures during manufacturing enables batch consistency and ensures that therapeutic products contain sufficient stem cell fractions for clinical efficacy.

For regulatory compliance and clinical translation, establishing reference standards for SCF across different stem cell types and applications becomes essential. The variability in functional properties of stem cells based on tissue source, donor age, health status, and production protocols currently compromises potency, consistency, and clinical reproducibility [3]. Comprehensive quantitative assessment using SCF quantification, coupled with traditional quality measures, provides a more robust framework for ensuring product quality and predicting therapeutic performance.

[Diagram not reproduced: key signaling pathways and feedback mechanisms that regulate SCF dynamics.]

Research Reagent Solutions for SCF Analysis

Table 3: Essential Research Reagents for SCF Quantification Studies

| Reagent/Category | Specific Examples | Function in SCF Research | Application Notes |
|---|---|---|---|
| Cell Culture Media | α-MEM with FBS (16.5%), MEMα with 15% FBS, Adipogenic Differentiation Media (Miltenyi Biotec) | Supports expansion and differentiation of MSC populations | Serum lot consistency critical for reproducible results [5] |
| Characterization Antibodies | CD73 (BV421), CD90 (FITC), CD105 (PE), CD29-APC, Isotype controls | Immunophenotyping for MSC marker expression | Confirms mesenchymal lineage but doesn't quantify SCF [1] [5] |
| Cell Staining Reagents | Trypan Blue, Crystal Violet, Oil Red O, Formalin | Viability assessment, colony visualization, lipid droplet staining | Oil Red O extraction enables spectrophotometric quantification [5] |
| Enzymatic Dissociation | 0.25% Trypsin/EDTA | Cell harvesting and passage | Standardized digestion protocols ensure consistent cell yields [5] |
| Specialized Software | TORTOISE Test, RABBIT Count | Computational SCF quantification and stability analysis | Determines initial SCF and SCF half-life from culture kinetics [1] [4] |
| Cryopreservation Media | 5% DMSO, 30% FBS, Pen/Strep | Long-term storage of cell stocks | Maintains viability and functionality across passages [5] |

The precise definition and quantification of Stem Cell Fraction represents a transformative approach to standardizing and improving stem cell-based therapies. As research continues to elucidate the complex relationships between SCF, culture parameters, and therapeutic outcomes, the integration of SCF quantification into routine biomanufacturing and quality control protocols will become increasingly essential. The development of novel computational methodologies like KSC counting provides the analytical framework needed to advance from qualitative descriptions to quantitative assessments of stem cell products.

Future directions in SCF research should focus on establishing correlative relationships between SCF values and specific clinical outcomes across different therapeutic applications. Additionally, investigating how various "mixing parameters" (including biochemical, biophysical, and culture-related factors) influence SCF stability will enable the design of optimized biomanufacturing processes. The integration of mathematical modeling with experimental validation offers promising pathways for predicting SCF behavior under different conditions and intervention strategies.

As the field progresses toward more personalized stem cell therapies, the ability to accurately quantify and maintain therapeutic SCF will be paramount for ensuring consistent, safe, and effective treatments. By embracing these quantitative approaches, researchers and drug development professionals can overcome current limitations in stem cell therapy standardization and fully realize the potential of regenerative medicine.

The Challenge of Batch-to-Batch Variation in Cell Preparations

Batch-to-batch variation in cell preparations is a critical challenge in biomedical research and therapeutic development, and it is especially relevant to studies of how mixing parameters affect SCF stability. This variation introduces unwanted experimental noise that can compromise data reproducibility, obscure genuine biological signals, and potentially lead to misleading scientific conclusions. In the pharmaceutical industry, such variability directly impacts regulatory approval processes and therapeutic outcomes for cell-based therapies by affecting both product quality and consistency [9]. The complex interplay between biological systems and manufacturing processes creates multiple potential sources of variation, necessitating comprehensive strategies for identification, measurement, and control.

The fundamental challenge lies in distinguishing biologically significant changes from technical artifacts introduced during cell preparation. This is particularly crucial when studying subtle system responses, such as those investigated in SCF stability research, where mixing parameters during cell culture and preparation can significantly influence experimental outcomes. As single-cell technologies have advanced, researchers have developed increasingly sophisticated methods to quantify these effects. One example is MELD (Manifold Enhancement of Latent Dimensions), an algorithm designed to quantify the effect of experimental perturbations at the single-cell level by modeling the cellular transcriptomic state space as a smooth, low-dimensional manifold [10]. This technical guide examines the sources, impacts, and mitigation strategies for batch-to-batch variation, with particular emphasis on implications for SCF stability research and drug development applications.

Batch-to-batch variation in cell preparations stems from multiple interconnected factors spanning biological, procedural, and measurement domains:

Cellular Variation Factors: The health and state of cells used as biofactories constitute a major source of variation. Cell passage number significantly influences consistency, with higher passage numbers (typically above 20) leading to alterations in morphology, slower growth, reduced protein expression, and poorer transfection efficiency. Furthermore, older cells may perform post-translational modifications of proteins differently, directly impacting the functionality of the resulting cell preparations [9]. The cell culture media composition also introduces variability, particularly when serum or reagents are sourced from different manufacturers or lots, potentially compromising cellular health and subsequent experimental outcomes.

Process-Induced Variations: The expansion conditions during bioreactor culture introduce multiple potential variation sources. Mixing parameters within bioreactors, including impeller-driven fluid dynamics, affect aeration, heat transfer, and mass transfer, creating mechanical stresses that influence cellular health [9]. Post-expansion processing steps contribute additional variability, with cell lysis efficiency, endonuclease treatment duration, and clarification filter selection all impacting the final cell preparation quality and consistency. Chromatography-based purification methods, while effective, present challenges in separating full and empty viral capsids in vector production due to minimal charge differences [9].

Measurement and Analytical Variations: Historical methods like plaque assays for viral titer determination introduce human error through manual plaque counting, while more advanced techniques such as electron microscopy and mass spectrometry, though sensitive, suffer from being costly, labor-intensive, and low-throughput [9]. The absence of orthogonal analytical methods for cross-verification further compounds measurement uncertainties, particularly for critical quality attributes like the ratio of full to empty capsids in viral vector preparations.

Impact on Research and Therapeutics

The consequences of uncontrolled batch-to-batch variation extend across the research and development continuum:

Research Reproducibility: Batch effects introduce technical noise that can dilute genuine biological signals, reducing statistical power and potentially leading to false conclusions. In severe cases where batch effects correlate with experimental outcomes, they can drive irreproducible findings that undermine research validity [11]. Single-cell RNA sequencing studies are particularly vulnerable, as batch effects can be confounded with biological signals, especially in longitudinal studies where technical variables may affect outcomes in the same manner as time-varying exposures [11].

Therapeutic Development Implications: For cell-based therapies, batch variation directly impacts product safety, efficacy, and regulatory compliance. Regulatory agencies require demonstration of process consistency and product quality, with single-cell clonality often necessary to limit process variability [9]. Variations in vector potency or quality attributes can significantly affect clinical outcomes, particularly when using low-potency preparations that may compromise therapeutic effectiveness [9].

Table 4: Quantitative Impact of Batch Variation Control Measures

| Control Measure | Key Parameter | Impact/Improvement | Reference Application |
|---|---|---|---|
| Passage Number Control | Keep between 5-20 passages | Maintains morphology, growth rates, and transfection efficiency | Viral vector manufacturing [9] |
| Organic Fertilizer Combination | 20-40% substitution of mineral fertilizers | 20-30% increase in microbial biomass; 25-40% yield increase in crops | Soil health optimization [12] |
| Integrated Fertilization | Organic-mineral combination | 110.6% increase in soil organic carbon; 59.2% increase in nitrogen | Field trials in rice systems [12] |
| Producer Cell Line Establishment | Integrated host cell genes | Addresses slow proliferation and low transfection efficiency | Viral vector manufacturing [9] |

Measurement and Analytical Approaches

Quantitative Assessment Methods

Accurately measuring batch-to-batch variation requires orthogonal analytical approaches targeting different quality attributes:

Viral Titer Determination: While traditional plaque assays provide a basic assessment, they are susceptible to operator bias in plaque counting. Advanced approaches implement automated image acquisition and analysis systems to improve objectivity. Alternatively, flow cytometry-based methods utilizing primary anti-viral antibodies and fluorescently labeled secondary antibodies offer more quantitative assessment, as does rt-qPCR (real-time quantitative PCR) for genetic quantification [9].

Vector Composition Analysis: Density gradient centrifugation historically served as the standard for analyzing viral vector composition by physically separating full particles from partial and empty capsids, though it is time-consuming. Electron microscopy and mass spectrometry provide highly sensitive alternatives but face limitations in cost and throughput. Emerging techniques like UV absorbance and anion exchange chromatography offer promising alternatives for determining the ratio of full to empty capsids [9].

Potency Assessment: Beyond titer and composition, potency measurements critical for therapeutic applications include determining genome copy number in host cells using methods like dPCR (digital PCR), which provides absolute quantification of viral vector genomes [9].
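Absolute quantification by dPCR rests on a Poisson correction: because a positive partition may hold more than one target copy, the mean copies per partition is estimated as λ = -ln(1 - p), where p is the fraction of positive partitions. The sketch below applies that standard correction; the function names and the partition volume in the usage example are hypothetical and would need to match the instrument actually used.

```python
import math


def copies_per_partition(positive, total):
    """Mean target copies per partition from the positive-partition count."""
    if total <= 0 or not 0 <= positive < total:
        raise ValueError("need 0 <= positive < total (saturated runs are unusable)")
    return -math.log(1.0 - positive / total)


def copies_per_microliter(positive, total, partition_volume_ul):
    """Target concentration in the reaction, in copies per microliter."""
    return copies_per_partition(positive, total) / partition_volume_ul


if __name__ == "__main__":
    # Hypothetical run: 20,000 partitions of 0.00085 uL, 5,000 of them positive.
    lam = copies_per_partition(5000, 20000)
    conc = copies_per_microliter(5000, 20000, 0.00085)
    print(f"{lam:.3f} copies/partition, {conc:.0f} copies/uL")
```

The correction matters most at high occupancy: when half of the partitions are positive, the estimated mean is about 0.69 copies per partition rather than 0.5.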

Statistical and Computational Methods

Statistical approaches are essential for distinguishing batch effects from biological signals:

Stability Testing Protocols: In pharmaceutical stability testing, Analysis of Covariance (ANCOVA) tests statistical differences between slopes and intercepts of regression lines from multiple batches, using time as a covariate. A significance level of 0.25 serves as the criterion for pooling batch data, with models including Common Intercept Common Slope (CICS), Separate Intercept Separate Slope (SISS), and Separate Intercept Common Slope (SICS) guiding shelf-life estimation [13].
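The poolability comparison can be sketched by fitting the three nested models and forming F statistics from their residual sums of squares. The example below is a minimal pure-Python illustration with hypothetical function names; it uses the SISS mean squared error as the denominator for both tests, which is one common convention and an assumption here. It returns F statistics only, so the comparison against the 0.25 significance level still requires the F distribution from a statistics package.

```python
def _fit_line(xs, ys):
    """Ordinary least squares for one line; returns (rss, sxx, sxy)."""
    n = len(xs)
    xbar, ybar = sum(xs) / n, sum(ys) / n
    sxx = sum((x - xbar) ** 2 for x in xs)
    sxy = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    rss = sum(((y - ybar) - slope * (x - xbar)) ** 2 for x, y in zip(xs, ys))
    return rss, sxx, sxy


def poolability_f_statistics(batches):
    """batches: list of (times, values) pairs, one per batch."""
    k = len(batches)
    n_total = sum(len(t) for t, _ in batches)
    fits = [_fit_line(t, v) for t, v in batches]

    # SISS: separate intercept and slope for every batch.
    rss_siss = sum(f[0] for f in fits)

    # SICS: one common slope, separate intercepts.
    slope = sum(f[2] for f in fits) / sum(f[1] for f in fits)
    rss_sics = 0.0
    for t, v in batches:
        tbar, vbar = sum(t) / len(t), sum(v) / len(v)
        rss_sics += sum(((y - vbar) - slope * (x - tbar)) ** 2
                        for x, y in zip(t, v))

    # CICS: a single line through the pooled data.
    all_t = [x for t, _ in batches for x in t]
    all_v = [y for _, v in batches for y in v]
    rss_cics = _fit_line(all_t, all_v)[0]

    mse_full = rss_siss / (n_total - 2 * k)
    return {
        "rss": {"CICS": rss_cics, "SICS": rss_sics, "SISS": rss_siss},
        "f_slopes": (rss_sics - rss_siss) / (k - 1) / mse_full,
        "f_intercepts": (rss_cics - rss_sics) / (k - 1) / mse_full,
        "df": (k - 1, n_total - 2 * k),
    }
```

In a typical workflow the slope test is examined first; if its p-value falls below 0.25 the batches are not pooled and the SISS model is used, otherwise the intercept test decides between SICS and CICS.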

Single-Cell Analysis Algorithms: For single-cell data, the MELD algorithm quantifies perturbation effects across the transcriptomic space by estimating sample-associated relative likelihoods. This approach models the cellular transcriptomic state space as a manifold and uses graph signal processing to calculate a kernel density estimate for each sample over the graph, identifying cell populations specifically affected by a perturbation [10]. Complementary vertex frequency clustering (VFC) then extracts populations of cells similarly affected between conditions at the granularity matching the perturbation response [10].

Table 5: Analytical Methods for Assessing Batch Variation

| Method Category | Specific Techniques | Key Applications | Advantages/Limitations |
|---|---|---|---|
| Titer Measurement | Plaque assay, rt-qPCR, Flow cytometry | Viral vector quantification | Plaque assay: inexpensive but subjective; rt-qPCR: objective and quantitative [9] |
| Composition Analysis | Density gradient centrifugation, Electron microscopy, Mass spectrometry, UV absorbance | Full/empty capsid ratio | EM/MS: sensitive but costly/low throughput; UV: less costly alternative [9] |
| Stability Modeling | ANCOVA, CICS/SISS/SICS models | Shelf-life estimation, Batch pooling | Statistical rigor for regulatory compliance; requires multiple batches [13] |
| scRNA-seq Analysis | MELD algorithm, Vertex Frequency Clustering | Perturbation quantification at single-cell level | Identifies diffuse perturbation responses; avoids discrete clustering artifacts [10] |

Experimental Protocols for Variation Control

Cell Culture and Preparation Protocol

This protocol outlines standardized procedures for minimizing batch-to-batch variation in cell preparations for research applications, particularly relevant to SCF stability studies:

Cell Bank Management: Maintain detailed records of cell passage numbers, strictly limiting usage to passages 5-20 to prevent phenotypic drift. Implement comprehensive cell banking systems with standardized freezing media and controlled-rate freezing protocols to ensure consistency across experimental batches. Regularly authenticate cell lines through STR profiling and mycoplasma testing to maintain lineage integrity [9].

Culture Conditions Standardization: Source cell culture media, serum, and reagents from qualified suppliers with strict lot-to-lot consistency testing. Pre-test critical components like serum for compatibility and performance before full-scale use. Maintain detailed records of all media components and preparation dates. Implement standardized thawing procedures with defined seeding densities and culture vessel formats to minimize procedural variability [9].

Bioreactor Expansion Control: For scaled-up production, carefully optimize and document bioreactor parameters including mixing speed, aeration rates, temperature, and pH control. Monitor cell density and viability throughout the expansion process, establishing predetermined criteria for harvesting. Implement standardized cell dissociation methods with defined enzyme concentrations, incubation times, and neutralization procedures [9].

Transfection and Vector Production Protocol

Specific procedures for viral vector production relevant to gene therapy applications and cell engineering:

Transfection Standardization: Employ consistent transfection methodologies (e.g., PEI, calcium phosphate, or electroporation) with rigorously optimized parameters including DNA:reagent ratios, cell density at transfection, and incubation conditions. For producer cell lines, validate single-cell clonality and maintain comprehensive documentation for regulatory compliance [9].

Harvest and Clarification: Implement standardized cell lysis protocols with controlled freeze-thaw cycles or chemical lysis methods applied consistently across batches. Establish predetermined endonuclease treatment conditions (concentration, duration, temperature) to reduce contaminating DNA, with potential repetition if necessary. Optimize clarification filters to maximize viral vector recovery while maintaining consistency between batches [9].

Purification and Analysis: Employ chromatography-based purification methods with carefully controlled binding and elution conditions. Develop standardized analytical workflows incorporating orthogonal methods (e.g., combination of UV absorbance and anion exchange chromatography) for assessing critical quality attributes. Establish comprehensive documentation practices tracking all process parameters and quality control measurements for each batch [9].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Batch Variation Control

| Reagent/Material | Function/Purpose | Critical Quality Controls |

|---|---|---|

| Cell Culture Media | Provides nutrients for cell growth and maintenance | Component sourcing consistency; Performance testing; Endotoxin levels [9] |

| Serum/Lot | Supplies growth factors and adhesion proteins | Lot-to-lot testing; Mycoplasma screening; Virus inactivation [9] |

| Transfection Reagents | Facilitates nucleic acid delivery into cells | Purity; Activity testing; Storage conditions; Preparation consistency [9] |

| Endonucleases | Degrades contaminating DNA in vector preps | Activity assays; Purity; Concentration verification; Host cell DNA removal [9] |

| Chromatography Resins | Purifies viral vectors from contaminants | Binding capacity; Ligand density; Lot consistency; Cleaning validation [9] |

| Reference Standards | Calibrates analytical measurements | Purity; Potency; Stability; Traceability to international standards [9] |

Visualization of Batch Variation Analysis Workflow

The following diagram illustrates the integrated workflow for identifying, measuring, and mitigating batch-to-batch variation in cell preparations:

Figure: Batch Variation Analysis Workflow

This workflow demonstrates the systematic approach required to address batch-to-batch variation, connecting variation sources to specific assessment methods and corresponding mitigation strategies, ultimately leading to improved experimental reproducibility.

Mitigation Strategies and Best Practices

Process Control and Standardization

Implementing robust process controls constitutes the foundation for minimizing batch-to-batch variation:

Cellular Material Management: Establish cell banking systems with comprehensive documentation of passage numbers, doubling times, and morphological characteristics. Strictly limit working cell bank usage to passages 5-20 to maintain phenotypic stability and consistent performance [9]. Implement routine authentication testing to confirm cell line identity and screen for contamination.

Process Parameter Optimization: Identify and control critical process parameters (CPPs) that significantly impact product quality attributes. For bioreactor cultures, this includes mixing parameters, dissolved oxygen, pH, and feeding strategies. Use structured design of experiments (DoE) approaches to characterize parameter interactions and establish proven acceptable ranges for each critical parameter [9].

Raw Material Control: Implement rigorous raw material qualification programs with particular emphasis on biologically-derived components like serum, which can exhibit significant lot-to-lot variability. Establish pre-defined acceptance criteria for critical reagents and conduct thorough testing before implementation in manufacturing processes. Maintain adequate inventory of qualified materials to support extended campaign lengths [9].

Analytical and Computational Approaches

Advanced analytical and computational methods provide essential tools for variation control:

Orthogonal Analytics: Implement multiple analytical methods for critical quality attributes to compensate for limitations of individual techniques. For viral vector characterization, this might include combining density gradient centrifugation with UV absorbance and anion exchange chromatography to accurately determine the ratio of full to empty capsids [9]. Establish reference standards for method qualification and system suitability testing.

Stability Modeling: Apply statistical stability testing following ICH Q1E guidelines, using Analysis of Covariance (ANCOVA) to test differences between batch regression lines with time as a covariate. Employ a significance level of 0.25 for pooling decisions, selecting appropriate models (CICS, SISS, or SICS) based on statistical testing outcomes [13].

Perturbation Quantification: For single-cell studies, implement algorithms like MELD to quantify experimental perturbation effects across the transcriptomic space, using graph signal processing to estimate sample-associated relative likelihoods. This approach helps distinguish genuine biological responses from batch effects by modeling cellular states as a manifold and identifying cell populations specifically affected by experimental conditions [10].

Addressing batch-to-batch variation in cell preparations requires a systematic, multifaceted approach spanning biological understanding, process control, and advanced analytics. Within the context of SCF stability research, where mixing parameters may significantly influence system behavior, controlling these variations becomes particularly critical for generating reliable, interpretable data. The strategies outlined in this technical guide—from standardized protocols and orthogonal analytics to computational perturbation quantification—provide a framework for minimizing variability and enhancing research reproducibility. As cell-based therapies and sophisticated research models continue to advance, the implementation of these robust approaches to batch variation control will remain essential for both basic research translation and therapeutic development success.

The Self-Consistent Field (SCF) method is the fundamental algorithm for determining electronic structure configurations in computational chemistry, forming the basis for both Hartree-Fock and Density Functional Theory (DFT) calculations [14]. In DFT, this iterative procedure solves the Kohn-Sham equations self-consistently: the Hamiltonian depends on the electron density, which in turn is obtained from the Hamiltonian [15]. The cycle continues until convergence criteria are met, typically monitored through changes in the density matrix (dDmax) or Hamiltonian matrix (dHmax) [15].

SCF stability refers to whether the obtained solution represents a true local minimum or a saddle point in the electronic energy landscape [16] [17]. The stability analysis evaluates the electronic Hessian with respect to orbital rotations, with negative eigenvalues indicating an unstable saddle point solution [17]. Such instabilities frequently occur in systems with stretched bonds, where symmetric initial guesses may prevent finding the correct unrestricted solution, or in systems with small HOMO-LUMO gaps, localized open-shell configurations, and transition state structures [17] [14].

Mixing parameters serve as crucial control levers in managing SCF stability by determining how information from previous iterations is incorporated to generate new guesses for the density or Hamiltonian matrices [15] [18]. Proper adjustment of these parameters can transform divergent or oscillating SCF behavior into stable, rapid convergence, making them essential tools for computational chemists studying challenging molecular systems.

Theoretical Foundation of Mixing Methods

The SCF Cycle and Convergence Challenges

The SCF cycle follows a well-defined iterative process [15]:

- Initialization: Start with an initial guess for the electron density or density matrix

- Hamiltonian Construction: Compute the Hamiltonian based on the current density

- Equation Solving: Solve the Kohn-Sham equations to obtain new wavefunctions

- Density Update: Construct a new electron density from the wavefunctions

- Convergence Check: Evaluate whether convergence criteria are met

- Mixing Application: If not converged, apply mixing schemes to generate new input density

The fundamental challenge arises from the fact that the output density \(n_{\text{out}}\) typically differs from the input density \(n_{\text{in}}\) in early iterations [18]. The density residual, \(n_{\text{out}} - n_{\text{in}}\), must be driven to zero for a self-consistent solution, but naive updates often lead to divergence or oscillatory behavior, particularly in systems with complex electronic structures [18].

Mathematical Formulation of Density Mixing

Density mixing addresses this challenge through systematic updates of the form: \[ n_{\text{in}}^{(k+1)} = n_{\text{in}}^{(k)} + \delta n^{(k)} \] where the density change \(\delta n^{(k)}\) is determined by the mixing algorithm [18].

In linear mixing, the simplest approach, the update follows: \[ n_{\text{in}}^{(k+1)} = (1-\alpha)\, n_{\text{in}}^{(k)} + \alpha\, n_{\text{out}}^{(k)} \] where \(\alpha\) is the mixing parameter controlling aggressiveness (typically 0.05-0.25) [18]. While robust, this method converges slowly for complex systems.
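The effect of the mixing parameter can be illustrated on a toy problem. The sketch below is not taken from any electronic structure code: `toy_output_density` is a hypothetical scalar stand-in for a full SCF step, chosen so that an undamped update (α = 1) diverges while small α converges.

```python
def toy_output_density(n_in, n_star=1.0, slope=-1.5):
    """Hypothetical stand-in for one SCF step (build H, solve, rebuild density)."""
    return n_star + slope * (n_in - n_star)


def run_linear_mixing(alpha, n_start=0.0, tol=1e-8, max_iter=200):
    """Iterate n_in <- (1 - alpha) * n_in + alpha * n_out until the residual is small.

    Returns (final_density, iterations_used, converged_flag).
    """
    n_in = n_start
    for iteration in range(1, max_iter + 1):
        n_out = toy_output_density(n_in)
        residual = n_out - n_in
        if abs(residual) < tol:
            return n_in, iteration, True
        n_in = (1.0 - alpha) * n_in + alpha * n_out
    return n_in, max_iter, False


for alpha in (0.1, 0.2, 1.0):
    density, iterations, converged = run_linear_mixing(alpha)
    print(f"alpha={alpha:.1f}  converged={converged}  iterations={iterations}")
```

In this toy model the error is multiplied by 1 - 2.5α at every step, so any α below 0.8 converges and α = 1 does not. Real systems have many such modes with different responses, which is why a single safe α tends to be small and slow.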

More advanced methods like Pulay (DIIS) and Broyden schemes utilize historical information, constructing the new density as an optimized combination of previous iterations [15] [14]. These methods significantly accelerate convergence but require careful parameterization to maintain stability.

The following diagram illustrates the SCF cycle with integrated mixing:

Mixing Methodologies and Algorithms

Linear Mixing

Linear mixing represents the most fundamental approach, where the new input density is a simple weighted average of the previous input and output densities [18]. The mixing parameter \(\alpha\) (referred to as SCF.Mixer.Weight in SIESTA) controls the step size [15]. Small values (0.05-0.15) provide stability but slow convergence, while larger values (0.25-0.4) accelerate convergence but risk divergence [15]. This method is robust but inefficient for difficult systems, particularly those with metallic character or small HOMO-LUMO gaps [15].

Pulay (DIIS) Mixing

Pulay mixing, also known as Direct Inversion in the Iterative Subspace (DIIS), is the default in many quantum chemistry codes like SIESTA [15]. This method builds an optimized combination of past residuals to accelerate convergence [15]. Key parameters include:

- SCF.Mixer.History: Controls how many previous steps are stored (default is 2)

- SCF.Mixer.Weight: Provides damping for stability

- SCF.DIIS.N: Number of expansion vectors (default ~10)

Pulay mixing typically outperforms linear mixing for most systems but requires more memory to store historical data [15].
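To make the idea of combining past residuals concrete, the following sketch implements Pulay-style mixing with a history of two points on a toy two-component model. It is an illustration under stated assumptions, not the SIESTA implementation: `toy_scf_step`, `N_STAR`, and `SLOPES` are hypothetical, and with only two stored points the least-squares coefficient has a closed form, so no linear solver is needed.

```python
N_STAR = [1.0, 2.0]   # self-consistent "density" of the toy model (hypothetical)
SLOPES = [-1.5, 0.6]  # per-component response; -1.5 makes undamped iteration diverge


def toy_scf_step(n_in):
    """Hypothetical stand-in for one SCF step: a linear map with fixed point N_STAR."""
    return [s + a * (x - s) for x, s, a in zip(n_in, N_STAR, SLOPES)]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def run_scf(weight, use_pulay, tol=1e-8, max_iter=500):
    """Return (density, iterations, converged) for linear or two-point Pulay mixing."""
    n_in = [0.0] * len(N_STAR)
    previous = None  # (input, residual) stored from the preceding iteration
    for iteration in range(1, max_iter + 1):
        n_out = toy_scf_step(n_in)
        residual = [o - i for o, i in zip(n_out, n_in)]
        if max(abs(r) for r in residual) < tol:
            return n_in, iteration, True

        gamma = 0.0  # weight on the stored point; 0 reduces to plain linear mixing
        if use_pulay and previous is not None:
            diff = [r - p for r, p in zip(residual, previous[1])]
            denom = dot(diff, diff)
            if denom > 0.0:
                # Minimizes |(1 - gamma) * r_k + gamma * r_(k-1)|^2
                gamma = dot(residual, diff) / denom
        prev_in, prev_res = previous if previous is not None else (n_in, residual)
        best_in = [(1 - gamma) * x + gamma * p for x, p in zip(n_in, prev_in)]
        best_res = [(1 - gamma) * r + gamma * p for r, p in zip(residual, prev_res)]
        previous = (n_in, residual)
        # Damped step from the optimal combination of the two stored points
        n_in = [x + weight * r for x, r in zip(best_in, best_res)]
    return n_in, max_iter, False


for label, flag in (("linear", False), ("pulay(2)", True)):
    _, iterations, converged = run_scf(weight=0.3, use_pulay=flag)
    print(f"{label:9s} converged={converged} iterations={iterations}")
```

The mixing weight still matters here: it sets the damped step taken from the extrapolated point, which is the role the text assigns to SCF.Mixer.Weight. Longer histories require solving a small linear system for the coefficients instead of the closed form used above.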

Broyden Mixing

Broyden's method implements a quasi-Newton scheme that updates approximate Jacobians to improve convergence [15]. It often demonstrates similar performance to Pulay mixing but can be superior for metallic systems and magnetic materials [15] [18]. The method is particularly effective for systems where the dielectric response is challenging to capture with simpler schemes.

Dielectric Preconditioning

For metallic systems with long-range density oscillations, dielectric preconditioning significantly enhances stability [18]. This approach approximates the inverse dielectric matrix using the Thomas-Fermi screening wavevector:

\[ n_{\text{in}}^{(k+1)}(\vec{G}) = n_{\text{in}}^{(k)}(\vec{G}) + \frac{\alpha |\vec{G}|^2}{|\vec{G}|^2 + |G_0|^2} \left( n_{\text{out}}^{(k)}(\vec{G}) - n_{\text{in}}^{(k)}(\vec{G}) \right) \]

where \(\vec{G}\) represents reciprocal lattice vectors and \(G_0\) is the screening parameter [18]. This scheme effectively damps long-wavelength charge sloshing in metals, which is a common source of convergence difficulties.
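The wavevector dependence of the preconditioned step can be checked numerically. The sketch below evaluates the factor α|G|²/(|G|² + G₀²) and applies it component by component; the function names and the numerical values of α, G₀, and the densities are hypothetical and in arbitrary reciprocal-space units.

```python
def kerker_step_size(g_norm, alpha, g0):
    """Effective mixing weight alpha * G^2 / (G^2 + G0^2) for one component.

    Assumes g0 > 0 so the expression is defined at G = 0.
    """
    g_squared = g_norm * g_norm
    return alpha * g_squared / (g_squared + g0 * g0)


def kerker_mix(n_in, n_out, g_norms, alpha, g0):
    """Apply the preconditioned update to each reciprocal-space component."""
    return [
        ni + kerker_step_size(g, alpha, g0) * (no - ni)
        for ni, no, g in zip(n_in, n_out, g_norms)
    ]


for g in (0.0, 0.5, 1.5, 5.0, 50.0):
    print(f"|G|={g:5.1f}  effective weight={kerker_step_size(g, alpha=0.4, g0=1.5):.4f}")
```

Long-wavelength components (small |G|) receive almost no update, while short-wavelength components are mixed with nearly the full weight α, which is how the scheme suppresses charge sloshing.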

Table 1: Comparison of SCF Mixing Methods

| Method | Key Parameters | Strengths | Limitations | Ideal Use Cases |

|---|---|---|---|---|

| Linear Mixing | Mixer.Weight (0.05-0.25) | High stability, simple implementation | Slow convergence | Small molecules, initial attempts |

| Pulay (DIIS) | Mixer.History (2-8), Mixer.Weight (0.1-0.3) | Fast convergence for most systems | Memory intensive | General purpose, molecular systems |

| Broyden | Mixer.History, Mixer.Weight | Excellent for metallic/magnetic systems | Complex implementation | Transition metals, magnetic materials |

| Dielectric Preconditioning | BMIX, AMIN (VASP) | Controls charge sloshing in metals | System-specific parameters | Metallic systems, small-gap semiconductors |

Experimental Protocols for Parameter Optimization

Stability Analysis Procedure

Before optimizing mixing parameters, performing an SCF stability analysis is essential [17]. The following protocol determines whether a converged solution represents a true minimum:

- Converge the SCF calculation using standard parameters

- Activate stability analysis using STABPerform true (ORCA) or an equivalent keyword [17]

- Configure analysis parameters:

- STABNRoots: Number of lowest eigenpairs to seek (typically 3-5)

- STABRTol: Convergence tolerance for residuals (default 0.0001)

- Orbital window settings to focus on relevant orbitals [17]

- Execute analysis and examine eigenvalues:

- All positive: Stable solution

- Negative eigenvalues: Unstable solution [17]

- If unstable, restart SCF with new guess orbitals or modified mixing parameters [17]
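The decision rule at the end of this procedure can be written as a small helper. This is a sketch only: the function name, the numerical tolerance, and the extra "borderline" category for eigenvalues indistinguishable from zero are assumptions added for illustration, and the eigenvalues would in practice be read from the stability analysis output of the chosen program.

```python
def classify_scf_solution(hessian_eigenvalues, zero_tol=1e-6):
    """Classify a converged SCF solution from the lowest orbital-rotation Hessian eigenvalues.

    Returns "stable", "unstable", or "borderline" (assumed category for |eigenvalue| <= zero_tol).
    """
    if not hessian_eigenvalues:
        raise ValueError("at least one eigenvalue is required")
    lowest = min(hessian_eigenvalues)
    if lowest < -zero_tol:
        return "unstable"   # saddle point: restart with new guess orbitals
    if lowest > zero_tol:
        return "stable"     # all eigenvalues positive: local minimum
    return "borderline"     # too close to zero to decide at this tolerance


# Hypothetical eigenvalues (Hartree) for two converged calculations
print(classify_scf_solution([0.12, 0.31, 0.47]))
print(classify_scf_solution([-0.05, 0.20, 0.33]))
```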

Systematic Parameter Screening

For method development, systematic screening of mixing parameters provides optimal convergence characteristics:

- Select a representative test system with electronic structure similar to target applications

- Define parameter ranges based on method requirements:

- Linear mixing: α = 0.05, 0.1, 0.2, 0.3, 0.4, 0.5

- Pulay/Broyden: History = 2, 4, 6, 8 with weights 0.1-0.9

- Execute SCF calculations for each parameter combination

- Record convergence metrics:

- Number of iterations to convergence

- Occurrence of divergence or oscillation

- Final energy difference from reference

- Identify optimal parameters balancing speed and reliability
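The screening steps above can be automated with a short script. The sketch below runs the listed linear-mixing weights against a hypothetical scalar toy model rather than a real SCF code, records the iteration count, whether the run converged, and the deviation from a known reference, and then picks the fastest converged setting. In practice the inner call would launch the electronic structure program and parse its output.

```python
def toy_output_density(n_in, n_star=1.0, slope=-3.5):
    """Hypothetical stand-in for one SCF step with a strongly overshooting response."""
    return n_star + slope * (n_in - n_star)


def run_linear_mixing(alpha, n_start=0.0, tol=1e-8, max_iter=200):
    """Return (final_density, iterations_used, converged_flag) for one mixing weight."""
    n_in = n_start
    for iteration in range(1, max_iter + 1):
        n_out = toy_output_density(n_in)
        if abs(n_out - n_in) < tol:
            return n_in, iteration, True
        n_in = (1.0 - alpha) * n_in + alpha * n_out
    return n_in, max_iter, False


def screen_mixing_weights(alphas, reference=1.0):
    """Run every candidate weight and collect the convergence metrics."""
    results = []
    for alpha in alphas:
        density, iterations, converged = run_linear_mixing(alpha)
        results.append({
            "alpha": alpha,
            "iterations": iterations,
            "converged": converged,
            "error": abs(density - reference),  # stand-in for energy difference from reference
        })
    return results


def pick_best(results):
    """Fastest setting among those that converged, or None if none did."""
    converged = [r for r in results if r["converged"]]
    return min(converged, key=lambda r: r["iterations"]) if converged else None


results = screen_mixing_weights([0.05, 0.1, 0.2, 0.3, 0.4, 0.5])
for row in results:
    print(row["alpha"], row["converged"], row["iterations"])
print("best alpha:", pick_best(results)["alpha"])
```

Filtering on convergence before ranking by iteration count reflects the stated goal of balancing speed and reliability; a stricter screen could also reject settings that converge only after visible oscillation.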

Table 2: Exemplary SCF Convergence Data for Methane System (SIESTA)

| Mixing Method | Mixer Weight | Mixer History | SCF Iterations | Convergence Quality |

|---|---|---|---|---|

| Linear (Density) | 0.1 | 1 | 45 | Stable but slow |

| Linear (Density) | 0.2 | 1 | 38 | Stable |

| Linear (Density) | 0.4 | 1 | 27 | Minor oscillations |

| Linear (Hamiltonian) | 0.1 | 1 | 41 | Stable but slow |

| Pulay (Density) | 0.1 | 4 | 22 | Stable |

| Pulay (Density) | 0.5 | 4 | 15 | Stable, efficient |

| Pulay (Density) | 0.9 | 4 | 11 | Occasional divergence |

| Broyden (Hamiltonian) | 0.3 | 6 | 13 | Fast, reliable |

Protocol for Challenging Systems

For difficult cases (metals, magnetic materials, stretched bonds), a specialized protocol enhances convergence likelihood:

Initial stabilization phase:

- Use linear mixing with small weight (0.05-0.1)

- Enable electron smearing (0.1-0.3 eV) for metallic systems [14]

- Perform 20-30 SCF iterations

Aggressive convergence phase:

- Switch to Pulay or Broyden mixing

- Increase mixing weight to 0.3-0.5

- Use larger history (6-8 for Broyden)

Final refinement:

- Disable smearing if enabled

- Tighten convergence criteria

- Verify stability of final solution [17]

The following workflow illustrates the parameter optimization process:

Case Studies and Experimental Data

Molecular System: Methane Calculation

The methane molecule represents a typical simple system where SCF convergence is generally straightforward but sensitive to parameter choices. Experimental data from SIESTA tutorials demonstrates the effect of different mixing schemes [15]:

With default parameters (Hamiltonian mixing, Pulay method, weight=0.25), methane SCF convergence typically requires 15-20 iterations. However, suboptimal parameter choices significantly impact efficiency:

- Overly aggressive mixing (weight=0.8) causes oscillation and failure to converge within 50 iterations

- Excessively conservative mixing (weight=0.05) achieves convergence but requires 45+ iterations

- Linear mixing consistently requires 35-50% more iterations than Pulay mixing

The optimal balance for methane was found with Pulay mixing, weight=0.5, and history=4, converging in 11-15 iterations consistently [15].

Metallic System: Iron Cluster

A three-atom iron cluster in a non-collinear spin configuration exemplifies challenging metallic and magnetic systems [15]. Initial calculations with linear mixing (weight=0.1) required over 100 iterations for convergence [15].

Parameter optimization revealed:

- Broyden mixing significantly outperformed Pulay, reducing iterations by 40%

- Optimal history of 6-8 steps was crucial for magnetic systems

- Intermediate mixing weights (0.3-0.4) provided the best stability-efficiency balance

- Hamiltonian mixing generally outperformed density mixing for this system

The final optimized parameters reduced SCF iterations to 25-35, representing a 3-4× improvement over initial parameters [15].

Stretched Bond System: Hydrogen Molecule

The stretched H₂ molecule (1.4 Å bond length) demonstrates the restricted-to-unrestricted instability problem [17]. With symmetric initial guesses, the SCF converges to a restricted solution that stability analysis reveals as unstable [17].

Implementation of stability analysis with STABPerform true identifies negative eigenvalues in the electronic Hessian, indicating an unstable saddle point [17]. Restarting the calculation with STABRestartUHFifUnstable true automatically generates a new unrestricted guess that converges to the correct unrestricted solution [17].

This case highlights how mixing parameters alone cannot overcome fundamental instabilities from inappropriate initial guesses, necessitating stability analysis for challenging electronic structures.

Computational Software and Modules

Table 3: Research Reagent Solutions for SCF Stability Research

| Tool/Resource | Function | Application Context |

|---|---|---|

| ORCA SCF Stability Module | Performs formal stability analysis of converged solutions | Identifying restricted/unstable solutions in molecular systems [17] |

| SIESTA Mixing Methods | Implements linear, Pulay, Broyden mixing schemes | Parameter optimization for molecular and periodic systems [15] |

| VASP DIIS Routines | Density mixing with dielectric preconditioning | Metallic systems with charge sloshing challenges [18] |

| ADF SCF Accelerators | MESA, LISTi, EDIIS convergence algorithms | Problematic systems with d-/f-elements and open-shell configurations [14] |

| Custom Scripting | Automated parameter screening and analysis | High-throughput optimization of mixing parameters |

Diagnostic and Analysis Tools

Beyond the core SCF algorithms, several diagnostic tools are essential for effective stability research:

- Convergence Monitors: Tracking dDmax and dHmax across iterations to identify oscillatory behavior [15]

- Stability Analysis Utilities: Calculating the lowest eigenvalues of the electronic Hessian [17]

- Orbital Visualization: Plotting molecular orbitals to verify physical reasonableness of solutions [17]

- Density Analysis: Examining charge density differences to identify problematic regions
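A convergence monitor of the kind listed above can be approximated with a simple heuristic on the sequence of dDmax (or dHmax) values. The sketch below is an assumption-laden illustration: the function name, window length, tolerance, and the rule that frequent up/down reversals indicate oscillation are all choices made here, not behavior of any particular code.

```python
def diagnose_convergence(ddmax_history, window=8, tol=1e-4):
    """Label a non-empty sequence of per-iteration dDmax values.

    Returns one of: "converged", "oscillating", "diverging", "converging",
    or "insufficient data".
    """
    if ddmax_history[-1] < tol:
        return "converged"
    recent = ddmax_history[-window:]
    if len(recent) < 3:
        return "insufficient data"
    steps = [later - earlier for earlier, later in zip(recent, recent[1:])]
    # A reversal is a change from decreasing to increasing or vice versa
    reversals = sum(1 for s, t in zip(steps, steps[1:]) if s * t < 0)
    if reversals >= len(steps) // 2:
        return "oscillating"
    if recent[-1] > recent[0]:
        return "diverging"
    return "converging"


# Hypothetical dDmax traces
print(diagnose_convergence([1.0, 0.5, 0.25, 0.12, 0.06]))
print(diagnose_convergence([0.5, 0.1, 0.5, 0.1, 0.5, 0.1]))
print(diagnose_convergence([0.1, 0.2, 0.4, 0.8]))
```

An "oscillating" label is a natural trigger for lowering the mixing weight, while "diverging" suggests returning to a more conservative scheme or a different initial guess.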

Mixing parameters represent powerful control levers for SCF stability, with method selection and parameter optimization dramatically impacting convergence behavior across diverse chemical systems. The empirical evidence demonstrates that a systematic approach to mixing parameter selection can reduce SCF iterations by factors of 3-4× for challenging systems while transforming divergent calculations into stable, convergent solutions.

Future research directions in mixing parameter optimization include:

- System-specific parameter databases linking chemical features to optimal mixing schemes

- Machine learning approaches for predicting optimal parameters based on molecular descriptors

- Adaptive mixing algorithms that automatically adjust parameters during SCF cycles

- Improved stability analysis integrated directly into SCF iterations rather than as a post-convergence check

Within the broader thesis of SCF stability research, mixing parameters emerge as practical experimental knobs that directly manipulate the convergence pathway, complementing theoretical advances in electronic structure method development. Their proper application remains essential for robust quantum chemical simulations across drug discovery, materials design, and fundamental chemical research.

A major challenge in the development of predictable stem cell therapies (SCTs) is the determination of optimal stem cell dosages. Unlike pharmaceutical agents, which can be accurately dosed, SCT lacks established methodologies for the precise quantification of therapeutic stem cells. This leads to clinical outcomes that are difficult to predict, compare, and reproduce [4] [1]. The inherent heterogeneity of mesenchymal stem cell (MSC) populations, which are mixtures of stem cells, committed progenitor cells, and differentiated cells, further confounds this issue. A critical missing piece has been the inability to identify the specific stem cell fraction (SCF) within these mixed populations, as a specific MSC marker for this purpose is lacking [1]. This paper explores how a novel computational methodology, Kinetic Stem Cell (KSC) counting, has been used to quantify the SCF and its stability in human MSC preparations, providing a significant step toward the clinical translation of cell therapies. The stability of the SCF during serial culture—a process controlled by underlying biophysical "mixing parameters" that dictate cell fate decisions—is crucial for the clinical-scale expansion and biomanufacturing of MSCs [4] [1] [19].

Core Principles of SCF Quantification and Stability

The Heterogeneity Problem in MSC Therapies

The field has historically relied on minimal criteria to define MSCs: adherence to plastic, specific surface marker expression (CD105, CD73, CD90), and tri-lineage differentiation potential. However, these criteria do not address the heterogeneity of MSC preparations. Populations from diverse tissue sources are, in fact, heterogeneous mixtures of stem cells, committed progenitor cells, and terminally differentiated cells [1]. Consequently, phenotypically similar MSC populations can exhibit vastly different functional behaviors in terms of growth kinetics and therapeutic efficacy, largely due to differences in their specific SCF.

Kinetic Stem Cell (KSC) Counting: A Novel Computational Approach

KSC counting is a computational simulation method designed to overcome the lack of specific stem cell markers. It provides a routine, reproducible, and accurate determination of the SCF within heterogeneous tissue cell populations [1]. The method leverages data from serial cell culture to computationally simulate and quantify the proportion of true stem cells based on their unique kinetic behavior, specifically their capacity for self-renewal and population expansion over time.

Defining SCF Stability in Culture

SCF stability refers to the maintenance of the stem cell fraction during the serial passaging of cells in culture. A stable SCF is essential for achieving predictable and scalable net expansion of stem cells for clinical applications. Instability, characterized by a rapid decline in the SCF, can lead to inconsistent therapeutic products. The KSC counting methodology allows for the quantification of this stability by determining the SCF half-life (SCF~HL~), a metric that describes the rate at which the stem cell fraction decays with each passage [1].
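As a worked illustration of what a half-life implies, the sketch below assumes simple exponential decay of the SCF per passage. That functional form is an assumption made here for illustration; the source does not state how the KSC counting software models the decay, and the half-life value used is hypothetical. The initial SCF values are the reported extremes of 7% and 77%.

```python
import math


def scf_after_passages(initial_scf, half_life_passages, passages):
    """SCF after a number of passages, assuming exponential decay (illustrative model)."""
    return initial_scf * 0.5 ** (passages / half_life_passages)


def passages_until_threshold(initial_scf, half_life_passages, threshold_scf):
    """Passages needed for the SCF to fall to a threshold under the same assumption."""
    if threshold_scf >= initial_scf:
        return 0.0
    return half_life_passages * math.log2(initial_scf / threshold_scf)


# Hypothetical half-life of 4 passages applied to the lowest and highest reported SCF
for initial in (0.07, 0.77):
    remaining = scf_after_passages(initial, half_life_passages=4.0, passages=8)
    print(f"initial SCF {initial:.2f} -> after 8 passages {remaining:.4f}")
```

Under this assumption, two preparations with the same half-life but different initial SCF differ by a constant factor at every passage, which is why both quantities matter when selecting starting material.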

Experimental Quantification of SCF Stability

Cell Sourcing and Preparation

In the foundational study, human alveolar bone-derived MSC (aBMSC) preparations were isolated from eight patients undergoing routine oral surgical procedures [1]. Alveolar bone marrow tissue samples were processed and resuspended in complete culture medium (CCM). The isolates were cultured, and non-adherent cells were removed after five days. Adherent cells were harvested, expanded, and cryopreserved to establish the aBMSC strains used for subsequent analysis [1]. The table below summarizes the donor demographics and initial immunophenotypic characterization.

Table 1: Donor Demographics and Surface Marker Expression for aBMSC Preparations

| Patient | Sex | Age | CD73 (%) | CD90 (%) | CD105 (%) |

|---|---|---|---|---|---|

| 1 | F | 90 | 99.66 | 98.39 | 98.96 |

| 2 | M | 56 | 99.81 | 99.86 | 99.38 |

| 3 | F | 78 | 99.92 | 99.80 | 99.29 |

| 4 | F | 62 | 99.77 | 99.80 | 99.10 |

| 5 | M | 61 | 99.62 | 99.84 | 99.80 |

| 6 | F | 49 | 99.92 | 99.94 | 99.91 |

| 7 | F | 25 | 99.54 | 99.65 | 99.73 |

| 8 | M | 43 | 99.94 | 99.95 | 99.86 |

Serial Cell Culture Protocol

The core experimental protocol for KSC counting involves long-term serial passage of cells [1]:

- Initiation of Culture: Cryopreserved aBMSC strains are thawed and expanded for one passage. Triplicate serial cultures are then initiated in 6-well plates, seeding 1 × 10^5^ cells per well in complete culture medium.

- Passaging Schedule: Cells are passaged every 72 hours on a consistent schedule. At each passage, 1/20th of the total recovered live cells are transferred to a new well to continue the culture.

- Cell Viability Counting: At every passage, the numbers of live and dead cells are determined manually using a hemocytometer and trypan blue dye-exclusion.

- Culture Endpoint: Serial culture is continued until no cells can be detected, providing a complete growth curve from exponential expansion to senescence.

KSC Counting Data Analysis

The data collected from serial culture is processed computationally [1]:

- Data Input: Live- and dead-cell counts from triplicate serial cultures are used to calculate cumulative population doubling (CPD) and dead cell fraction data over the entire culture period.

- SCF Determination: The CPD and dead cell fraction data are input into the TORTOISE Test software (version 2.0). This software uses computational simulations to derive the initial SCF of each aBMSC strain.

- SCF Stability Determination: The RABBIT Count software (version 1.0) is then used to determine the SCF half-life (SCF~HL~) of each strain during serial culture, providing a quantitative measure of SCF stability. Reported mean SCF and SCF~HL~ values are typically based on 10 independent computer simulations.
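The data-input step can be sketched with the standard population-doubling formula, PD = log2(cells recovered / cells seeded), accumulated over passages. This is a minimal illustration and not the TORTOISE Test or RABBIT Count software, whose internal calculations are not described here; the function name and the cell counts are hypothetical, while the seeding density and 1/20 split follow the protocol above.

```python
import math


def summarize_serial_culture(passage_counts, initial_seed=1e5, split_fraction=1 / 20):
    """Compute cumulative population doublings (CPD) and dead cell fraction per passage.

    passage_counts: list of (live_cells, dead_cells) recovered at each passage.
    Returns a list of (passage_number, cpd, dead_fraction) tuples.
    """
    seeded = initial_seed
    cpd = 0.0
    rows = []
    for passage_number, (live, dead) in enumerate(passage_counts, start=1):
        if live <= 0:
            break  # culture endpoint: no live cells detected
        cpd += math.log2(live / seeded)
        dead_fraction = dead / (live + dead)
        rows.append((passage_number, cpd, dead_fraction))
        seeded = live * split_fraction  # 1/20 of recovered live cells carried forward
    return rows


# Hypothetical counts for the first three passages of one well
counts = [(2.0e6, 1.0e5), (1.6e6, 2.0e5), (0.9e6, 3.0e5)]
for passage_number, cpd, dead_fraction in summarize_serial_culture(counts):
    print(f"passage {passage_number}: CPD={cpd:.2f}, dead fraction={dead_fraction:.3f}")
```

Triplicate wells would each be processed this way and then averaged before being passed to the simulation software.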

The following diagram illustrates the complete experimental and computational workflow.

Key Findings on SCF Stability

Significant Inter-Donor Variation in Initial SCF

The application of KSC counting to aBMSC preparations from eight patients revealed, for the first time, a striking degree of inter-donor variation in the initial stem cell fraction. The SCF within these heterogeneous populations differed significantly among donors, with quantified values ranging from as low as 7% to as high as 77% (ANOVA p < 0.0001) [4] [1]. This finding underscores that conventional adherence to plastic and surface marker expression does not guarantee a high or consistent proportion of functional stem cells between different patient samples.

Differential SCF Stability During Serial Culture

The study further uncovered that the stability of the SCF during serial culture also exhibited a high degree of inter-donor variation. Some patient-derived aBMSC preparations demonstrated sufficient stability to support the long-term net expansion of stem cells, while others did not [4]. This suggests that the underlying "mixing parameters"—the molecular and biophysical rules governing cell fate decisions like self-renewal versus differentiation—vary significantly between individuals. The quantified SCF stability data is summarized in the table below.

Table 2: Quantified SCF and Stability Metrics from aBMSC Preparations

| Finding | Metric | Range / Outcome | Significance |

|---|---|---|---|

| Initial Stem Cell Fraction (SCF) | Percentage of true stem cells in initial population | 7% to 77% (varies significantly, ANOVA p < 0.0001) [4] [1] | Highlights donor-dependent quality of MSC preparations, not discernible via standard surface marker analysis. |

| SCF Stability (in Serial Culture) | SCF Half-Life (SCF~HL~) and net expansion potential | High inter-donor variation; some preparations stable, others not [4] [1] | Critical for biomanufacturing; identifies donor cells capable of long-term expansion. |

| Comparison to Other MSCs | SCF behavior in serial culture | Unlike other MSC sources (e.g., bone marrow, adipose), some aBMSCs show stability [1] | Suggests tissue-specific and donor-specific differences in stem cell population dynamics. |

Implications for Biomanufacturing and Therapy

Advancing Clinical-Scale Expansion

The quantification of SCF stability has direct and profound implications for the biomanufacturing of MSCs for clinical use. By identifying donor cell preparations with a high initial SCF and sufficient SCF stability, manufacturers can select optimal starting material for production. This enables a more predictable and efficient expansion process, ensuring that the final therapeutic product contains a sufficient dose of functional stem cells to elicit the desired clinical effect [4].

Toward Predictable and Standardized Dosing

The ability to quantify the SCF is a critical step toward transforming stem cell therapy from an unpredictable intervention into a standardized, dosable biological medicine. KSC counting provides a potential quality control metric for assessing the potency of MSC batches, allowing the number of kinetic stem cells in a batch to be correlated with clinical outcomes. This could support more effective and predictable results in clinical trials and, ultimately, in routine stem cell therapy (SCT) [4] [1].
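The dosing argument reduces to simple arithmetic: the stem cell dose of a batch is its live cell count multiplied by its SCF. The following is a minimal sketch of that calculation, not part of the cited studies; the function names and the target dose of one million stem cells are hypothetical values chosen for illustration.

```python
import math


def stem_cell_dose(total_live_cells: float, scf: float) -> float:
    """Estimated number of stem cells in a batch: live cells x SCF (as a 0-1 fraction)."""
    if not 0.0 <= scf <= 1.0:
        raise ValueError("scf must be a fraction between 0 and 1")
    return total_live_cells * scf


def cells_needed(target_stem_cells: float, scf: float) -> int:
    """Total live cells required to deliver a target number of stem cells."""
    if not 0.0 < scf <= 1.0:
        raise ValueError("scf must be a fraction greater than 0 and at most 1")
    return math.ceil(target_stem_cells / scf)


# Hypothetical target dose; the SCF values are the extremes reported for aBMSCs.
TARGET_STEM_CELLS = 1_000_000
for scf in (0.07, 0.77):
    print(f"SCF {scf:.0%}: {cells_needed(TARGET_STEM_CELLS, scf):,} total cells needed")
```

Under these assumptions, a preparation at the low end of the reported range (7%) needs about 11 times as many total cells as one at the high end (77%) to deliver the same stem cell dose, which is why a dose specified only in total cells can be misleading.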

Table 3: Key Research Reagents and Solutions for SCF Quantification Studies

| Item | Function / Application | Example from Protocol |
|---|---|---|
| Alveolar Bone Marrow | Source tissue for deriving patient-specific mesenchymal stem cell (MSC) preparations. | 0.5 cc marrow aspirate obtained from a 2 mm core of alveolar bone [1]. |
| Complete Culture Medium (CCM) | Supports the growth and maintenance of MSC populations in culture. | MEMα supplemented with 15% FBS, 1% antibiotic-antimycotic, 1% L-glutamine, and 1% L-ascorbic acid 2-phosphate [1]. |
| Trypan Blue Dye | Vital stain used to distinguish and manually count live (exclude dye) and dead (take up dye) cells. | Used with a hemocytometer for cell viability counting at each serial passage [1]. |
| Flow Cytometry Antibodies | Used to confirm that cell preparations meet minimal immunophenotypic criteria for MSCs. | Anti-CD73, CD90, and CD105 antibodies for positive marker expression [1]. |
| TORTOISE Test Software | Computational tool that analyzes serial culture data to determine the initial Stem Cell Fraction (SCF). | Input: CPD and dead cell fraction data. Output: Initial SCF value [1]. |
| RABBIT Count Software | Computational tool that determines the stability of the SCF during extended serial culture. | Calculates the SCF Half-Life (SCF~HL~) [1]. |

The introduction of Kinetic Stem Cell (KSC) counting represents a paradigm shift in our ability to quantify the stem cell fraction and its stability within clinically relevant MSC preparations. The findings of significant inter-donor variation in both the initial SCF and its stability in culture underscore the critical need for such quantitative measures in advanced biomanufacturing. Framed within mixing parameter research, these findings make clear that understanding and controlling the parameters that govern SCF stability is essential for developing predictable, efficacious, and standardized stem cell therapies. Integrating KSC counting as a potency assay could significantly improve the success and reliability of clinical trials and future regenerative medicine treatments.

Inter-Donor Variability in SCF and Its Implications for Process Design

Stem cell fraction (SCF) constitutes the critical functional component within heterogeneous mesenchymal stem cell (MSC) preparations, directly influencing the efficacy and predictability of stem cell therapies (SCTs). A significant challenge in biomanufacturing is the inherent inter-donor variability of SCF, which introduces substantial uncertainty in process outcomes and final product quality. This technical guide examines the quantitative extent of this variability and its impact on process design, framed within broader research on how mixing parameters and process controls affect SCF stability. We present detailed methodologies for SCF quantification, data analysis, and strategic frameworks to mitigate variability, providing researchers and drug development professionals with the tools to design more robust and reliable manufacturing processes for cell therapies.

The development of predictable stem cell therapies is critically limited by the inability to accurately dose the therapeutic agent—the stem cells themselves [20]. Unlike pharmaceutical compounds, heterogeneous MSC populations are complex mixtures of stem cells, committed progenitor cells, and differentiated cells [20]. The stem cell fraction within these mixtures is the primary determinant of therapeutic potency, yet its proportion can vary dramatically between individual donors. This inter-donor variability presents a fundamental obstacle to standardizing cell therapy manufacturing and achieving consistent clinical outcomes.

Current minimal criteria for defining MSCs—plastic adherence, specific surface marker expression, and tri-lineage differentiation potential—do not provide a quantitative measure of the SCF [20]. Furthermore, while surface markers like CD34 are sometimes used as quasi-critical quality attributes, they are not necessarily key determinants of function [21]. The functional potency of a cell population can vary significantly even among units with similar phenotypic characteristics [21]. This gap between characterization and function underscores the necessity of directly quantifying the SCF and understanding its behavior during bioprocessing. The stability of the SCF during serial culture—a common practice for cell expansion in manufacturing—is not guaranteed and shows significant inter-donor variation, impacting the long-term net expansion of stem cells [20]. This guide explores the implications of this variability for process design and outlines strategies to achieve consistent product quality.

Quantitative Evidence of Inter-Donor SCF Variability

The scale of inter-donor variability has been quantitatively demonstrated in studies of oral-derived human alveolar bone MSC (aBMSC) preparations. Using Kinetic Stem Cell (KSC) counting, a computational simulation method that determines the SCF within heterogeneous cell populations, researchers analyzed aBMSCs from eight different donors [20].

Table 1: Inter-Donor Variability in Initial Stem Cell Fraction (SCF) of aBMSCs (ANOVA across donors, p < 0.0001)

| Donor | Initial SCF (%) |
|---|---|
| 1 | 7 |
| 2 | 22 |
| 3 | 26 |
| 4 | 32 |
| 5 | 42 |
| 6 | 58 |
| 7 | 69 |
| 8 | 77 |

The data reveals that the initial SCF within these clinically relevant cell preparations can range from as low as 7% to as high as 77%, a difference of more than an order of magnitude [20]. This variation was statistically significant, highlighting that donor-specific biological factors profoundly influence the initial quality of the cell product.
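As a quick check on the scale of this spread, the donor values in Table 1 can be summarized with descriptive statistics. This is an illustrative sketch only: the function and variable names are assumptions, and the reported ANOVA p-value cannot be recomputed here because Table 1 lists a single SCF value per donor rather than replicate measurements.

```python
from statistics import mean, stdev

# Initial SCF (%) for donors 1-8, copied from Table 1.
initial_scf_percent = [7, 22, 26, 32, 42, 58, 69, 77]


def summarize(values):
    """Descriptive statistics for a list of SCF percentages."""
    avg = mean(values)
    sd = stdev(values)  # sample standard deviation (n - 1 denominator)
    return {
        "n": len(values),
        "min": min(values),
        "max": max(values),
        "fold_range": max(values) / min(values),
        "mean": avg,
        "sd": sd,
        "cv_percent": 100 * sd / avg,
    }


summary = summarize(initial_scf_percent)
for name, value in summary.items():
    print(f"{name}: {value:.3g}")
```

The fold range (maximum divided by minimum) is 11, consistent with the "more than an order of magnitude" description, and the mean across the eight donors is about 41.6%.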

Furthermore, inter-donor variation is not limited to the initial SCF but also extends to the stability of the SCF during serial culture, a critical parameter for manufacturing scale-up. The SCF half-life (SCF~HL~), which measures the rate at which the stem cell fraction decays during passaging, also differs significantly among donors [20]. Some donor preparations exhibit sufficient stability to support long-term net stem cell expansion, while others do not, directly impacting the feasibility and yield of a manufacturing run.
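To make the half-life concept concrete, the sketch below assumes a simple exponential decay of the SCF with the half-life expressed in passages. Both the decay model and the unit are assumptions made for illustration; the model used internally by the RABBIT Count software is not described here, and the numeric inputs are hypothetical.

```python
import math


def scf_after(scf0: float, half_life: float, passages: float) -> float:
    """SCF after a number of passages, assuming exponential decay (an assumption)."""
    return scf0 * 0.5 ** (passages / half_life)


def passages_to_threshold(scf0: float, half_life: float, threshold: float) -> float:
    """Passages until the SCF falls to a threshold under the same assumed model."""
    if threshold >= scf0:
        return 0.0
    return half_life * math.log2(scf0 / threshold)


def net_stem_cell_fold(pd_per_passage: float, half_life: float) -> float:
    """Fold change in stem cell number per passage.

    Total cells grow by 2**pd_per_passage while the SCF shrinks by 2**(-1/half_life),
    so stem cells (total x SCF) change by 2**(pd_per_passage - 1/half_life).
    """
    return 2.0 ** (pd_per_passage - 1.0 / half_life)


# Hypothetical inputs: 77% initial SCF, half-life of 4 passages, 2 doublings per passage.
print(scf_after(0.77, 4.0, 4.0))
print(passages_to_threshold(0.77, 4.0, 0.10))
print(net_stem_cell_fold(2.0, 4.0))
```

Under this assumed model, net stem cell expansion occurs only when the population doublings per passage exceed the reciprocal of the half-life, which is one way to read the observation that some donor preparations support long-term net expansion while others do not.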

Implications for Bioprocess Design and Development

The substantial inter-donor variability in SCF has profound implications for the design and control of bioprocesses in cell therapy manufacturing. A process optimized for a cell population with a 77% SCF may fail entirely or yield a sub-therapeutic product when applied to a population with a 7% SCF.

Impact on Critical Process Parameters and Final Product Quality

The starting number of target stem cells is a critical factor in process success. Too few cells can lead to process failure or poor economic return, while too many can alter growth or transformation kinetics [21]. As shown in the cord blood banking experience, incoming products exhibit a wide, natural distribution in key attributes like Total Nucleated Cell (TNC) count and volume [21]. If unaccounted for, this variability propagates through the manufacturing process, leading to inconsistent final product quality and unpredictable clinical performance. The three primary strategies to reduce this variability are selection, automation of the design space, and rejection [21].

Table 2: Strategies for Managing Donor Variability in Process Design

| Strategy | Description | Application Example |
|---|---|---|
| Selection | Pre-screening incoming donor material based on critical quality attributes (CQAs) correlated to functional outcome. | Selecting cord blood units with high TNC or CD34+ cell counts [21]. Using donor age (younger donors fare better) as a selection criterion [21]. |
| Automation of the Design Space | Employing Quality by Design (QbD) to understand and control how input variability and process parameters interact to affect the Target Quality Product Profile (TQPP). | Using QbD to test how centrifugation speeds, cell densities, and donor variability impact the TQPP and lock optimal parameters into an automated control space [21]. |
| Rejection | Discarding donor material that does not meet pre-defined specifications for CQAs, even if its functional impact is not fully known. | Acknowledging that characterization alone does not guarantee function and that some complex cell systems will be unsuitable for processing [21]. |
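The selection and rejection strategies in the table above can be expressed as a simple screening rule applied to each incoming donor lot. The sketch below is hypothetical throughout: the `DonorLot` type, the `triage` function, the threshold values, and the example half-lives are illustrative assumptions and are not specifications from the cited sources.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DonorLot:
    donor_id: str
    initial_scf: float    # fraction between 0 and 1
    scf_half_life: float  # assumed unit: passages


def triage(lot: DonorLot, min_scf: float = 0.20, min_half_life: float = 3.0) -> str:
    """Classify a lot as 'select', 'review', or 'reject'.

    Thresholds are placeholders; real limits would come from process
    characterization data for the specific product.
    """
    if lot.initial_scf < min_scf:
        return "reject"   # too few stem cells at the start
    if lot.scf_half_life < min_half_life:
        return "review"   # adequate start, but SCF may not survive expansion
    return "select"


# Initial SCF values echo the reported 7% to 77% range; half-lives are invented.
lots = [
    DonorLot("donor-1", 0.07, 6.0),
    DonorLot("donor-5", 0.42, 1.5),
    DonorLot("donor-8", 0.77, 6.0),
]
for lot in lots:
    print(lot.donor_id, triage(lot))
```

Keeping a separate "review" outcome reflects the point made in the table that characterization alone does not guarantee function: a lot with a good initial SCF but poor stability may still be usable in a shorter process.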

The Workflow for Managing SCF Variability

Figure: Workflow for analyzing and managing inter-donor SCF variability in process design, from cell isolation to process optimization.

Experimental Protocols for SCF Analysis

A rigorous, data-driven approach to process design requires robust experimental methods to quantify SCF and its stability. The following protocol details the KSC counting method.

Kinetic Stem Cell (KSC) Counting Protocol

Objective: To determine the initial Stem Cell Fraction (SCF) and the SCF half-life (SCF~HL~) of a heterogeneous MSC-containing population during serial culture.

Materials and Reagents:

- Source Tissue: Human alveolar bone marrow tissue (or other MSC source).

- Complete Culture Medium (CCM): MEMα supplemented with 15% FBS, 1% antibiotic-antimycotic, 1% L-glutamine, and 1% L-ascorbic acid 2-phosphate [20].

- Enzymes: Trypsin-EDTA for cell harvesting.

- Staining Antibodies: For flow cytometry characterization (e.g., CD73-BV421, CD90-FITC, CD105-PE) and corresponding isotype controls [20].

- Software: TORTOISE Test (v2.0) and RABBIT Count (v1.0) for KSC counting simulations [20].

Methodology:

- Cell Isolation and Culture: Resuspend and centrifuge alveolar bone marrow tissue in MEMα. Plate the cell pellet in CCM in T-25 flasks. After 5 days, remove non-adherent cells and change medium every three days thereafter [20].

- Serial Cell Culture: Perform adherent serial cell cultures in triplicate in 6-well plates. Seed a defined number of cells (e.g., 1 x 10^5 cells/well). Passage the cells on a fixed schedule (e.g., transfer 1/20 of the total recovered live cells every 72 hours). Continue serial culture until no cells can be detected [20].

- Data Collection: At each passage, count both live and dead cells using trypan blue exclusion and a hemocytometer [20].

- Data Analysis:

  - Calculate the cumulative population doublings (CPD) and the dead cell fraction at each passage from the triplicate counts.

  - Input these data into the TORTOISE Test software to derive the initial SCF for the cell preparation.

  - Use the RABBIT Count software to determine the SCF half-life (SCF~HL~) during serial culture [20].

  - Perform statistical analyses (e.g., one-way ANOVA) to assess the significance of SCF variation among different donors [20].
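The first data analysis step can be sketched as follows. This is a minimal illustration that assumes the conventional definition of a population doubling, log2(live cells harvested / live cells seeded), and assumes the counts passed in are already averaged across the triplicate wells. The function name and the example counts are hypothetical, and the input format expected by the TORTOISE Test software is not specified here.

```python
import math


def passage_metrics(initial_seeded, live_counts, dead_counts, split_fraction=1 / 20):
    """Per-passage population doublings (PD), cumulative PD, and dead cell fraction.

    live_counts and dead_counts hold one (triplicate-averaged) count per passage.
    After each harvest, split_fraction of the live cells seeds the next passage.
    """
    rows = []
    seeded = initial_seeded
    cpd = 0.0
    for passage, (live, dead) in enumerate(zip(live_counts, dead_counts), start=1):
        if live <= 0 or seeded <= 0:
            break  # no detectable live cells: the serial culture has ended
        pd = math.log2(live / seeded)  # negative when the population declines
        cpd += pd
        rows.append({
            "passage": passage,
            "pd": pd,
            "cpd": cpd,
            "dead_fraction": dead / (live + dead),
        })
        seeded = live * split_fraction
    return rows


# Hypothetical counts for three passages seeded at 1 x 10^5 cells per well.
for row in passage_metrics(1e5, [8e5, 1.6e5, 2e4], [2e5, 4e4, 2e4]):
    print(row)
```

Recording the dead cell fraction alongside the CPD at every passage matters because both series are the inputs listed for the initial SCF determination.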

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagents and Materials for SCF Quantification and Culture

| Item | Function/Description | Example Use in Protocol |
|---|---|---|
| MEMα Medium | Base medium for cell culture, providing essential nutrients and salts. | Foundation for the Complete Culture Medium (CCM) used for expanding aBMSCs [20]. |
| Fetal Bovine Serum (FBS) | Critical supplement providing growth factors, hormones, and adhesion factors for cell growth. | Added at 15% to MEMα to create the CCM for supporting MSC proliferation [20]. |
| Trypsin-EDTA | Proteolytic enzyme solution used to dissociate adherent cells from the culture surface. | Harvesting adherent aBMSCs from tissue culture flasks and plates for passaging and counting [20]. |
| CD73, CD90, CD105 Antibodies | Fluorescently-labeled antibodies for detecting classic MSC surface markers via flow cytometry. | Characterizing the immunophenotype of the isolated cell population according to ISCT criteria [20]. |
| KSC Counting Software (TORTOISE Test, RABBIT Count) | Computational simulation tools for determining the stem cell fraction and its stability from serial culture data. | Analyzing live and dead cell count data to calculate the initial SCF (TORTOISE Test) and the SCF half-life (RABBIT Count) [20]. |

Inter-donor variability in the Stem Cell Fraction is a formidable, yet manageable, challenge in the biomanufacturing of cell therapies. Quantitative data confirms that this variability is significant and impacts both the starting material and its behavior during expansion processes. By adopting a structured approach that incorporates rigorous SCF quantification via KSC counting, and implementing strategic process controls based on selection, automated QbD, and rejection, manufacturers can mitigate the risks posed by variability. This leads to the production of more consistent, well-defined, and potent cell therapy products, ultimately enhancing the predictability and success of clinical treatments. Future research must continue to elucidate the biological underpinnings of this variability and refine mixing parameters and process controls to further enhance SCF stability across diverse donor populations.