Optimizing Mixing Parameters for SCF Efficiency: A Systematic Guide for Biomedical Researchers

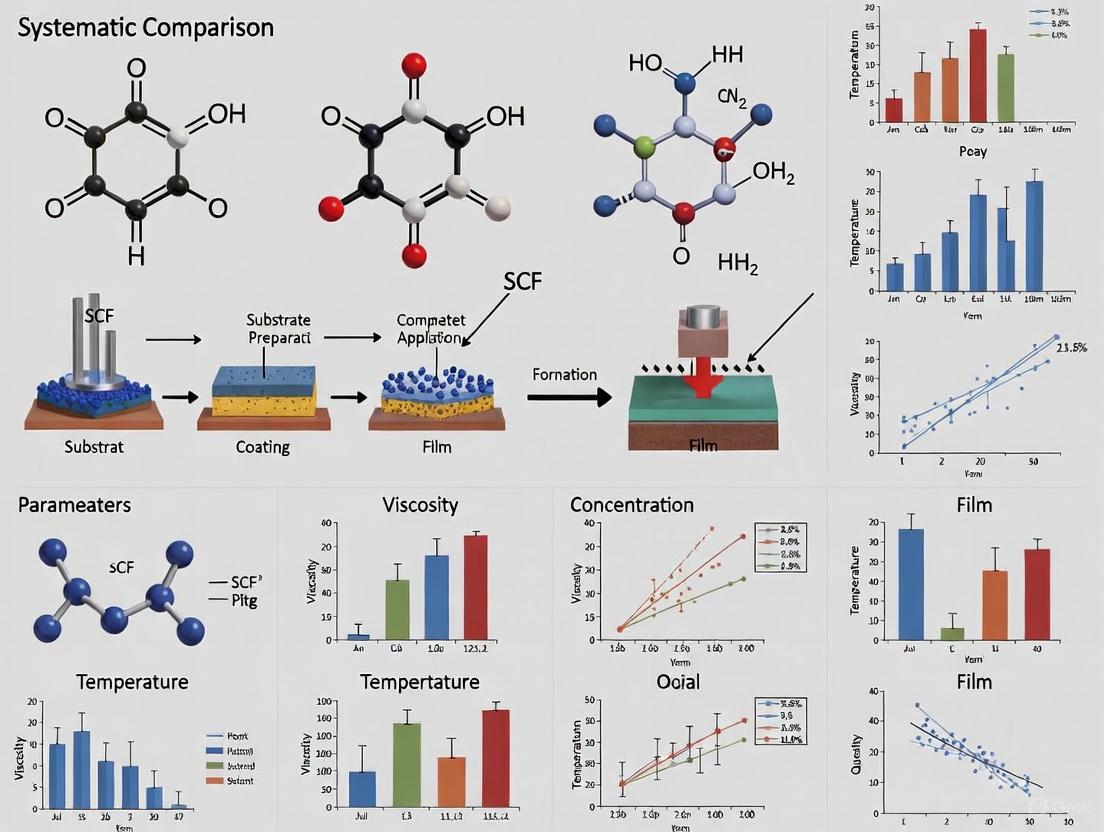

Abstract

This article provides a systematic comparison of mixing parameter values and their critical impact on the efficiency and convergence of Self-Consistent Field (SCF) methods, with a specific focus on applications in biomedical research and drug development. It explores the foundational principles of SCF iterations, details advanced methodological approaches for parameter selection, offers practical troubleshooting strategies for challenging systems, and establishes a framework for the rigorous validation and benchmarking of SCF performance. Designed for computational chemists, structural biologists, and pharmaceutical scientists, this guide aims to enhance the reliability and throughput of electronic structure calculations in high-throughput screening and materials discovery.

SCF Fundamentals: Core Principles and the Critical Role of Mixing Parameters

Theoretical Foundations of Self-Consistent Field Theory

Self-Consistent Field (SCF) theory forms the computational backbone for solving fundamental equations in quantum chemistry and materials physics, including Hartree-Fock (HF) theory and Kohn-Sham Density Functional Theory (KS-DFT). The core challenge addressed by SCF methodologies is the intrinsic interdependence of electronic interactions: the effective potential experienced by an electron depends on the positions of all other electrons, which themselves are influenced by this same potential. This circular dependency necessitates an iterative solution process that continues until consistency is achieved between the input and output electron densities or wavefunctions [1] [2].

Mathematically, the SCF procedure for solving the Hartree-Fock equations can be expressed as a nonlinear eigenvalue problem. The Hamiltonian operator, \( H \), depends on the electron density \( \rho(\mathbf{r}) \) and density matrix \( P(\mathbf{r}, \mathbf{r'}) \), leading to the equation: \[ H[\rho(\mathbf{r}), P(\mathbf{r}, \mathbf{r'})]\, \phi_\ell = \left(-\frac{1}{2} \Delta + V_{\text{ext}} + V_{\text{Har}}[\rho] + V_{\text{x}}[P]\right) \phi_\ell = \lambda_\ell \phi_\ell, \quad \ell = 1, \cdots, N_e \] where \( V_{\text{ext}} \) represents the external potential, \( V_{\text{Har}} \) is the Hartree potential describing electron-electron repulsion, and \( V_{\text{x}} \) is the nonlocal Fock exchange potential [2]. The Fock exchange operator is particularly computationally challenging due to its nonlocal nature, requiring sophisticated approximation strategies to make large-scale calculations feasible [2].

In practical implementations using finite basis sets such as Gaussian-type orbitals or plane waves, these continuous equations are transformed into matrix formulations. The Roothaan-Hall equations for restricted closed-shell systems take the form of a generalized eigenvalue problem: \[ \mathbf{F} \mathbf{C} = \mathbf{S} \mathbf{C} \mathbf{E} \] where \( \mathbf{F} \) is the Fock matrix, \( \mathbf{S} \) is the overlap matrix of basis functions, \( \mathbf{C} \) contains the molecular orbital coefficients, and \( \mathbf{E} \) is a diagonal matrix of orbital energies [1]. The SCF iterative process aims to find a converged set of orbitals where the density matrix commutes with the Fock matrix (\( \mathbf{F} \mathbf{P} \mathbf{S} - \mathbf{S} \mathbf{P} \mathbf{F} = \mathbf{0} \)), signaling self-consistency [3].
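As a minimal numerical sketch of the Roothaan-Hall step and the commutator test, the generalized eigenproblem can be reduced to a standard one by symmetric (Löwdin) orthogonalization. The matrices below are random stand-ins rather than a real molecule, and `solve_roothaan_hall` is a hypothetical helper name. Because the stand-in Fock matrix is held fixed here, the commutator vanishes after a single diagonalization; in a real SCF calculation the Fock matrix depends on the density, so the commutator only vanishes at self-consistency.

```python
import numpy as np

def solve_roothaan_hall(F, S):
    """Solve F C = S C E by symmetric (Loewdin) orthogonalization."""
    s_vals, s_vecs = np.linalg.eigh(S)
    X = s_vecs @ np.diag(s_vals ** -0.5) @ s_vecs.T   # X = S^(-1/2)
    orbital_energies, C_orth = np.linalg.eigh(X @ F @ X)
    return orbital_energies, X @ C_orth               # columns satisfy C^T S C = I

rng = np.random.default_rng(0)
n_basis, n_occ = 6, 2

# Assumed toy inputs: a symmetric "Fock" matrix and a positive-definite overlap.
A = rng.normal(size=(n_basis, n_basis))
F = 0.5 * (A + A.T)
B = rng.normal(size=(n_basis, n_basis))
S = np.eye(n_basis) + 0.1 * (B @ B.T) / n_basis

orbital_energies, C = solve_roothaan_hall(F, S)
C_occ = C[:, :n_occ]
P = 2.0 * C_occ @ C_occ.T                             # closed-shell density matrix

commutator = F @ P @ S - S @ P @ F
print("max |FPS - SPF| =", np.abs(commutator).max())
```

Production codes monitor a norm of this commutator (the DIIS error) rather than testing it against exact zero.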

Comparative Analysis of SCF Convergence Algorithms

Various algorithms have been developed to optimize the convergence behavior of SCF iterations, each with distinct strengths and computational characteristics. The choice of algorithm significantly impacts both the reliability and efficiency of electronic structure calculations, particularly for challenging systems with metallic characteristics, small HOMO-LUMO gaps, or open-shell configurations [4] [3].

Table 1: Comparison of SCF Convergence Algorithms

| Algorithm | Key Principle | Strengths | Weaknesses | Typical Applications |
|---|---|---|---|---|
| DIIS (Direct Inversion in Iterative Subspace) [3] | Extrapolation using error vectors from previous iterations | Fast convergence for well-behaved systems | May converge to false solutions; sensitive to initial guess | Default choice for most closed-shell systems |
| GDM (Geometric Direct Minimization) [3] | Energy minimization in orbital rotation space | Highly robust; proper treatment of curved geometry | Less efficient than DIIS in early iterations | Restricted open-shell; fallback when DIIS fails |
| ADIIS (Accelerated DIIS) [3] | Combines energy interpolation with DIIS | Improved stability over plain DIIS | - | Alternative to DIIS for problematic cases |
| RCA (Relaxed Constraint Algorithm) [3] | Guarantees energy decrease at each step | High stability | Less efficient | Initial iterations for difficult systems |
| Two-Level Nested SCF [2] | Decouples exchange operator optimization from density refinement | Reduces computational cost of exchange term | Implementation complexity | Large systems with hybrid functionals |

For systems exhibiting convergence difficulties, such as those with transition metals or dissociating bonds, the recommended strategy often involves combining algorithms. One effective approach uses DIIS initially to rapidly approach the solution basin, then switches to the more robust GDM algorithm for final convergence [3]. This hybrid methodology balances the aggressive early convergence of DIIS with the stability of direct minimization methods.

The efficiency of SCF calculations can also be improved substantially by optimizing charge mixing parameters. Recent research demonstrates that Bayesian optimization of these parameters can achieve faster convergence than default settings [5]. The savings matter most in high-throughput materials screening and molecular dynamics simulations, where many consecutive SCF calculations are performed.

Experimental Protocols for SCF Efficiency Research

Benchmarking Methodology for Convergence Behavior

Systematic evaluation of SCF algorithm performance requires standardized benchmarking protocols that control for key variables while measuring relevant performance metrics. The fundamental methodology involves selecting a diverse test set of molecular systems with varying electronic structure complexity, then applying each SCF algorithm with consistent convergence criteria and computational settings [6].

Test systems should include representatives from multiple chemical domains: main-group organic molecules, organometallic complexes, systems with open-shell configurations, and materials with small HOMO-LUMO gaps. For each system, researchers typically perform single-point energy calculations from consistent initial guesses while tracking (1) the number of SCF iterations until convergence, (2) the computational time required, (3) the final total energy, and (4) the evolution of convergence metrics across iterations [6] [3]. For meaningful comparisons, all calculations must employ identical basis sets, integration grids, and integral thresholds [7].

The convergence criteria must be standardized across comparisons. The ORCA quantum chemistry package, for instance, offers tiered convergence presets from "Sloppy" to "Extreme" with specific thresholds for energy change (TolE), density change (TolRMSP, TolMaxP), and DIIS error (TolErr) [7]. For production-level geometry optimizations and frequency calculations, tighter criteria (TolE = \( 10^{-8} \) to \( 10^{-9} \) a.u.) are recommended compared to single-point energy calculations (TolE = \( 10^{-6} \) a.u.) [7] [3].

Table 2: Standard SCF Convergence Criteria in ORCA (TightSCF Settings)

| Convergence Metric | Threshold Value | Physical Significance |
|---|---|---|
| TolE (Energy Change) | \( 1 \times 10^{-8} \) | Maximum change in total energy between cycles |
| TolRMSP (RMS Density Change) | \( 5 \times 10^{-9} \) | Root-mean-square change in density matrix elements |
| TolMaxP (Max Density Change) | \( 1 \times 10^{-7} \) | Largest change in any density matrix element |
| TolErr (DIIS Error) | \( 5 \times 10^{-7} \) | Maximum element of the DIIS error vector |
| TolG (Orbital Gradient) | \( 1 \times 10^{-5} \) | Maximum orbital rotation gradient |
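For benchmarking scripts that post-process per-cycle SCF output, the thresholds in the table can be applied with a small helper such as the one sketched below. The function name `check_convergence` and the example metric values are hypothetical, and the sketch simply requires every criterion to pass; how ORCA itself combines the criteria is governed by its own settings and is not reproduced here.

```python
# TightSCF thresholds as listed in the table above.
TIGHT_SCF = {
    "TolE": 1e-8,     # energy change between cycles
    "TolRMSP": 5e-9,  # RMS density change
    "TolMaxP": 1e-7,  # maximum density change
    "TolErr": 5e-7,   # DIIS error
    "TolG": 1e-5,     # orbital gradient
}

def check_convergence(metrics, thresholds=TIGHT_SCF):
    """Return (converged, failing) where failing lists criteria above threshold."""
    failing = [name for name, tol in thresholds.items()
               if abs(metrics[name]) > tol]
    return (not failing), failing

# Hypothetical metrics from one SCF cycle.
example_metrics = {"TolE": 3e-9, "TolRMSP": 2e-9, "TolMaxP": 4e-7,
                   "TolErr": 1e-7, "TolG": 2e-6}
converged, failing = check_convergence(example_metrics)
print(converged, failing)
```

Reporting which criterion failed, rather than a single yes/no flag, makes it easier to see whether a stalled calculation is limited by the energy or by the density.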

Protocol for Mixing Parameter Optimization

The efficiency of SCF calculations is strongly influenced by mixing parameters that control how the Fock or density matrix is updated between iterations. The DIIS algorithm, for instance, uses a mixing parameter (typically defaulting to 0.2) that determines the fraction of the new Fock matrix included when constructing the next guess [4]. Systematic optimization of these parameters can dramatically improve convergence rates.

The experimental protocol for mixing parameter optimization involves:

- Selecting a representative training set of molecules with diverse electronic structures

- Performing SCF calculations with varying mixing parameters (e.g., from 0.01 to 0.5)

- Measuring convergence rates for each parameter set by tracking iterations to convergence

- Applying Bayesian optimization to efficiently navigate the parameter space [5]

- Validating optimized parameters on a separate test set of molecular systems

For problematic systems, reduced mixing parameters (e.g., 0.015) with increased DIIS subspace size (e.g., 25 vectors) and delayed DIIS onset (e.g., 30 initial cycles) can significantly improve stability, albeit at the cost of slower convergence [4].
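The scanning step of this protocol can be sketched on a toy problem. In the example below, a linear fixed-point map with hand-picked Jacobian eigenvalues stands in for a real SCF calculation, and a plain grid scan stands in for the Bayesian optimizer of [5]; the helper `iterations_to_converge` and all numerical values are illustrative assumptions.

```python
import numpy as np

def iterations_to_converge(g, x0, alpha, tol=1e-8, max_iter=500):
    """Count damped fixed-point iterations x <- x + alpha * (g(x) - x)."""
    x = x0.copy()
    for iteration in range(1, max_iter + 1):
        residual = g(x) - x
        if np.linalg.norm(residual) < tol:
            return iteration
        x = x + alpha * residual
    return None  # no convergence within max_iter

# Toy stand-in for the SCF map: Jacobian eigenvalues from -4 (stiff) to 0.5 (slow).
J = np.diag([-4.0, -1.0, 0.0, 0.5])
b = np.ones(4)
g = lambda x: J @ x + b

alphas = [0.01, 0.05, 0.1, 0.2, 0.3, 0.4, 0.5]
results = {a: iterations_to_converge(g, np.zeros(4), a) for a in alphas}

converged = {a: n for a, n in results.items() if n is not None}
best_alpha = min(converged, key=converged.get)

for a in alphas:
    print(f"alpha = {a:<5} iterations = {results[a]}")
print("best alpha on this toy problem:", best_alpha)
```

In this toy map, mixing values of 0.4 and above fail because of the stiff mode, while very small values are stable but too slow to converge within the iteration cap. This mirrors the stability versus speed trade-off described above.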

Advanced SCF Methodologies and Computational Efficiency

Two-Level Nested SCF for Exchange Operator Optimization

The two-level nested SCF approach represents an innovative strategy for handling the computational bottleneck of Fock exchange evaluation in hybrid DFT and Hartree-Fock calculations [2]. This method decouples the optimization of the exchange operator from the refinement of the electron density, creating a hierarchical iteration structure:

The outer loop focuses exclusively on optimizing the Fock exchange operator, which contributes relatively little to the total energy but is computationally expensive. Since the exchange operator evolves slowly, this loop requires only a few iterations (typically 3-5). The inner loop then converges the electron density with the exchange operator fixed, benefiting from significantly reduced computational cost per iteration [2]. This hierarchical approach confines the expensive exchange operator construction exclusively to the outer loop, dramatically improving computational efficiency for large systems.
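The loop structure can be illustrated with a deliberately simple linear model. This is not the method of [2]: the matrices `A` and `C`, the function `nested_scf`, and the tolerances are all assumptions chosen so that convergence is guaranteed, with `C @ x` playing the role of the expensive, weakly contributing exchange-like term.

```python
import numpy as np

def nested_scf(b, A, C, inner_tol=1e-12, outer_tol=1e-10,
               max_outer=50, max_inner=500):
    """Solve x = b + A x + C x with the 'expensive' term C x frozen in the inner loop."""
    x = np.zeros_like(b)
    K = C @ x                      # expensive term, built only in the outer loop
    n_expensive, n_cheap = 1, 0
    for _ in range(max_outer):
        for _ in range(max_inner):  # inner loop: cheap updates with K fixed
            x_new = b + A @ x + K
            n_cheap += 1
            step = np.linalg.norm(x_new - x)
            x = x_new
            if step < inner_tol:
                break
        K_new = C @ x               # outer loop: refresh the expensive term
        n_expensive += 1
        if np.linalg.norm(K_new - K) < outer_tol:
            return x, n_expensive, n_cheap
        K = K_new
    raise RuntimeError("outer loop did not converge")

rng = np.random.default_rng(1)
n = 8
M1, M2 = rng.normal(size=(n, n)), rng.normal(size=(n, n))
A = 0.5 * M1 / np.linalg.norm(M1, 2)    # cheap part, spectral norm 0.5
C = 0.05 * M2 / np.linalg.norm(M2, 2)   # expensive part, small contribution
b = rng.normal(size=n)

x, n_expensive, n_cheap = nested_scf(b, A, C)
print("expensive builds:", n_expensive, " cheap updates:", n_cheap)
```

The point of the sketch is the bookkeeping: the expensive term is rebuilt once per outer iteration, while the many inner iterations reuse it.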

Approximation Techniques for Exchange Operators

The computational cost of the nonlocal Fock exchange operator has motivated developing approximation techniques that maintain accuracy while reducing operational complexity. A generalized framework for constructing approximate exchange operators aims to replicate the effect of the exact operator on occupied orbitals while introducing tunable parameters for flexibility [2].

These approximation methods include:

- Low-rank decomposition of the exchange operator to reduce memory requirements

- Adaptively compressed exchange operators that maintain accuracy for occupied orbitals

- Projection-based techniques that focus computational effort on the chemically relevant occupied subspace

- Quantized tensor train (QTT) representations for efficient storage and manipulation

The performance of these approximate exchange operators has been demonstrated to achieve near-identical energies compared to exact exchange operators while providing substantial improvements in computational efficiency, particularly for large basis set calculations [2].

Essential Computational Tools for SCF Research

Table 3: Research Reagent Solutions for SCF Methodology Development

| Tool/Category | Representative Examples | Primary Function in SCF Research |
|---|---|---|
| Electronic Structure Packages | Q-Chem, ORCA, VASP, Psi4, NWChem | Provide implementation platforms for SCF algorithms with various exchange-correlation functionals and basis sets |
| SCF Convergence Algorithms | DIIS, GDM, ADIIS, RCA | Enable efficient and robust convergence of self-consistent field equations |
| Basis Sets | def2-TZVPD, cc-pVNZ, Gaussian-type orbitals, numerical atomic orbitals | Expand molecular orbitals in finite basis representation for practical computation |
| Exchange-Correlation Functionals | ωB97M-V, B97-3c, r2SCAN-3c, hybrid functionals | Define the approximation level for electron exchange and correlation effects |
| Solvation Models | CPCM-X, COSMO-RS, Generalized Born | Account for environmental effects in reduction potential and other solution-phase properties |
| Wavefunction Analysis Tools | Density matrix analysis, orbital localization, stability analysis | Diagnose convergence problems and verify physical meaningfulness of solutions |

The integration of these computational tools enables comprehensive benchmarking of SCF methodologies. For example, studies evaluating reduction potentials and electron affinities compare neural network potentials (NNPs) trained on large datasets like OMol25 against traditional DFT and semiempirical methods [6]. Such benchmarks provide valuable insights into the accuracy-efficiency tradeoffs of different electronic structure approaches, guiding researchers in selecting appropriate methods for specific applications.

For researchers investigating SCF convergence behavior, the Q-Chem package offers particularly sophisticated diagnostics and algorithm options, including DIIS error tracking, orbital gradient monitoring, and specialized methods for open-shell systems [3]. Similarly, ORCA provides detailed control over convergence thresholds and multiple algorithm combinations for challenging cases [7].

Self-Consistent Field (SCF) iteration serves as a fundamental computational algorithm in quantum chemistry and electronic structure theory, essential for solving the nonlinear eigenproblems that arise in methods like Density Functional Theory (DFT) and Hartree-Fock (HF). The efficiency and robustness of SCF calculations directly impact research productivity across scientific domains, including drug development where predicting molecular properties requires reliable quantum chemical computations. Achieving rapid and stable SCF convergence remains challenging, particularly for systems with small HOMO-LUMO gaps, transition metal complexes, and metallic systems exhibiting charge-sloshing instabilities. The interplay between three critical algorithmic components—damping parameters, preconditioners, and convergence criteria—largely determines SCF performance. This guide provides a systematic comparison of these elements, synthesizing current research and experimental data to inform efficient algorithm selection and implementation.

Core Concepts and Definitions

Damping Parameters

Damping parameters refer to the numerical factors that control the step size taken during each SCF iteration, determining how much of the newly computed potential or density is mixed with the previous iteration's result. Simple linear mixing employs a fixed damping parameter α in the update formula \( \rho_{k+1} = \rho_k + \alpha\,(F[\rho_k] - \rho_k) \), where F represents the fixed-point map of the SCF procedure. The primary function of damping is to stabilize convergence by preventing large oscillations between iterations, especially important for challenging systems where simple undamped iterations diverge. Optimal damping selection traditionally requires manual tuning, but recent advances introduce adaptive damping algorithms that automatically determine the optimal step size at each iteration through backtracking line searches, eliminating the need for user-specified parameters and enhancing robustness for difficult systems like elongated supercells and transition-metal alloys [8].
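Why a smaller α stabilizes the iteration can be seen from a short linearization of the update formula. The derivation below is a sketch under stated assumptions: \( \rho^* \) denotes the self-consistent density, \( J \) the Jacobian of the fixed-point map at \( \rho^* \), and the eigenvalues \( \lambda_i \) of \( J \) are assumed real and smaller than 1.

```latex
% Error e_k = \rho_k - \rho^*, with F[\rho_k] \approx \rho^* + J e_k near the solution
\begin{aligned}
e_{k+1} &= e_k + \alpha \left( F[\rho_k] - \rho_k \right)
         \approx \left[ I - \alpha (I - J) \right] e_k , \\
\text{convergence} &\iff \left| 1 - \alpha (1 - \lambda_i) \right| < 1 \quad \text{for all } i
         \iff 0 < \alpha < \frac{2}{1 - \lambda_{\min}} .
\end{aligned}
% Example: \lambda_{\min} = -4 gives \alpha < 0.4; the undamped choice \alpha = 1
% has amplification factor 1 - 5 = -4 and diverges with oscillations.
```

Strongly negative eigenvalues, as produced by charge sloshing, therefore force small damping values unless a preconditioner reshapes the spectrum first.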

Preconditioners

Preconditioners are mathematical operators that approximate the inverse Jacobian of the SCF fixed-point map, effectively transforming the problem to improve its numerical conditioning. They address the fundamental challenge that the convergence rate of SCF iterations depends on the spectral properties of the dielectric operator, which can be particularly ill-conditioned in metallic systems with long-range charge fluctuations known as "charge-sloshing." Different preconditioning strategies have been developed:

- Kerker Preconditioner: Specifically designed for metallic systems, this method suppresses long-wavelength charge sloshing by incorporating a simple model dielectric response in Fourier space, with the preconditioner taking the form P_Kerker(q) = q²/(q² + 4πγ̂) [9].

- Elliptic Preconditioner: This advanced approach addresses heterogeneous systems containing mixed metal/insulator/vacuum regions by solving an elliptic partial differential equation with spatially varying coefficients, providing robust convergence across diverse material types [9].

- LDOS-Based and Low-Rank Preconditioners: These modern techniques utilize the local density of states or construct low-rank approximations of the dielectric response to adaptively precondition systems with complex electronic structures [9].
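The Kerker form quoted above is simple enough to sketch directly. The example below applies the filter q²/(q² + 4πγ̂) to a one-dimensional periodic residual using NumPy FFTs; the function name, the grid, the box length, and the value chosen for the 4πγ̂ term (`four_pi_gamma`) are illustrative assumptions, not settings from any particular code.

```python
import numpy as np

def kerker_precondition(residual, box_length, four_pi_gamma):
    """Apply q^2 / (q^2 + 4*pi*gamma) to a periodic 1D residual in Fourier space."""
    n = residual.size
    q = 2.0 * np.pi * np.fft.fftfreq(n, d=box_length / n)   # wave vectors
    weights = q**2 / (q**2 + four_pi_gamma)                 # equals 0 at q = 0
    return np.fft.ifft(weights * np.fft.fft(residual)).real

n_grid, box_length = 64, 10.0
x = np.arange(n_grid) * box_length / n_grid

# Residual with a uniform shift, one long-wavelength mode, and one short-wavelength mode.
residual = (1.0
            + np.cos(2 * np.pi * x / box_length)
            + np.cos(2 * np.pi * 16 * x / box_length))
filtered = kerker_precondition(residual, box_length, four_pi_gamma=1.0)

amp_in, amp_out = np.abs(np.fft.fft(residual)), np.abs(np.fft.fft(filtered))
ratio_long = amp_out[1] / amp_in[1]     # long-wavelength mode
ratio_short = amp_out[16] / amp_in[16]  # short-wavelength mode
print(f"long-wavelength kept: {ratio_long:.3f}, short-wavelength kept: {ratio_short:.3f}")
```

The long-wavelength component, which drives charge sloshing, is strongly damped, while short-wavelength components pass through almost unchanged.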

Convergence Criteria

Convergence criteria define the numerical thresholds that determine when an SCF calculation has reached a sufficiently self-consistent solution. These criteria establish the target precision for the energy and wavefunction, balancing computational cost against result accuracy. Common convergence metrics include:

- Energy Change (TolE): The change in total energy between successive iterations [7]

- Density Change (TolMaxP, TolRMSP): The maximum and root-mean-square changes in the density matrix or electron density [7]

- DIIS Error (TolErr): The error vector norm in Direct Inversion in the Iterative Subspace methods [3]

- Orbital Gradient (TolG): The norm of the orbital rotation gradient [7]

Quantum chemistry packages like ORCA and Q-Chem implement predefined convergence profiles (Sloppy, Loose, Medium, Strong, Tight, VeryTight, Extreme) that bundle specific threshold values for these criteria, allowing users to select an appropriate accuracy level for their specific application [7].

Comparative Performance Analysis

Damping Schemes: Fixed vs. Adaptive

Table 1: Comparison of Damping Schemes for Challenging Molecular Systems

| Damping Scheme | Key Features | Convergence Behavior | Optimal For Systems With | Implementation Complexity |
|---|---|---|---|---|
| Fixed Damping | Constant α value; Requires manual tuning; Simple implementation | Unsystematic pattern of success/failure; Often diverges for difficult systems | Well-behaved molecular systems; Small organic molecules | Low (user-input parameter) |
| Adaptive Damping | Automatic α selection via line search; Energy minimization guarantee; Parameter-free | Robust convergence; Monotonic energy decrease; Reduced sensitivity to initial guess | Elongated supercells; Surfaces; Transition-metal alloys [8] | Medium (algorithmic implementation) |
| Optimal Damping Algorithm (ODA) | Ensures monotonic energy decrease; Strong convergence guarantees | Historically used with explicit density matrix representation | Atom-centered basis sets [8] | High (requires density matrix) |

Experimental studies demonstrate that adaptive damping algorithms significantly outperform fixed damping approaches for challenging systems. In tests conducted on elongated supercells, surfaces, and transition-metal alloys, the adaptive damping method achieved reliable convergence where fixed damping schemes exhibited unpredictable failure patterns. The key advantage of adaptive damping lies in its energy-based minimization approach, which provides theoretical convergence guarantees absent in residual-based methods. This approach constructs an inexpensive model for the SCF energy as a function of the damping parameter at each iteration, enabling automatic selection of the optimal step size without user intervention [8].

Preconditioner Performance Across System Types

Table 2: Preconditioner Efficiency for Different Electronic Structure Types

| Preconditioner Type | Metallic Systems | Insulating Systems | Mixed/Heterogeneous Systems | Implementation Requirements |
|---|---|---|---|---|
| Kerker | Highly effective; Suppresses charge-sloshing | Less effective; Can be unnecessary | Moderately effective | Fourier transforms; Parameter γ selection |
| Elliptic | Effective with proper parameterization | Good performance | Highly effective; Handles interfaces well [9] | PDE solver; Spatial coefficient functions |
| LDOS-Based | Adapts to electron density | Adapts to electron density | Highly effective; Automatic adaptation [9] | Local density of states calculation |
| Identity (No Preconditioning) | Often diverges due to charge-sloshing | May converge slowly | Unreliable convergence | None (baseline) |

The performance of preconditioners varies significantly based on system electronic structure. Kerker preconditioning specifically targets the long-wavelength divergence (charge-sloshing) prevalent in metals by damping the small-q components of the density update. For heterogeneous systems containing metal-insulator interfaces or vacuum regions, elliptic preconditioners demonstrate superior performance by incorporating spatial variation in their coefficient functions. Recent research on low-rank dielectric preconditioners shows particular promise for large-scale real-space calculations, constructing effective approximations from Krylov subspace information without excessive computational overhead [9].

Convergence Criteria Standards Across Quantum Chemistry Packages

Table 3: Standard Convergence Thresholds in Popular Quantum Chemistry Packages

| Convergence Level | TolE (Energy) | TolMaxP (Density) | TolRMSP (Density) | TolErr (DIIS) | Recommended Use Cases |
|---|---|---|---|---|---|
| Sloppy (ORCA) [7] | 3e-5 | 1e-4 | 1e-5 | 1e-4 | Preliminary scans; Initial geometry steps |
| Medium (ORCA) [7] | 1e-6 | 1e-5 | 1e-6 | 1e-5 | Standard single-point energies |
| Tight (ORCA) [7] | 1e-8 | 1e-7 | 5e-9 | 5e-7 | Transition metal complexes; Frequency calculations [7] |
| Strong (Q-Chem) [3] | - | - | - | 1e-5 | Default for single-point calculations |
| VeryTight (ORCA) [7] | 1e-9 | 1e-8 | 1e-9 | 1e-8 | High-precision properties; Benchmarking |

Different quantum chemistry packages implement convergence criteria with varying default thresholds and available options. ORCA provides particularly fine-grained control through its convergence profiles, with TightSCF settings (TolE=1e-8, TolMaxP=1e-7) recommended for transition metal complexes due to their challenging electronic structure [7]. Q-Chem employs different convergence criteria for various calculation types, with tighter thresholds (SCF_CONVERGENCE=7) for geometry optimizations and vibrational analysis compared to single-point energy calculations (SCF_CONVERGENCE=5) [3]. The DIIS error metric itself can be measured as either the maximum or RMS error, with Q-Chem recently switching to the maximum error as a more reliable convergence indicator [3].

Experimental Protocols and Methodologies

Benchmarking SCF Algorithm Performance

Robust evaluation of SCF algorithms requires standardized testing across diverse molecular systems with varying convergence difficulties. Experimental protocols typically involve:

System Selection: Comprehensive benchmarking should include multiple system types: (1) small organic molecules with rapid convergence; (2) systems with small HOMO-LUMO gaps exhibiting charge-sloshing; (3) transition metal complexes with localized d- or f-orbitals; and (4) elongated supercells or surface models [8] [4].

Performance Metrics: Key evaluation metrics include: (1) number of SCF iterations to convergence; (2) total CPU time; (3) success rate (percentage of systems converging); (4) memory requirements; and (5) energy conservation properties [8].

Control Parameters: Experiments should test each method across a range of parameters: for fixed damping (α=0.01 to 0.5), for DIIS (subspace size=5 to 25), and for preconditioners (varying internal parameters like γ in Kerker mixing) [4].

The adaptive damping algorithm developed by Herbst et al. proceeds as follows: at SCF step \( n \), given a trial potential \( V_n \), the algorithm computes a search direction \( \delta V_n \) through preconditioned mixing, then performs a line search to find the step \( \alpha_n \) that minimizes an energy model, and updates the potential as \( V_{n+1} = V_n + \alpha_n\, \delta V_n \) [8].
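The step-size selection can be sketched on a toy problem. The code below is not the implementation of [8]: it uses a quadratic model energy with a known gradient in place of a Kohn-Sham energy, an optional `precond` callable in place of preconditioned mixing, and an extra trial energy evaluation to fit the quadratic model along the search direction. All names and values are assumptions made for illustration.

```python
import numpy as np

def adaptive_damping_minimize(energy, gradient, V0, precond=None,
                              alpha_trial=1.0, tol=1e-6, max_iter=200):
    """Descend along dV with a step from a quadratic model of E(V + alpha * dV)."""
    V = V0.copy()
    energies = [energy(V)]
    for _ in range(max_iter):
        g = gradient(V)
        if np.linalg.norm(g) < tol:
            break
        dV = -g if precond is None else -precond(g)   # search direction
        slope = g @ dV                                # dE/dalpha at alpha = 0
        e0 = energies[-1]
        e_trial = energy(V + alpha_trial * dV)
        # Fit E(alpha) ~ e0 + slope * alpha + 0.5 * curvature * alpha^2.
        curvature = 2.0 * (e_trial - e0 - slope * alpha_trial) / alpha_trial**2
        alpha = -slope / curvature if curvature > 0 else alpha_trial
        # Backtrack if the model step fails to lower the energy.
        while energy(V + alpha * dV) > e0 and alpha > 1e-12:
            alpha *= 0.5
        V = V + alpha * dV
        energies.append(energy(V))
    return V, energies

# Toy quadratic energy E(V) = 0.5 * V^T A V - b^T V (assumed stand-in).
A = np.diag([1.0, 2.0, 3.0, 4.0])
b = np.ones(4)
energy = lambda V: 0.5 * V @ A @ V - b @ V
gradient = lambda V: A @ V - b

V_min, energies = adaptive_damping_minimize(energy, gradient, np.zeros(4))
print("steps:", len(energies) - 1, " final energy:", energies[-1])
```

Because each accepted step is checked against the previous energy, the recorded energies never increase, which is the property that distinguishes this family of methods from fixed damping.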

Testing Convergence Criteria Reliability

Methodologies for evaluating convergence criteria focus on determining the relationship between threshold values and result accuracy:

Reference Calculations: Ultra-tight convergence (e.g., ExtremeSCF in ORCA with TolE=1e-14) provides benchmark values for energy and properties [7].

Property Sensitivity Analysis: Testing how molecular properties (dipole moments, population analysis, vibrational frequencies) vary with convergence thresholds identifies appropriate criteria for different application types [7].

Integral Accuracy Compatibility: Ensuring that integral evaluation thresholds (Thresh, TCut) are compatible with SCF convergence criteria, as direct SCF cannot converge if integral errors exceed the convergence tolerance [7].

Visualization of SCF Methodologies

SCF Algorithm Decision Framework

This decision framework illustrates the systematic selection of SCF algorithms based on system type. Small organic molecules typically converge well with standard DIIS and medium convergence criteria, while challenging systems like transition metal complexes require more robust algorithms like Geometric Direct Minimization (GDM) or adaptive damping with tighter convergence settings. Metallic systems benefit significantly from Kerker preconditioning to address charge-sloshing instabilities, whereas mixed heterogeneous systems require advanced approaches like elliptic preconditioning [9] [4] [7].

Adaptive Damping Algorithm Workflow

The adaptive damping workflow demonstrates the automatic step size selection process that occurs each SCF iteration. After computing the standard search direction through preconditioned mixing, the algorithm constructs a simple quadratic model of the energy as a function of the damping parameter. By minimizing this model energy, it determines the optimal step size αₙ before updating the potential. This energy-based approach ensures monotonic convergence and eliminates the need for manual damping parameter selection [8].

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Computational Components for SCF Methodology Research

| Component | Function | Implementation Examples |
|---|---|---|
| DIIS Algorithm | Extrapolation method using previous steps to accelerate convergence | Standard in Q-Chem, ORCA [3] |
| Geometric Direct Minimization (GDM) | Orbital optimization with correct geometric structure | Fallback option in Q-Chem; Default for restricted open-shell SCF [3] |
| Kerker Preconditioner | Suppresses charge-sloshing in metals | Available in plane-wave codes; Real-space implementations [9] |
| Elliptic Preconditioner | Handles heterogeneous systems | Advanced electronic structure codes [9] |
| Adaptive Damping | Automatic step size control | Research implementation [8] |
| Convergence Criteria Sets | Predefined accuracy levels | Sloppy to Extreme in ORCA [7] |
| SCF Stability Analysis | Verifies solution is true minimum | ORCA stability package [7] |

This toolkit comprises the essential algorithmic components for implementing and researching advanced SCF methodologies. DIIS (Direct Inversion in the Iterative Subspace) remains the most widely used acceleration technique, employing a least-squares minimization of error vectors from previous iterations to extrapolate toward the solution [3]. Geometric Direct Minimization provides a robust alternative that properly accounts for the non-Euclidean geometry of orbital rotation space, making it particularly valuable for restricted open-shell and difficult convergence cases [3]. Modern preconditioners like Kerker and elliptic methods address specific physical instabilities, while adaptive damping algorithms represent the cutting edge in automated parameter selection. Convergence criteria sets packaged as standardized profiles (e.g., TightSCF, VeryTightSCF) provide practical workflows for different accuracy requirements [7].
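The least-squares step at the heart of DIIS can be written in a few lines. The sketch below solves for coefficients that minimize the norm of the combined error vector subject to the coefficients summing to one, using the usual Lagrange-multiplier linear system. Function names are hypothetical, and the safeguards production codes apply when the system becomes ill-conditioned are omitted.

```python
import numpy as np

def diis_coefficients(error_vectors):
    """Minimize ||sum_i c_i e_i|| subject to sum_i c_i = 1."""
    m = len(error_vectors)
    E = np.array([np.ravel(e) for e in error_vectors], dtype=float)
    system = np.zeros((m + 1, m + 1))
    system[:m, :m] = E @ E.T     # B_ij = <e_i, e_j>
    system[:m, m] = 1.0          # Lagrange multiplier column
    system[m, :m] = 1.0          # normalization constraint row
    rhs = np.zeros(m + 1)
    rhs[m] = 1.0
    return np.linalg.solve(system, rhs)[:m]

def diis_extrapolate(trial_matrices, error_vectors):
    """Combine stored Fock (or density) matrices with the DIIS coefficients."""
    coeffs = diis_coefficients(error_vectors)
    return sum(c * F for c, F in zip(coeffs, trial_matrices))

# Two stored iterations whose errors point in opposite directions.
errors = [np.array([2.0, 0.0]), np.array([-1.0, 0.0])]
fock_history = [np.array([[1.0, 0.0], [0.0, 1.0]]),
                np.array([[4.0, 0.0], [0.0, 4.0]])]

coeffs = diis_coefficients(errors)
F_next = diis_extrapolate(fock_history, errors)
print("coefficients:", coeffs)
```

In this example the weights 1/3 and 2/3 cancel the two error vectors exactly, so the extrapolated matrix lies between the stored ones.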

The systematic comparison of damping parameters, preconditioners, and convergence criteria reveals a complex performance landscape where optimal SCF algorithm selection depends critically on system-specific electronic structure properties. Fixed damping schemes with DIIS acceleration remain effective for well-behaved molecular systems, but challenging cases involving transition metals, metallic systems, or heterogeneous materials require more sophisticated approaches. Adaptive damping algorithms demonstrate significant advantages in robustness and automation, eliminating manual parameter tuning while ensuring monotonic energy convergence. Preconditioner selection follows a similar pattern, with system-specific choices (Kerker for metals, elliptic for heterogeneous systems) dramatically improving convergence behavior. Convergence criteria must be selected with consideration for both computational efficiency and required accuracy, with tighter thresholds necessary for property calculations and vibrational analysis. Future research directions include increased algorithm automation, improved preconditioners for complex materials, and enhanced integration of these components into black-box computational workflows suitable for high-throughput screening in drug development and materials discovery.

The Mathematical Basis of SCF Iterations and Potential Mixing

Self-consistent field (SCF) methods form the computational backbone for solving electronic structure problems within Hartree-Fock theory and Kohn-Sham density functional theory (DFT). These methods enable the determination of molecular orbitals by solving the nonlinear Schrödinger equation through an iterative process where the Hamiltonian depends on its own eigenfunctions [10]. The fundamental challenge resides in the recursive nature of the calculation: the Hamiltonian (Fock matrix) is built from the electron density, which itself is derived from the molecular orbitals that are eigenvectors of the Hamiltonian. This interdependence necessitates an iterative solution until self-consistency is achieved, where the input and output densities converge [10].

The SCF procedure is mathematically formalized through the Roothaan-Hall equation, F C = S C E, where F is the Fock matrix, C is the matrix of molecular orbital coefficients, S is the atomic orbital overlap matrix, and E is a diagonal matrix of orbital eigenenergies [10]. The Fock matrix itself is composed of several components: F = T + V + J + K, representing the kinetic energy, external potential, Coulomb, and exchange matrices, respectively [10]. The convergence behavior and efficiency of solving this equation are critically dependent on the algorithms used for density mixing and convergence acceleration, which form the primary focus of this comparative analysis.

Fundamental SCF Cycle and Convergence Challenges

The SCF cycle follows a systematic iterative procedure that begins with an initial guess and progresses toward a converged solution. The algorithm can be visualized as follows:

SCF Iteration Cycle Flowchart

This workflow illustrates the core SCF process implemented in quantum chemistry codes like PySCF, ADF, Gaussian, and SIESTA [10] [4] [11]. The cycle begins with an initial guess for the electron density or density matrix, typically constructed via superposition of atomic densities (minao), parameter-free Hückel theory, or atomic potentials (vsap) [10]. The Fock matrix is then constructed using this density, and the Kohn-Sham equations are solved to obtain new molecular orbitals and a resulting output density. The convergence is checked by comparing the input and output densities (or Hamiltonian matrices), with the process terminating when their difference falls below a predetermined tolerance [11].
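The loop described above can be sketched on a toy problem. The following is a minimal illustration, not code from PySCF, ADF, Gaussian, or SIESTA: the two-site model, the function names, and the parameters `u` and `t` are assumptions chosen so that the "diagonalization" step has a closed form.

```python
import math

def output_density(n_in, u=1.0, t=1.0):
    """Toy 'build Hamiltonian and diagonalize' step for a two-site model.

    n_in is the input occupation of site 1. The 2x2 Hamiltonian has on-site
    energies u*n_in and u*(1 - n_in) and hopping -t; the return value is the
    ground-state occupation of site 1 (closed form for a 2x2 matrix).
    """
    delta = u * (n_in - 0.5)  # half the on-site energy difference
    return 0.5 * (1.0 - delta / math.hypot(delta, t))

def run_scf(n_guess, tol=1e-8, max_iter=200, u=1.0, t=1.0):
    """Plain SCF loop: iterate until |n_out - n_in| falls below tol."""
    n = n_guess
    for iteration in range(1, max_iter + 1):
        n_out = output_density(n, u, t)
        if abs(n_out - n) < tol:  # convergence check on the density
            return n_out, iteration, True
        n = n_out  # feed the output density back in (no mixing)
    return n, max_iter, False

density, n_iter, converged = run_scf(n_guess=0.9)
print(f"converged={converged} after {n_iter} iterations, n={density:.6f}")
```

With the weak interaction assumed here (`u = t`), the unmixed loop settles on the symmetric solution; stronger interactions are where the mixing schemes discussed next become necessary.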

Several common challenges can disrupt SCF convergence. Systems with small HOMO-LUMO gaps, such as metals or conjugated molecules, often exhibit oscillatory behavior during iterations [4]. Open-shell systems with localized d- or f-elements, as well as transition state structures with dissociating bonds, present additional difficulties due to nearly degenerate electronic configurations [4]. Convergence problems may also stem from non-physical initial conditions, including improper bond lengths, incorrect spin multiplicity, or inappropriate basis sets [4].

Mathematical Framework of Mixing Algorithms

Linear and Damping Methods

Damping represents the simplest SCF acceleration technique, historically employed by Hartree in early atomic structure calculations [12]. This approach stabilizes the SCF process by linearly mixing density (or Fock) matrices from consecutive iterations according to the formula:

\[ P_n^{\mathrm{damped}} = (1 - \alpha) P_n + \alpha P_{n-1} \]

where α represents the mixing factor (0 ≤ α ≤ 1) [12]. The Q-Chem implementation utilizes this approach through its DAMP, DP_DIIS, and DP_GDM algorithms, where the mixing coefficient is specified indirectly via the NDAMP parameter (α = NDAMP/100) [12]. Damping is particularly effective during initial SCF iterations when density matrix fluctuations are most pronounced, but it typically slows convergence in later stages once the electronic structure has stabilized [12].
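To make the effect of the damping factor concrete, the sketch below applies the mixing formula to a toy two-site model whose undamped iteration oscillates and fails to converge. The model, the function names, and the values of `u` and `t` are assumptions for illustration; only the mapping α = NDAMP/100 comes from the text, and this is not Q-Chem code.

```python
import math

def output_density(n_in, u=4.0, t=1.0):
    """Ground-state occupation of site 1 for a toy two-site mean-field model."""
    delta = u * (n_in - 0.5)
    return 0.5 * (1.0 - delta / math.hypot(delta, t))

def damped_scf(ndamp, n_guess=0.6, tol=1e-8, max_iter=500):
    """Iterate with P_damped = (1 - alpha) * P_n + alpha * P_{n-1}."""
    alpha = ndamp / 100.0  # mapping described in the text: alpha = NDAMP/100
    n_prev = n_guess
    for iteration in range(1, max_iter + 1):
        n_new = output_density(n_prev)
        if abs(n_new - n_prev) < tol:
            return iteration, True
        # Mix the new density with the previous one before the next cycle.
        n_prev = (1.0 - alpha) * n_new + alpha * n_prev
    return max_iter, False

for ndamp in (0, 50, 75, 90):
    iterations, ok = damped_scf(ndamp)
    print(f"NDAMP={ndamp:2d}: converged={ok}, iterations={iterations}")
```

In this toy case the undamped run never converges, moderate damping converges quickly, and very heavy damping converges but needs more iterations, which mirrors the trade-off described above.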

DIIS (Pulay) Method

The Direct Inversion in the Iterative Subspace (DIIS) method, also known as Pulay mixing, represents a significant advancement over simple damping [10] [11]. Rather than using only the previous iteration, DIIS constructs an optimized extrapolation of the Fock matrix by minimizing the norm of the residual vector [F, PS] using information from multiple previous iterations [10]. The mathematical formulation involves finding optimal coefficients c_i for the linear combination:

\[ F_{\mathrm{DIIS}} = \sum_i c_i F_i \]

subject to the constraint Σ c_i = 1, by minimizing ⟨ΔR|ΔR⟩ where ΔR = [F, PS] [4]. Key adjustable parameters in DIIS implementations include:

- N: The number of DIIS expansion vectors (default typically 10), where higher values (e.g., 25) enhance stability while lower values produce more aggressive convergence [4]

- Cyc: The number of initial SCF iterations before DIIS commences (default typically 5), allowing initial equilibration through simpler mixing [4]

- Mixing: The fraction of computed Fock matrix used in constructing the next guess (default typically 0.2), with lower values (e.g., 0.015) improving stability for problematic cases [4]
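The constrained minimization behind DIIS can be sketched on a generic vector fixed-point problem. This is an illustration under stated assumptions, not the ADF or PySCF implementation: the residual is taken as g(x) − x instead of [F, PS], the stored trial vectors g(x_i) play the role of the Fock matrices F_i, the linear test map `g` is invented, and only the history length (`n_vectors`, corresponding to N) is exposed.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x

def diis_fixed_point(g, x0, n_vectors=3, tol=1e-10, max_iter=50):
    """Solve x = g(x) by extrapolating over the last n_vectors iterations."""
    trials, residuals = [], []
    x = list(x0)
    for iteration in range(1, max_iter + 1):
        gx = g(x)
        res = [a - b for a, b in zip(gx, x)]
        if dot(res, res) ** 0.5 < tol:
            return x, iteration, True
        trials.append(gx)
        residuals.append(res)
        trials, residuals = trials[-n_vectors:], residuals[-n_vectors:]
        m = len(residuals)
        # Lagrange system [[B, 1], [1^T, 0]] [c, lam] = [0, 1] with
        # B_ij = <R_i|R_j>; it enforces sum(c) = 1 while minimizing the
        # norm of the combined residual.
        aug = [[dot(residuals[i], residuals[j]) for j in range(m)] + [1.0]
               for i in range(m)]
        aug.append([1.0] * m + [0.0])
        coeffs = solve_linear(aug, [0.0] * m + [1.0])[:m]
        x = [sum(coeffs[i] * trials[i][k] for i in range(m))
             for k in range(len(x))]
    return x, max_iter, False

def g(x):
    """Invented linear map with fixed point (1, 2); plain iteration diverges."""
    return [-1.2 * x[0] + 0.3 * x[1] + 1.6, 0.2 * x[0] + 0.5 * x[1] + 0.8]

x_diis, it_diis, ok_diis = diis_fixed_point(g, [0.0, 0.0], n_vectors=3)
x_plain, it_plain, ok_plain = diis_fixed_point(g, [0.0, 0.0], n_vectors=1)
print(f"DIIS:  converged={ok_diis} in {it_diis} iterations, x={x_diis}")
print(f"plain: converged={ok_plain} after {it_plain} iterations")
```

With a single history vector the scheme reduces to plain iteration, which diverges for this map, while a three-vector history recovers the fixed point in a handful of steps. Production codes add safeguards for ill-conditioned B matrices that this sketch omits.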

Broyden Methods

Broyden's method is a quasi-Newton approach that builds an approximation to the Jacobian of the nonlinear SCF problem (or, in some variants, to its inverse) from information gathered in previous iterations [13]. Unlike DIIS, which minimizes the residual norm directly, Broyden methods apply a secant update to this approximation at every step [13]. In the form that updates the Jacobian itself, the update formula reads:

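\[ J^{(m+1)} = J^{(m)} + \frac{\left( |\Delta R^{(m)}\rangle - J^{(m)} |\Delta x^{(m)}\rangle \right) \langle \Delta x^{(m)}|}{\langle \Delta x^{(m)} | \Delta x^{(m)} \rangle} \]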

where Δx represents the change in the input vector (density) and ΔR represents the change in the residual [13]. Multiple variants exist, including:

- Johnson's algorithm (BEDM2): Utilizes multiple-step recursive equations with weighted minimization of both the initial Jacobian guess and subsequent updates [13]

- Eyert's algorithm (BEDM1): Employs a simpler formulation without recursion, potentially requiring fewer total iterations [13]

Experimental comparisons using silicon atom electronic structure calculations demonstrated that Eyert's BEDM1 algorithm achieved convergence with fewer iterations than Johnson's BEDM2 approach [13].
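The secant update can be illustrated with a small two-dimensional example. This is a sketch of the basic rank-one Broyden update only; it is not Johnson's or Eyert's algorithm from [13], and the test residual, the `weight` parameter, and the initial Jacobian guess of −I/weight (which makes the first step equal to simple mixing) are assumptions for illustration.

```python
def broyden_solve(residual, x0, weight=1.0, tol=1e-10, max_iter=50):
    """Find x with residual(x) = 0 in two dimensions using Broyden updates."""
    x = list(x0)
    res = residual(x)
    # Initial Jacobian guess -I/weight: the first step is x + weight * R(x).
    jac = [[-1.0 / weight, 0.0], [0.0, -1.0 / weight]]
    for iteration in range(1, max_iter + 1):
        if (res[0] ** 2 + res[1] ** 2) ** 0.5 < tol:
            return x, iteration, True
        # Quasi-Newton step: solve J dx = -R by Cramer's rule (2x2 only).
        det = jac[0][0] * jac[1][1] - jac[0][1] * jac[1][0]
        rhs = [-res[0], -res[1]]
        dx = [(rhs[0] * jac[1][1] - jac[0][1] * rhs[1]) / det,
              (jac[0][0] * rhs[1] - jac[1][0] * rhs[0]) / det]
        x = [x[0] + dx[0], x[1] + dx[1]]
        new_res = residual(x)
        d_res = [new_res[0] - res[0], new_res[1] - res[1]]
        j_dx = [jac[0][0] * dx[0] + jac[0][1] * dx[1],
                jac[1][0] * dx[0] + jac[1][1] * dx[1]]
        norm2 = dx[0] ** 2 + dx[1] ** 2
        # Rank-one update: J += (dR - J dx) dx^T / (dx^T dx).
        for i in range(2):
            for k in range(2):
                jac[i][k] += (d_res[i] - j_dx[i]) * dx[k] / norm2
        res = new_res
    return x, max_iter, False

def toy_residual(x):
    """Invented residual R(x) = g(x) - x with root (1, 2)."""
    return [-2.2 * x[0] + 0.3 * x[1] + 1.6, 0.2 * x[0] - 0.5 * x[1] + 0.8]

x_b, it_b, ok_b = broyden_solve(toy_residual, [0.0, 0.0])
print(f"Broyden: converged={ok_b} in {it_b} iterations, x={x_b}")
```

Practical SCF implementations avoid storing the full Jacobian and instead keep a limited history of Δx and ΔR vectors, which is where the history-length parameter discussed later enters.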

Comparative Analysis of Mixing Algorithms

Performance Across Chemical Systems

Table 1: Algorithm Performance Across System Types

| System Type | Recommended Algorithm | Key Parameters | Typical Iteration Count |
|---|---|---|---|
| Small molecules, insulators | DIIS/Pulay (default) | Mixing=0.2, History=5-10 | 10-20 [11] |
| Metallic systems | Broyden | Weight=0.1-0.3, History=5-10 | 15-30 [11] |
| Open-shell transition metals | DIIS with damping | N=25, Cyc=30, Mixing=0.015 [4] | 30-50+ [4] |
| Small HOMO-LUMO gap systems | Level shifting + DIIS | LevelShift=0.1-0.5 [10] | 20-40 [10] |
| f-element systems | Mixed algorithm | nmix=10, ca=0.05 [14] | 20-40 [14] |

The performance of mixing algorithms exhibits significant dependence on the electronic structure of the system under investigation. For well-behaved molecular systems with substantial HOMO-LUMO gaps, DIIS/Pulay mixing typically provides the most rapid convergence, making it the default in codes like PySCF and SIESTA [10] [11]. Metallic systems with near-degenerate frontier orbitals often benefit from Broyden's method, which can better handle the delicate convergence requirements of these systems [11]. For particularly challenging cases such as localized f-element compounds, FEFF's SCF implementation recommends an initial period of simple mixing before activating Broyden's method (nmix=10, ca=0.05) [14].

Parameter Optimization Studies

Table 2: Optimal Parameter Values for SCF Algorithms

| Algorithm | Parameter | Default Value | Optimal Range | Effect of Increasing |
|---|---|---|---|---|
| DIIS | N (expansion vectors) | 10 [4] | 15-25 (difficult cases) [4] | Enhanced stability |
| DIIS | Mixing factor | 0.2 [4] | 0.015-0.1 (difficult) [4] | Faster but less stable; lower values are slower but more stable |
| DIIS | Cycle start (Cyc) | 5 [4] | 20-30 (difficult) [4] | More initial equilibration |
| Broyden | History steps | 2 [11] | 5-10 [13] | Better convergence, more memory |
| Broyden | Mixing weight | 0.25 [11] | 0.1-0.4 [11] | Higher = more aggressive |
| Damping | NDAMP | 75 [12] | 50-90 [12] | Higher = stronger damping |
| Damping | MAX_DP_CYCLES | 3 [12] | 5-20 (problematic) [12] | More iterations with damping |

Experimental optimization of SCF parameters reveals critical trade-offs between stability and convergence speed. For the DIIS algorithm, increasing the number of expansion vectors (N=25) and decreasing the mixing factor (Mixing=0.015) significantly enhances stability for problematic systems at the cost of slower convergence [4]. Similarly, delaying the onset of DIIS through higher Cyc values (e.g., 30) allows the system to approach self-consistency through simpler mixing before applying aggressive acceleration [4].

In Broyden methods, increasing the history length generally improves convergence but requires additional memory resources [13]. Comparative studies between Johnson's and Eyert's Broyden implementations for silicon atom calculations demonstrated that Eyert's algorithm (BEDM1) required fewer total iterations, though the implementation details significantly impacted overall computational efficiency [13].

Experimental Protocols and Methodologies

Benchmarking SCF Algorithm Performance

Systematic comparison of SCF mixing algorithms requires carefully controlled computational experiments. The following protocol, adapted from multiple sources, provides a robust methodology for evaluating algorithm performance:

System Selection: Choose diverse test systems representing different electronic structure challenges.

Initialization: Employ consistent initial guess strategies across all tests, with minao (minimal basis superposition) or atom (superposition of atomic densities) typically providing the most balanced starting point [10].

Convergence Criteria: Utilize standardized tolerance values across all tests.

Performance Metrics: Track multiple convergence indicators:

- Total SCF iteration count

- Computational time per iteration

- Convergence trajectory (monotonic vs. oscillatory)

- Final energy accuracy relative to reference values

This methodology enables direct comparison between mixing algorithms while controlling for system-specific factors that might influence convergence behavior.
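The metrics listed above can be collected with a small harness. The sketch below is a hypothetical illustration: `toy_scf` is an invented damped two-site model standing in for a real SCF run, the converged density replaces the final energy as the quantity compared against a reference, and the rule "any sign change in the residual means oscillatory" is a simplifying assumption.

```python
import math
import time

def toy_scf(alpha, u=4.0, t=1.0, n0=0.6, tol=1e-8, max_iter=200):
    """Damped two-site toy SCF; returns (final density, signed residuals)."""
    n, residuals = n0, []
    for _ in range(max_iter):
        delta = u * (n - 0.5)
        n_out = 0.5 * (1.0 - delta / math.hypot(delta, t))
        residuals.append(n_out - n)
        if abs(residuals[-1]) < tol:
            break
        n = (1.0 - alpha) * n_out + alpha * n
    return n, residuals

def classify_trajectory(residuals):
    """'oscillatory' if the signed residual ever changes sign, else 'monotonic'."""
    flips = sum(1 for a, b in zip(residuals, residuals[1:]) if a * b < 0)
    return "oscillatory" if flips > 0 else "monotonic"

def benchmark(alpha, reference=0.5):
    """Collect iteration count, timing, trajectory type, and final error."""
    start = time.perf_counter()
    value, residuals = toy_scf(alpha)
    elapsed = time.perf_counter() - start
    return {
        "alpha": alpha,
        "iterations": len(residuals),
        "seconds_per_iteration": elapsed / len(residuals),
        "trajectory": classify_trajectory(residuals),
        "error_vs_reference": abs(value - reference),
    }

for alpha in (0.5, 0.75):
    print(benchmark(alpha))
```

In a real study, `toy_scf` would be replaced by a call into the electronic structure code under test, with the residual history parsed from its output.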

Protocol for Parameter Optimization

Determining optimal mixing parameters for specific chemical systems follows an iterative procedure:

Parameter Optimization Workflow

For oscillatory convergence, decrease mixing weights (e.g., Mixing=0.015-0.09) or increase damping factors [4]. For slow but monotonic convergence, increase mixing weights (e.g., SCF.Mixer.Weight=0.3-0.8) or employ more aggressive DIIS settings [11]. For true divergence, implement stronger damping (NDAMP=50-90) or level shifting (LevelShift=0.1-0.5), particularly in the early SCF cycles [12] [10].
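These rules of thumb can be encoded as a simple diagnostic on the residual-norm history. The function below is a hypothetical helper: the classification thresholds (including `slow_ratio`) are assumptions rather than values from [4], [10], [11], or [12], and the returned strings only point to the kind of adjustment the text recommends.

```python
def suggest_adjustment(residuals, slow_ratio=0.9):
    """Map a history of residual norms to one of the adjustments in the text."""
    if len(residuals) < 3 or residuals[0] == 0.0:
        return "keep settings"
    rises = sum(1 for a, b in zip(residuals, residuals[1:]) if b > a)
    if residuals[-1] > residuals[0]:
        # Net growth of the residual: treat as true divergence.
        return "diverging: apply stronger damping or level shifting"
    if rises > 0:
        # Residual goes up and down without net growth.
        return "oscillating: decrease mixing weight"
    # Monotonic decrease: check the geometric-mean reduction per iteration.
    mean_ratio = (residuals[-1] / residuals[0]) ** (1.0 / (len(residuals) - 1))
    if mean_ratio > slow_ratio:
        return "slow: increase mixing weight"
    return "keep settings"

print(suggest_adjustment([1.0, 0.5, 0.8, 0.3, 0.6, 0.2]))
print(suggest_adjustment([1.0, 0.98, 0.96, 0.95]))
print(suggest_adjustment([0.1, 0.5, 2.0]))
```

A helper of this kind could sit between SCF restarts in an automated workflow, although the thresholds would need calibration against the specific code and system class.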

Table 3: Research Reagent Solutions for SCF Methodology

| Tool/Software | Primary Mixing Algorithms | Specialized Features | Typical Applications |
|---|---|---|---|
| PySCF [10] | DIIS, SOSCF, Damping, Level shifting | Second-order SCF (SOSCF), stability analysis | Molecular systems, Python integration |
| ADF [4] | DIIS, MESA, LISTi, EDIIS, ARH | Advanced algorithms for difficult cases | Transition metals, open-shell systems |
| Q-Chem [12] | DIIS, GDM, Damping, DP_DIIS | Combined damping-DIIS algorithms | Organic molecules, spectroscopy |
| SIESTA [11] | Pulay, Broyden, Linear | Hamiltonian or density mixing | Periodic systems, metallic clusters |
| FEFF [14] | Broyden, Simple mixing | f-element convergence protocols | X-ray spectroscopy, solids |
| Gaussian [15] | GEDIIS, DIIS | Composite methods, solvent models | Organic chemistry, drug design |

The selection of computational tools significantly influences the available mixing algorithms and their implementation details. PySCF offers a flexible Python environment with comprehensive SCF controls, including the unique second-order SCF (SOSCF) solver for quadratic convergence [10]. ADF provides specialized algorithms like MESA and LISTi specifically designed for challenging systems such as transition metal complexes [4]. SIESTA enables switching between density matrix and Hamiltonian mixing, with Broyden often outperforming for metallic and magnetic systems [11].

The mathematical foundation of SCF iterations encompasses diverse mixing algorithms, each with distinct strengths across various chemical systems. DIIS/Pulay methods generally provide optimal performance for well-behaved molecular systems, while Broyden techniques excel for metallic and magnetic materials. Challenging cases involving f-elements or open-shell transition metals often require specialized protocols combining initial damping with advanced acceleration. This systematic comparison reveals that parameter optimization remains system-dependent, with stability generally favored over aggressive convergence in production calculations. Future methodology development will likely focus on adaptive algorithms that automatically adjust mixing parameters based on real-time convergence behavior, further reducing the need for researcher intervention in SCF calculations.

High-Throughput Screening (HTS) stands as a foundational pillar in contemporary drug discovery, enabling researchers to rapidly test hundreds of thousands of chemical compounds against biological targets. However, the immense data generated by these screens presents significant computational challenges that can only be addressed through robust and automated Self-Consistent Field (SCF) algorithms. SCF theory provides a powerful framework for predicting the equilibrium morphology of polymeric systems by iteratively computing space-dependent polymer density and associated potential fields from chain statistics propagators until self-consistent conditions are satisfied. In the context of HTS data analysis, SCF methods enable researchers to navigate complex data landscapes characterized by technical variations, positional effects, and assay artifacts that frequently lead to false positives and negatives in drug repositioning efforts.

The pressing need for advanced SCF methodologies emerges from the growing complexity of modern screening initiatives. As noted in research on computational drug repositioning, publicly available HTS data from resources like PubChem Bioassay and ChemBank contain inherent variability stemming from both technological sources (batch, plate, and positional effects) and biological factors (non-selective binders) [16]. These challenges are further compounded by the trend toward ultra-HTS (uHTS) assay miniaturization, where submicroliter fluid handling introduces additional complexity in data standardization and interpretation [17]. Within this landscape, robust SCF algorithms become indispensable for extracting meaningful biological signals from increasingly noisy and complex screening data, ultimately accelerating the discovery of novel therapeutics for conditions ranging from cancer to neurodegenerative diseases.

Key Challenges in HTS Data Analysis

Technical and Biological Variability

The analysis of HTS data confronts multiple sources of variability that can compromise screening outcomes if not properly addressed. Technical artifacts represent a fundamental challenge, with batch effects, plate positioning (row or column biases), and day-to-day operational variations introducing significant noise into screening results [16]. Research examining the PubChem CDC25B dataset (AID 368) revealed substantial variation in z'-factors—a common measure of assay quality—across different run dates, with compounds screened in March 2006 showing markedly lower z'-factors than those run in August and September of the same year [16]. Such technical variability can profoundly impact activity scores and outcomes, potentially leading to both false positive and false negative results in drug repositioning efforts.

Beyond technical considerations, biological complexity introduces additional challenges for HTS data analysis. The presence of non-selective binders, compound aggregation, and target heterogeneity can all confound screening results [16] [18]. This biological noise is particularly problematic in phenotypic screening approaches, which aim to produce disease-relevant phenotypes but often struggle to distinguish true targets from off-target effects. As noted in research on cancer therapeutics screening, "there remains a pressing need for more relevant phenotypic assays" that can effectively highlight targets rather than off-targets [18]. These technical and biological challenges collectively underscore the necessity for sophisticated computational approaches like SCF that can normalize data and extract meaningful patterns from inherently noisy HTS datasets.

Data Completeness and Metadata Limitations

The utility of publicly available HTS data for computational repositioning depends critically on data completeness and the availability of comprehensive metadata. Significant differences exist between major screening repositories in terms of the annotation provided with screening results. For instance, while the Broad ChemBank database includes raw datasets with batch, plate, row, and column annotations for each screened compound along with replicate fluorescence readings, the PubChem Bioassay database typically lacks these critical metadata elements [16]. The PubChem CDC25B dataset, as originally available through the public database, contained no information on batch, plate, or within-plate position for each screened compound, making it impossible to investigate or correct for important technical sources of variation.

This metadata gap presents a substantial barrier to effective secondary analysis of HTS data. Without plate-level annotation, researchers cannot conduct essential quality control assessments, evaluate positional effects, or implement appropriate normalization strategies to address technical variability [16]. Even when data is available, differences in assay protocols, measurement techniques, and activity thresholds across screening centers complicate comparative analyses and data integration. These limitations highlight the critical need for standardized data reporting practices in HTS research and robust computational methods capable of handling heterogeneous and incomplete datasets in drug discovery workflows.

SCF Theory and Algorithmic Advancements

Fundamental SCF Equations for Polymeric Systems

Self-Consistent Field theory provides a mathematical framework for modeling the behavior of inhomogeneous polymeric systems through a set of self-consistent equations that describe the interaction between polymer density distributions and potential fields. For diblock copolymer films, the free energy in the grand canonical ensemble takes the form:

\[ \frac{F}{k_B T} = -e^{\mu} Q + \rho_c \int dr \left[ \chi N \phi_A(r) \phi_B(r) + \frac{1}{2} \kappa N \left( \phi_A(r) + \phi_B(r) - 1 \right)^2 \right] - \rho_c \int dr \left[ \omega_A(r) \phi_A(r) + \omega_B(r) \phi_B(r) \right] + \rho_c \int dr \frac{H(r)}{N} \left[ \Lambda_A \phi_A(r) + \Lambda_B \phi_B(r) \right] \]

where \(Q\) represents the partition function of a single copolymer chain in the mean field, \(\phi_A(r)\) and \(\phi_B(r)\) are local concentrations of A and B segments, \(\chi\) is the Flory-Huggins parameter quantifying segment incompatibility, and \(\kappa\) is the inverse isothermal compressibility that enforces incompressibility [19]. The fields experienced by A and B segments are given by:

\[ \frac{\omega_A(r)}{N} = \chi \phi_B(r) + \kappa \left( \phi_A(r) + \phi_B(r) - 1 \right) + \Lambda_A H(r) \]

\[ \frac{\omega_B(r)}{N} = \chi \phi_A(r) + \kappa \left( \phi_A(r) + \phi_B(r) - 1 \right) + \Lambda_B H(r) \]

These equations are solved iteratively with the chain propagators \(q(r,s)\) and \(q^\dagger(r,1-s)\), which satisfy the modified diffusion equation:

\[ \frac{\partial q(r,s)}{\partial s} = \Delta q(r,s) - \omega(r) q(r,s) \]

with initial conditions \(q(r,0) = 1\) and \(q^\dagger(r,1) = 1\) [19]. The local segment concentrations are then calculated from these propagators, closing the self-consistent loop.
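The modified diffusion equation can be integrated numerically in a few lines. The sketch below is a minimal one-dimensional illustration, assuming an explicit Euler step along the contour, a uniform finite-difference grid, and reflecting walls; it is not the adaptive scheme of [19], and the grid size, step size, and cosine test field are arbitrary choices.

```python
import math

def propagate(omega, dx, ds, n_steps):
    """Explicit Euler for dq/ds = d2q/dx2 - omega(x) * q with q(x, 0) = 1."""
    nx = len(omega)
    q = [1.0] * nx
    for _ in range(n_steps):
        new_q = []
        for i in range(nx):
            # Mirror ghost points implement reflecting (zero-flux) walls.
            left = q[i - 1] if i > 0 else q[1]
            right = q[i + 1] if i < nx - 1 else q[nx - 2]
            lap = (left - 2.0 * q[i] + right) / dx ** 2
            new_q.append(q[i] + ds * (lap - omega[i] * q[i]))
        q = new_q
    return q

length, nx, ds = 4.0, 32, 1e-3
dx = length / (nx - 1)
n_steps = 1000  # contour variable runs from s = 0 to s = 1

# Check against the exact solution q = exp(-omega * s) for a uniform field.
uniform = propagate([1.0] * nx, dx, ds, n_steps)
print(f"uniform field: q = {uniform[0]:.4f}, exp(-1) = {math.exp(-1.0):.4f}")

# A spatially varying field: chains accumulate where omega is low.
field = [2.0 * math.cos(2.0 * math.pi * i * dx / length) for i in range(nx)]
q_field = propagate(field, dx, ds, n_steps)
print(f"q at wall = {q_field[0]:.4f}, q at centre = {q_field[nx // 2]:.4f}")
```

The explicit scheme is only stable for ds below roughly dx²/2, which is one reason production SCF codes prefer pseudo-spectral or implicit integrators.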

Adaptive Discretization for Enhanced Accuracy

Traditional SCF calculations face significant accuracy-efficiency trade-offs dependent on the numerical methods employed for system discretization and equation-solving. Conventional approaches using uniform finite-difference grids often struggle with sharp interfaces and boundary conditions, particularly in systems like polymer brushes where grafting ends are fixed using Dirac delta functions as initial conditions [19]. These challenges necessitate much finer contour discretization compared to free chains, demanding substantial additional computational resources.

Recent algorithmic innovations address these limitations through adaptive discretization schemes that dynamically enhance resolution in regions influenced by external forces or boundary conditions. This approach strategically increases spatial discretization where external forces are present and refines contour discretization at grafting points, achieving significantly higher accuracy with minimal additional computational cost [19]. For complex three-dimensional polymeric systems—such as block copolymer films with cylindrical or spherical morphologies or particle-grafted chain polymer brushes with angular-dependent morphologies—this adaptive method enables studies that were previously computationally prohibitive. The implementation of such advanced discretization strategies represents a crucial step toward making robust SCF calculations feasible for the complex systems encountered in HTS data analysis and materials design.

Table 1: Comparison of SCF Numerical Methods

| Method Type | Spatial Discretization | Contour Handling | Best For | Limitations |
|---|---|---|---|---|
| Spectral Methods | Fourier series expansion | No discretization needed | Periodic systems with known symmetry | Requires prior morphology knowledge |
| Pseudo-Spectral Methods | Switches between Fourier/real-space | Requires discretization | Balance of accuracy & efficiency | Limited for non-periodic boundaries |
| Real-Space Methods | Finite difference grids | Requires discretization | Complex systems, symmetry breaking | Computationally expensive in 3D |
| Adaptive Real-Space | Non-uniform finite difference | Adaptive refinement | Systems with sharp interfaces/boundaries | Implementation complexity |

Experimental Framework for SCF Efficiency Analysis

Systematic Protocol for Mixing Parameter Optimization

A rigorous experimental framework is essential for systematically evaluating SCF efficiency across different parameter configurations. The following protocol outlines a comprehensive approach for mixing parameter optimization in polymeric systems:

1. System preparation: Begin with an incompressible melt of asymmetric AB diblock copolymer molecules with degree of polymerization N, confined between two flat surfaces. The majority block A should occupy volume fraction f of each chain, with both blocks sharing statistical segment length b [19].

2. Parameter initialization: Initialize the simulation with reasonable guesses for the potential fields \(\omega_A(r)\) and \(\omega_B(r)\) based on the expected morphology and interaction parameters.

3. Propagator solution: Solve the modified diffusion equation for the chain propagators \(q(r,s)\) and \(q^\dagger(r,1-s)\) using the current field estimates, employing appropriate numerical methods (spectral, real-space, or adaptive real-space) based on system characteristics [19].

4. Density calculation: Calculate new local segment concentrations \(\phi_A(r)\) and \(\phi_B(r)\) from the propagators using the single-chain partition function Q and chemical potential μ.

5. Field update: Update the fields using the new density estimates according to the self-consistent equations, mixing old and new fields using appropriate algorithms to ensure convergence [19].

6. Convergence check: Evaluate whether the fields and densities have reached self-consistency within a specified tolerance. If not, return to step 3 with the updated fields.

7. Parameter exploration: Systematically vary mixing parameters including spatial discretization, contour discretization, iteration limits, and mixing algorithms to evaluate their impact on convergence speed and solution accuracy.

This protocol enables direct comparison of SCF algorithmic efficiency across different numerical approaches and parameter spaces, providing quantitative metrics for optimization.

Performance Metrics and Evaluation Criteria

The assessment of SCF algorithm performance requires multiple quantitative metrics that capture both computational efficiency and solution accuracy:

Convergence rate: Measure the number of iterations required to reach self-consistency, defined as the point where the mean absolute change in fields between iterations falls below a predetermined threshold (e.g., \(10^{-6}\)).

Computational resource utilization: Track memory usage, CPU time, and parallelization efficiency across different algorithm implementations and discretization schemes.

Numerical accuracy: Evaluate accuracy by comparing computed free energies, density profiles, and morphological features against analytical solutions or highly refined benchmark simulations.

Spatial resolution capability: Assess the algorithm's ability to resolve sharp interfaces, boundary layers, and localized features without numerical instabilities or excessive computational cost.

Scalability: Analyze performance with increasing system size and complexity to determine practical limits and identify potential bottlenecks in real-world applications.

These metrics collectively provide a comprehensive framework for evaluating SCF algorithm performance and guiding selection of appropriate numerical methods for specific HTS data analysis applications.
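The convergence-rate criterion above translates directly into code. This is a minimal sketch assuming the fields are stored as flat lists of grid values; the function names are hypothetical, and the default tolerance is the example threshold quoted in the text.

```python
def mean_abs_change(old_field, new_field):
    """Mean absolute difference between two fields on the same grid."""
    total = sum(abs(a - b) for a, b in zip(old_field, new_field))
    return total / len(old_field)

def is_converged(old_field, new_field, tol=1e-6):
    """True when the mean absolute field change falls below tol."""
    return mean_abs_change(old_field, new_field) < tol

previous = [0.10, 0.20, 0.30, 0.40]
current = [0.10, 0.20, 0.30, 0.40 + 2e-6]
print(mean_abs_change(previous, current), is_converged(previous, current))
```

Other norms, such as the maximum absolute change, are stricter and may be preferable when sharp interfaces dominate the error.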

Comparative Analysis of SCF Methodologies

Quantitative Performance Assessment

The evaluation of different SCF methodologies reveals significant variation in computational performance and accuracy across algorithmic approaches. Recent research demonstrates that adaptive real-space methods achieve notably improved accuracy in polymer brush systems with minimal additional computational cost compared to uniform discretization approaches [19]. In simulations of diblock copolymer films, adaptive discretization successfully resolved sharp interfacial regions that caused instabilities in standard real-space methods, while maintaining computational times within practical limits for high-throughput applications.

Table 2: SCF Algorithm Performance Comparison

| Algorithm Type | Convergence Speed | Memory Requirements | 3D Capability | Accuracy at Interfaces | Implementation Complexity |
|---|---|---|---|---|---|
| Spectral | Fast (when symmetry known) | Moderate | Limited | High for periodic systems | Low (for symmetric systems) |
| Standard Real-Space | Slow to moderate | High | Good | Low to moderate | Moderate |
| Pseudo-Spectral | Moderate | Moderate | Fair | Moderate | High |
| Adaptive Real-Space | Moderate to fast | Adaptive (lower overall) | Excellent | High | High |

For HTS applications where computational efficiency must be balanced against solution accuracy, adaptive SCF methods present a particularly compelling approach. By concentrating computational resources where they are most needed—at interfaces, near boundaries, and in regions of high field gradient—these algorithms provide the robustness required for automated processing of large screening datasets without excessive computational overhead. This characteristic makes them ideally suited for integration into HTS pipelines where hundreds or thousands of simulations may be required to fully characterize compound activity across different assay conditions and biological targets.

Integration with HTS Data Processing Workflows

The effective integration of SCF methodologies into HTS data processing requires careful consideration of computational workflow design and implementation specifics. Successful integration involves multiple stages:

Data preprocessing and normalization: Before SCF analysis, HTS data must undergo rigorous preprocessing to address technical artifacts. This includes applying normalization methods such as z-score, percent inhibition, or median-based approaches to correct for plate-based effects and other sources of systematic variation [16].

Quality control assessment: Implement automated quality control metrics such as z'-factors, signal-to-background ratios, and coefficient of variation calculations to identify potential assay artifacts or data quality issues that could compromise subsequent SCF analysis [16].

Algorithm selection: Choose appropriate SCF numerical methods based on dataset characteristics—spectral methods for data with periodic structures or known symmetry, real-space methods for complex or non-periodic systems, and adaptive approaches for datasets with sharp interfaces or boundary effects [19].

High-performance computing implementation: Leverage parallel computing architectures to enable simultaneous processing of multiple screening plates or conditions, significantly reducing turnaround time for large-scale HTS campaigns.

Result validation and interpretation: Implement automated validation protocols to ensure SCF results correspond to physically meaningful states rather than numerical artifacts, incorporating statistical measures to assess result reliability and reproducibility.

This integrated approach ensures that SCF methodologies effectively address the specific challenges of HTS data analysis while maintaining computational efficiency appropriate for large-scale screening environments.
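The normalization and quality control stages can be illustrated with the two most common statistics. The sketch below uses the usual definition of the Z'-factor, 1 − 3(σ_pos + σ_neg)/|μ_pos − μ_neg|, and a plain z-score; the control readings are invented numbers, and the use of the sample standard deviation is an assumption, since [16] is not quoted here on that point.

```python
import statistics

def z_prime(positive, negative):
    """Z'-factor from positive- and negative-control readings."""
    spread = statistics.stdev(positive) + statistics.stdev(negative)
    window = abs(statistics.mean(positive) - statistics.mean(negative))
    return 1.0 - 3.0 * spread / window

def z_scores(values):
    """Standardize readings from one plate to zero mean and unit variance."""
    mu = statistics.mean(values)
    sd = statistics.stdev(values)
    return [(v - mu) / sd for v in values]

# Hypothetical control wells from a single plate.
pos_controls = [100.0, 102.0, 98.0, 101.0, 99.0]
neg_controls = [10.0, 12.0, 8.0, 11.0, 9.0]
quality = z_prime(pos_controls, neg_controls)
print(f"Z'-factor = {quality:.3f}")
print(z_scores([1.0, 2.0, 3.0]))
```

Computing these statistics per plate and per run date is what makes batch-dependent quality differences, such as those reported for the CDC25B dataset, visible.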

Table 3: Key Research Reagent Solutions for HTS and SCF Implementation

| Resource Category | Specific Tools/Platforms | Primary Function | Application Context |
|---|---|---|---|
| Public HTS Databases | PubChem Bioassay, ChemBank | Repository of screening results & metadata | Source of primary screening data for drug repositioning [16] |
| SCF Algorithm Codes | 1D-3D SCF code (publicly available) | Simulation of polymeric systems | Modeling polymer morphology & interactions [19] |
| Quality Control Metrics | Z'-factor, signal-to-background ratio | Assessment of assay quality & reliability | Identifying technical artifacts in HTS data [16] |
| Normalization Methods | Z-score, percent inhibition, median polish | Correction of technical variability | Standardizing HTS data across plates/batches [16] |
| Fluid Handling Systems | Submicroliter dispensers | Miniaturized assay implementation | Enabling uHTS in 1536-well formats [17] |

Visualization of SCF Workflows and Relationships

HTS-SCF Integrated Analysis Pipeline

SCF Algorithm Decision Framework

The integration of robust and automated Self-Consistent Field methodologies into High-Throughput Screening pipelines represents a critical advancement in addressing the pervasive challenges of technical variability, data complexity, and analytical reproducibility in drug discovery. The development of adaptive SCF algorithms that dynamically optimize discretization strategies specifically for regions influenced by external forces or boundary effects demonstrates particularly promising potential for enhancing both computational efficiency and solution accuracy in HTS applications [19]. These algorithmic innovations, coupled with rigorous experimental frameworks for systematic parameter optimization, establish a foundation for more reliable and interpretable screening outcomes.

Looking forward, the ongoing miniaturization of HTS assays toward submicroliter volumes and the growing complexity of biological screening models will further intensify the need for sophisticated computational approaches like SCF [17]. The continued refinement of these methodologies—including enhanced parallelization for high-performance computing environments, improved convergence algorithms for challenging systems, and tighter integration with experimental data streams—will play an essential role in unlocking the full potential of HTS for drug repositioning and novel therapeutic discovery. By addressing both the technical challenges of HTS data analysis and the computational limitations of traditional SCF approaches, these advances promise to accelerate the translation of screening results into clinically impactful therapeutics across a broad spectrum of human diseases.

Linking SCF Efficiency to Drug Discovery Pipelines and Biomolecular Simulation

The efficiency of the Self-Consistent Field (SCF) method is a critical determinant of performance across multiple domains within computational drug discovery. In quantum chemistry, SCF algorithms solve the fundamental electronic structure problem, forming the basis for Hartree-Fock and Density Functional Theory calculations essential for molecular modeling [4]. Concurrently, in pharmaceutical engineering, Supercritical Fluid (SCF) technology represents a green processing method for nanomedicine development, where mixing parameters between drug solutions and supercritical carbon dioxide directly impact nanoparticle properties [20]. This guide provides a systematic comparison of SCF efficiency research, examining parameter optimization strategies and performance outcomes across biomolecular simulation and drug processing applications to inform researcher methodology selection.

Comparative Analysis of SCF Performance Metrics

SCF Convergence Acceleration Methods in Electronic Structure Calculations

The SCF method for electronic structure calculations employs various convergence acceleration algorithms with distinct performance characteristics. The following table compares key acceleration methods based on implementation complexity, stability, and computational cost:

Table 1: Performance comparison of SCF convergence acceleration methods in electronic structure calculations

| Method | Implementation Complexity | Convergence Stability | Computational Cost | Ideal Use Cases |
|---|---|---|---|---|
| DIIS | Moderate | Moderate (can oscillate in difficult systems) | Low to Moderate | Standard systems with reasonable HOMO-LUMO gaps |
| MESA | Low | High | Low | Systems with small HOMO-LUMO gaps, metallic systems |
| LISTi | Moderate | High | Moderate | Open-shell systems, transition metal complexes |
| EDIIS | Moderate | High | Moderate | Difficult systems where DIIS fails |
| ARH | High | Very High | High | Extremely challenging cases (e.g., dissociating bonds) |

Direct inversion in the iterative subspace (DIIS) represents the most widely used acceleration approach, though its performance depends heavily on parameter tuning. Research indicates that adjusting DIIS parameters can significantly impact convergence behavior. For challenging systems, reducing the mixing parameter to 0.015 (from default 0.2) and increasing DIIS expansion vectors to 25 (from default 10) enhances stability despite increased iteration count [4]. The augmented Roothaan-Hall (ARH) method provides a robust alternative through direct minimization of the total energy as a function of the density matrix using a preconditioned conjugate-gradient approach, though at higher computational expense [4].
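To make the roles of the mixing parameter and the number of expansion vectors concrete, the following is a minimal sketch of Pulay-style DIIS applied to a toy two-dimensional fixed-point problem. It is not the implementation used by ADF or any other package: the function name `diis_fixed_point`, its arguments `mixing` and `n_vectors` (loosely mirroring the Mixing and N settings discussed above), and the linear test map are all assumptions made for illustration.

```python
import numpy as np

def diis_fixed_point(g, x0, mixing=0.2, n_vectors=10, tol=1e-8, max_iter=200):
    """Solve x = g(x) with damped Pulay/DIIS extrapolation (toy sketch)."""
    x = np.asarray(x0, dtype=float)
    xs, rs = [], []                      # history of iterates and residuals
    for iteration in range(1, max_iter + 1):
        r = g(x) - x                     # residual of the fixed-point map
        if np.linalg.norm(r) < tol:
            return x, iteration
        xs = (xs + [x])[-n_vectors:]     # keep at most n_vectors entries
        rs = (rs + [r])[-n_vectors:]
        X, R = np.array(xs), np.array(rs)
        m = len(rs)
        # Bordered system: minimise |sum_i c_i r_i| subject to sum_i c_i = 1.
        B = np.zeros((m + 1, m + 1))
        B[:m, :m] = R @ R.T
        B[:m, m] = 1.0
        B[m, :m] = 1.0
        rhs = np.zeros(m + 1)
        rhs[m] = 1.0
        # lstsq tolerates the rank-deficient B that can arise for long histories.
        c = np.linalg.lstsq(B, rhs, rcond=None)[0][:m]
        # Extrapolated iterate plus a damped step along the mixed residual.
        x = c @ (X + mixing * R)
    raise RuntimeError("DIIS did not converge within max_iter iterations")

# Hypothetical toy problem: linear map g(x) = A x + b with fixed point (2, 2/3).
A = np.diag([0.5, -0.5])
b = np.array([1.0, 1.0])
solution, n_iter = diis_fixed_point(lambda x: A @ x + b, np.zeros(2))
print(solution, n_iter)
```

In this sketch a smaller `mixing` shortens the damped step taken after each extrapolation, while a larger `n_vectors` lets the extrapolation draw on a longer history. That is the same trade-off between stability and iteration count described above, although real electronic structure problems are nonlinear and behave less predictably than this toy map.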

Machine Learning Approaches for Supercritical Fluid Process Optimization

In pharmaceutical processing, machine learning methods have demonstrated remarkable performance in predicting drug solubility in supercritical CO₂, a critical SCF efficiency metric:

Table 2: Performance comparison of ML models for predicting drug solubility in supercritical CO₂

| Model Architecture | R² Score | RMSE | Key Advantages | Limitations |
|---|---|---|---|---|
| Ensemble (XGBR + LGBR + CATr) | 0.9920 | 0.08878 | Captures complex non-linear relationships | Requires large datasets for training |
| XGBoost Regression (XGBR) | Not reported | Not reported | Handles missing values effectively | Parameter tuning complexity |
| Light Gradient Boosting (LGBR) | Not reported | Not reported | Fast training speed | May overfit on small datasets |
| CatBoost Regression (CATr) | Not reported | Not reported | Excellent categorical data handling | Higher memory usage |

Advanced ensemble frameworks combining multiple machine learning regressors with bio-inspired optimization algorithms have achieved exceptional predictive accuracy for pharmaceutical solubility in supercritical CO₂, with an ensemble of Extreme Gradient Boosting Regression, Light Gradient Boosting Regression, and CatBoost Regression optimized by the Hippopotamus Optimization Algorithm reaching R² = 0.9920 and RMSE = 0.08878 [21]. These models successfully capture the complex non-linear relationships between thermodynamic conditions and drug solubility that challenge traditional empirical and semi-empirical methods.

Experimental Protocols for SCF Efficiency Research

Protocol 1: SCF Convergence Testing for Electronic Structure Methods

Objective: Systematically evaluate SCF convergence acceleration methods for biomolecular systems with small HOMO-LUMO gaps or open-shell configurations.

Materials and Setup:

- Molecular system coordinates (properly optimized with realistic bond lengths and angles)

- Quantum chemistry software (e.g., ADF, Gaussian, Q-Chem)

- Computational resources appropriate for system size

Procedure:

- Initial Setup: Verify atomistic system realism with proper bond lengths and angles. Confirm atomic coordinates use correct units (typically Ångströms) [4].

- Electronic Configuration: Set correct spin multiplicity for open-shell systems. Use unrestricted formalism when necessary.

- Initial Guess Selection: Employ moderately converged electronic structure from previous calculation as initial guess when available.

- Baseline DIIS: Run standard DIIS with default parameters (Mixing=0.2, N=10, Cyc=5).

- Parameter Optimization: For non-converging systems, implement conservative parameters (N=25, Cyc=30, Mixing=0.015, Mixing1=0.09).

- Alternative Algorithms: Test MESA, LISTi, or EDIIS accelerators if DIIS fails.

- Advanced Methods: For persistently problematic systems, implement ARH method or electron smearing with finite temperature (0.01-0.05 Hartree).

- Convergence Monitoring: Track SCF error evolution across iterations, noting oscillatory behavior indicating instability.

Validation: Compare final energies and properties with known reference values where available. For novel systems, verify physical reasonableness of electron density and molecular properties [4].
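The convergence-monitoring and escalation steps of Protocol 1 can be sketched as a small helper that classifies an SCF error history and suggests the next setting to try. This is an illustrative sketch only: the function names, the classification thresholds, and the dictionary layout are assumptions, and only the numerical parameter values are taken from the protocol above.

```python
# Parameter sets quoted in Protocol 1; the dictionary layout is hypothetical.
PARAMETER_SETS = {
    "baseline": {"Mixing": 0.2, "N": 10, "Cyc": 5},
    "conservative": {"Mixing": 0.015, "Mixing1": 0.09, "N": 25, "Cyc": 30},
}

def classify_scf_history(errors, tol=1e-6, window=6):
    """Label an SCF error history using simple, assumed heuristics."""
    if not errors:
        raise ValueError("errors must contain at least one value")
    if errors[-1] < tol:
        return "converged"
    recent = errors[-window:]
    if len(recent) < 4:
        return "undetermined"            # too little data to judge a trend
    diffs = [b - a for a, b in zip(recent, recent[1:])]
    # Count how often the error switches between rising and falling.
    sign_flips = sum(1 for d0, d1 in zip(diffs, diffs[1:]) if d0 * d1 < 0)
    if sign_flips > len(diffs) // 2:
        return "oscillating"
    # Assumed rule: call it progress if the error halved over the window.
    if recent[-1] < 0.5 * recent[0]:
        return "decreasing"
    return "stalled"

def suggest_next_step(status, current="baseline"):
    """Map a status label to the next rung of the Protocol 1 ladder."""
    if status == "converged":
        return "done"
    if status in ("decreasing", "undetermined"):
        return "continue with " + current
    if current == "baseline":
        return "switch to conservative"
    return "try MESA, LISTi, or EDIIS; then ARH or electron smearing"

history = [0.1, 0.3, 0.1, 0.3, 0.1, 0.3]
status = classify_scf_history(history)
print(status, "->", suggest_next_step(status))
```

The thresholds (a six-step window, a halving criterion) are arbitrary starting points and would need adjusting to the error measure reported by the chosen software.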

Protocol 2: Machine Learning Workflow for Supercritical Solubility Prediction

Objective: Develop optimized ensemble machine learning models for predicting drug solubility in supercritical CO₂.

Materials:

- Experimental solubility dataset (temperature, pressure, molecular weight, melting point, solubility)

- Machine learning framework (Python with XGBoost, LightGBM, CatBoost libraries)

- Bio-inspired optimization algorithms (Artificial Protozoa Optimizer, Hippopotamus Optimization Algorithm)

Procedure:

- Data Collection: Compile dataset of experimental samples reflecting thermodynamic conditions and molecular properties. Example: 110 experimental samples for Rifampin, Sirolimus, Tacrolimus, and Teriflunomide [21].

- Data Preprocessing: Normalize features, handle missing values, and split data into training/testing sets.

- Model Initialization: Implement three base regressors: XGBoost Regression, Light Gradient Boosting Regression, and CatBoost Regression.

- Optimization: Apply bio-inspired optimization algorithms (APO and HOA) to hyperparameter tuning.

- Ensemble Construction: Combine optimized base models through weighted averaging or stacking ensemble methods.

- Validation: Perform k-fold cross-validation to ensure robustness and generate prediction intervals using bootstrapping.

- Interpretability Analysis: Apply SHAP and FAST sensitivity analysis to identify feature importance.

- Performance Evaluation: Calculate R², RMSE, and other relevant metrics on holdout test set.

Validation: Compare predicted versus experimental solubility values across multiple temperature and pressure conditions. Validate model transferability with novel pharmaceutical compounds not included in training data [21].
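The ensemble-construction and performance-evaluation steps of Protocol 2 can be illustrated with a short NumPy sketch. It deliberately leaves out the XGBoost, LightGBM, and CatBoost models and the bio-inspired optimizers, and works on predictions that are assumed to be already available. The inverse-MSE weighting rule, the function names, and the numbers in the toy arrays are all assumptions made for illustration; they are not data or methods from the cited study.

```python
import numpy as np

def rmse(y_true, y_pred):
    """Root-mean-square error between two equal-length sequences."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def r2_score(y_true, y_pred):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

def inverse_mse_weights(y_val, predictions):
    """Weight each model by 1/MSE on validation data (assumes nonzero MSE)."""
    y_val = np.asarray(y_val, float)
    mse = np.array([np.mean((y_val - np.asarray(p, float)) ** 2)
                    for p in predictions])
    inv = 1.0 / mse
    return inv / inv.sum()               # normalise so the weights sum to 1

def weighted_ensemble(predictions, weights):
    """Blend model predictions (rows) with the given weights."""
    return np.asarray(weights, float) @ np.asarray(predictions, float)

# Made-up illustrative values, not experimental solubility data.
y_val = [1.0, 2.0, 3.0, 4.0]
pred_a = [1.1, 1.9, 3.2, 3.8]            # stand-in for one base regressor
pred_b = [0.5, 2.5, 2.5, 4.5]            # stand-in for another base regressor

weights = inverse_mse_weights(y_val, [pred_a, pred_b])
blended = weighted_ensemble([pred_a, pred_b], weights)
print(weights, rmse(y_val, blended), r2_score(y_val, blended))
```

In practice the weights would be fitted on a validation fold and the metrics reported on a separate holdout set, as the protocol specifies; the sketch reuses one array for both purely to stay short.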

Visualization of SCF Research Workflows

SCF Convergence Optimization Pathway


Supercritical Fluid Drug Development Workflow


Research Reagent Solutions for SCF Studies

Table 3: Essential research reagents and computational tools for SCF efficiency studies

| Category | Specific Tools/Reagents | Function/Purpose | Application Context |
|---|---|---|---|
| Computational Software | ADF, Gaussian, Q-Chem | Electronic structure calculations | Biomolecular simulation, SCF convergence studies |
| Machine Learning Libraries | XGBoost, LightGBM, CatBoost | Building predictive models for solubility | Supercritical fluid process optimization |
| Optimization Algorithms | Artificial Protozoa Optimizer, Hippopotamus Optimization Algorithm | Hyperparameter tuning for ML models | Enhancing prediction accuracy in SCF processes |
| Supercritical Fluids | Carbon dioxide (SCCO₂) | Environmentally friendly solvent | Nanomedicine preparation, drug particle formation |
| Pharmaceutical Compounds | Rifampin, Sirolimus, Tacrolimus, Teriflunomide | Model drugs for solubility studies | Benchmarking SCF processes for pharmaceuticals |
| Analysis Tools | SHAP, FAST sensitivity analysis | Model interpretability and feature importance | Understanding factors affecting SCF efficiency |

Discussion and Performance Outlook

The comparative analysis reveals significant differences in optimization approaches for SCF efficiency across computational chemistry and pharmaceutical processing domains. In electronic structure calculations, parameter tuning focuses on numerical stability through mixing parameters and convergence thresholds [4], while supercritical fluid process optimization employs sophisticated machine learning ensembles to capture complex thermodynamic relationships [21].

For biomolecular simulations, our findings indicate that no single SCF acceleration method dominates across all system types. DIIS with optimized parameters provides the best performance for most standard systems, while MESA offers advantages for metallic systems with small HOMO-LUMO gaps. ARH, despite its computational expense, delivers reliable convergence for the most challenging cases like transition states with dissociating bonds [4]. Recent advances in polarizable force fields further complicate SCF convergence requirements, as explicit treatment of polarization effects introduces additional computational complexity [22].

In pharmaceutical applications, ensemble machine learning methods have demonstrated superior performance compared to traditional empirical and semi-empirical models for predicting drug solubility in supercritical CO₂ [21] [20]. The integration of bio-inspired optimization algorithms with multiple regressors achieves exceptional predictive accuracy (R² = 0.9920), enabling more efficient design of supercritical nanomedicine production processes. These computational advances are particularly valuable given the experimental challenges in directly measuring process parameters within sealed supercritical equipment maintaining high-temperature and high-pressure environments [20].

Future directions in SCF efficiency research will likely involve greater integration between quantum mechanical modeling and machine learning approaches, with emerging hybrid quantum-classical workflows showing promise for addressing complex biomolecular interactions [23]. The ongoing development of polarizable force fields that more accurately represent electronic polarization effects will also drive continued innovation in SCF algorithms for biomolecular simulation [22].

Advanced SCF Methodologies: From Fixed-Damping to Adaptive Algorithms

Comparative Analysis of Fixed Damping vs. Adaptive Line Search Strategies

Self-Consistent Field (SCF) iterations are a fundamental computational method for solving electronic structure problems in quantum chemistry and materials science, particularly within Kohn-Sham density-functional theory (DFT) and Hartree-Fock methods. The convergence behavior of these iterations profoundly impacts the reliability and efficiency of computational workflows across diverse scientific domains, including drug development and materials design [8]. A critical parameter governing SCF convergence is the damping factor (or mixing parameter), which controls how drastically the electron density or potential updates between iterations.

Traditionally, SCF simulations employ fixed damping, where a constant damping parameter is preselected based on user experience or heuristic rules. While computationally straightforward, this approach often requires manual tuning and suffers from reliability issues with challenging systems. In response, adaptive line search strategies have emerged as robust alternatives that automatically optimize the damping parameter at each SCF step [8] [24].

This guide provides a systematic comparison of these competing approaches, framing the analysis within broader research efforts to optimize mixing parameters for enhancing SCF efficiency and robustness, particularly for high-throughput computational environments.

Theoretical Foundations and Algorithmic Principles

The Self-Consistent Field Problem

The SCF method aims to find the electronic ground state by solving a series of one-electron equations where the potential depends on the electron density itself, creating a nonlinear fixed-point problem. The standard approach uses damped, preconditioned iterations of the form:

$$V_{\text{next}} = V_{\text{in}} + \alpha P^{-1}(V_{\text{out}} - V_{\text{in}})$$

Here, $V_{\text{in}}$ and $V_{\text{out}}$ represent input and output potentials, $P$ is a preconditioner, and $\alpha$ is the damping parameter controlling step size [8]. The central challenge lies in selecting $\alpha$ to ensure stable, monotonic convergence to the ground state.
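The damped, preconditioned update can be tried out on a toy problem. The sketch below replaces the SCF map with a hypothetical linear map `g(v) = A v + b` and uses an identity preconditioner by default. The function name `damped_iteration`, the matrix `A`, and the vector `b` are assumptions chosen so that the undamped iteration ($\alpha = 1$) diverges while a damped one converges.

```python
import numpy as np

def damped_iteration(g, v0, alpha, precond=lambda r: r, tol=1e-10, max_iter=500):
    """Iterate v <- v + alpha * P^{-1}(g(v) - v); return (v, iterations, converged)."""
    v = np.asarray(v0, dtype=float)
    for iteration in range(1, max_iter + 1):
        residual = g(v) - v              # plays the role of V_out - V_in
        if np.linalg.norm(residual) < tol:
            return v, iteration, True
        v = v + alpha * precond(residual)
    return v, max_iter, False

# Hypothetical toy map with fixed point (2.0, 0.4).
A = np.diag([0.5, -1.5])
b = np.array([1.0, 1.0])

def g(v):
    return A @ v + b

v_damped, n_damped, ok_damped = damped_iteration(g, np.zeros(2), alpha=0.5)
v_plain, n_plain, ok_plain = damped_iteration(g, np.zeros(2), alpha=1.0)
print("alpha=0.5:", ok_damped, n_damped, v_damped)
print("alpha=1.0:", ok_plain)
```

With $\alpha = 1$ the component associated with the eigenvalue $-1.5$ of `A` is amplified at every step, whereas $\alpha = 0.5$ shrinks both components. A non-trivial `precond` callable could be passed to mimic the effect of $P^{-1}$.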

Fixed Damping Approach

The fixed damping approach selects a constant $\alpha$ value prior to simulation initiation. This selection often relies on:

- Empirical wisdom from similar chemical systems

- Heuristic rules implemented in high-throughput frameworks

- Trial-and-error preliminary calculations

While simple to implement, this method suffers from significant limitations. Optimal $\alpha$ values vary substantially between systems, and suboptimal choices can lead to slow convergence, oscillatory behavior, or complete SCF failure, particularly in challenging systems like metals, surfaces, or transition-metal alloys [8].
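Why the best fixed $\alpha$ is system dependent can be seen from a standard linearized analysis of the damped iteration near its fixed point. The notation below is introduced only for this sketch and is not taken from the cited work: $e_n$ is the error in the potential at step $n$, $J$ is the Jacobian of the map from input to output potential, and $M = P^{-1}(I - J)$ is assumed to have real, positive eigenvalues $\lambda_i$ between $\lambda_{\min}$ and $\lambda_{\max}$.

$$
\begin{aligned}
e_{n+1} &\approx (I - \alpha M)\, e_n, \\
\text{convergence} &\iff |1 - \alpha \lambda_i| < 1 \ \text{for all } i \iff 0 < \alpha < \frac{2}{\lambda_{\max}}, \\
\alpha_{\text{opt}} &= \frac{2}{\lambda_{\min} + \lambda_{\max}}, \qquad
\rho_{\text{opt}} = \frac{\kappa - 1}{\kappa + 1}, \qquad \kappa = \frac{\lambda_{\max}}{\lambda_{\min}}.
\end{aligned}
$$

For example, $\lambda_{\min} = 0.5$ and $\lambda_{\max} = 2.5$ give a stability limit of $\alpha < 0.8$, an optimal damping of $\alpha_{\text{opt}} = 2/3$, and an error reduction factor of $\rho_{\text{opt}} = 2/3$ per step. Because the spectrum of $M$ changes from system to system, and is widest for systems such as metals, a damping value tuned for one system can be too slow or unstable for another.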

Adaptive Line Search Strategies

Adaptive line search strategies determine the damping parameter dynamically during SCF iterations. The approach developed by Herbst and Levitt employs a backtracking line search based on an inexpensive, theoretically sound quadratic model of the energy as a function of the damping parameter [8] [24].

At each SCF step $n$, given a trial potential $V_n$ and search direction $\delta V_n$, the algorithm seeks an optimal step size $\alpha_n$ such that:

$$V_{n+1} = V_n + \alpha_n \delta V_n$$

The $\alpha_n$ is selected to ensure energy decrease, providing mathematical guarantees of convergence under mild conditions [8]. This approach is fully automatic, parameter-free, and compatible with existing preconditioning and acceleration techniques like Anderson acceleration [24].
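The idea of choosing $\alpha_n$ by backtracking until the energy decreases can be illustrated on a toy quadratic energy. The sketch below is not the algorithm of Herbst and Levitt: it substitutes a generic Armijo sufficient-decrease test for their quadratic energy model, uses the negative gradient as the search direction, and omits preconditioning and Anderson acceleration. The function name and the toy energy are assumptions.

```python
import numpy as np

def backtracking_iteration(energy, gradient, v0, alpha0=1.0, shrink=0.5,
                           c1=1e-4, tol=1e-6, max_iter=500):
    """Descent with a backtracked step size; returns (v, iterations, alphas)."""
    v = np.asarray(v0, dtype=float)
    alphas = []                          # accepted step size at each iteration
    for iteration in range(1, max_iter + 1):
        grad = gradient(v)
        if np.linalg.norm(grad) < tol:
            return v, iteration, alphas
        delta = -grad                    # search direction (assumed choice)
        e0 = energy(v)
        alpha = alpha0
        # Shrink alpha until the energy drops by at least c1 * alpha * |grad|^2.
        while energy(v + alpha * delta) > e0 - c1 * alpha * (grad @ grad):
            alpha *= shrink
            if alpha < 1e-12:
                raise RuntimeError("line search failed to find a decreasing step")
        alphas.append(alpha)
        v = v + alpha * delta
    raise RuntimeError("iteration did not converge within max_iter steps")

# Hypothetical toy energy E(v) = 0.5 v^T H v - b^T v, minimised at (2.0, 0.4).
H = np.diag([0.5, 2.5])
b = np.array([1.0, 1.0])

def energy(v):
    return 0.5 * v @ H @ v - b @ v

def gradient(v):
    return H @ v - b

v_min, n_steps, alphas = backtracking_iteration(energy, gradient, np.zeros(2))
print(v_min, n_steps, sorted(set(alphas)))
```

On this toy energy the accepted step size changes from one iteration to the next, which is the behavior a fixed damping parameter cannot reproduce. The extra energy evaluations per step are the cost of that robustness.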