Electronic Structure Convergence: Fundamental Principles, Methods, and Practical Applications in Drug Discovery

Abstract

This article provides a comprehensive guide to electronic structure convergence, a cornerstone of computational chemistry and materials science. Tailored for researchers and drug development professionals, it explores the fundamental quantum mechanical principles governing electron behavior, compares traditional and modern computational methods including semiempirical and machine learning approaches, and offers practical, code-specific troubleshooting strategies for overcoming convergence failures. By validating predictions against experimental data and comparing method performance across biological and material systems, this resource bridges theory and application, empowering scientists to reliably predict molecular properties and accelerate the design of novel therapeutics and advanced materials.

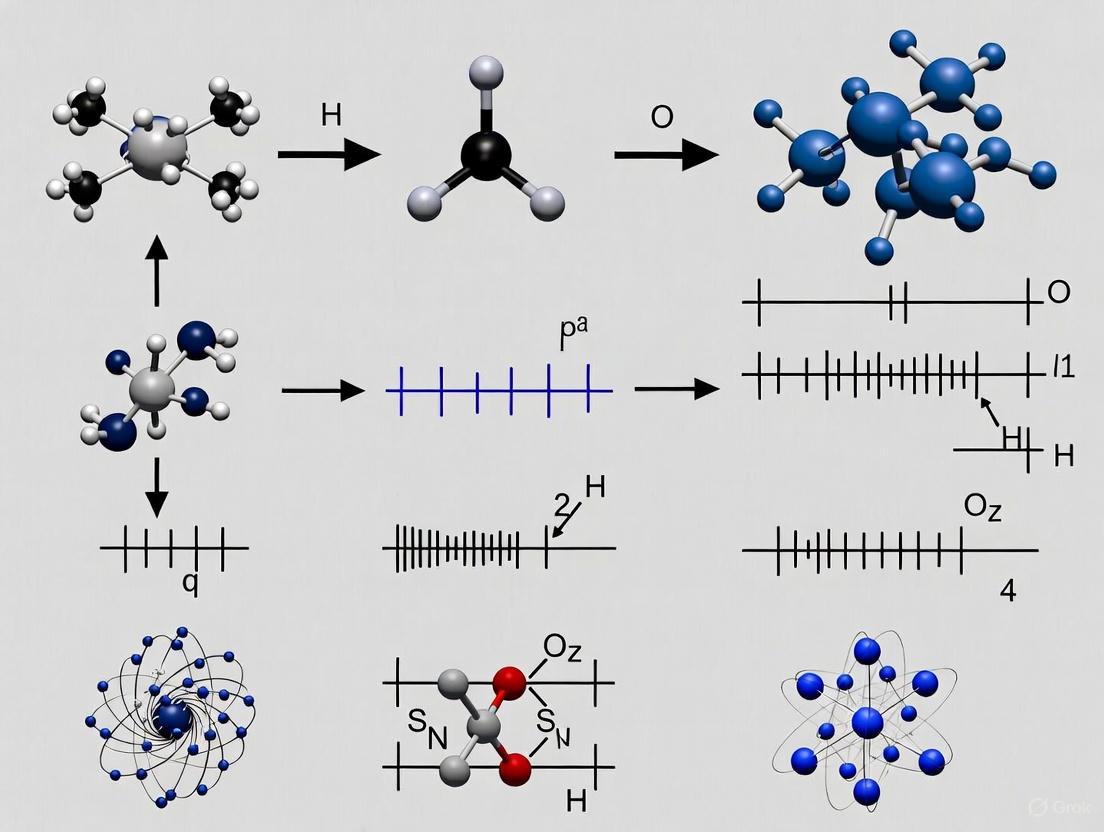

The Quantum Mechanical Bedrock: Understanding What Electronic Structure Convergence Means

The electronic structure of a system describes the arrangement and behavior of electrons within it, ultimately determining most of its physical and chemical properties. At its core, defining an electronic structure involves solving the many-body Schrödinger equation for the system's electrons under the influence of atomic nuclei. The fundamental challenge in electronic structure theory is the exponential complexity of the many-body wave function, which has driven the development of a wide range of approximation methods and computational approaches [1]. This technical guide examines the core components of electronic structure theory—wavefunctions, Hamiltonians, and the Schrödinger equation—within the critical context of electronic structure convergence, a fundamental consideration for obtaining physically meaningful and numerically accurate results in computational research.

The importance of this framework extends across multiple scientific domains. In drug development, accurate electronic structure calculations enable researchers to predict reaction pathways, understand enzyme mechanisms, and design targeted molecular therapeutics by providing insights into electronic properties, binding affinities, and reaction barriers that are inaccessible through purely experimental approaches. The journey from the fundamental quantum equations to practical computational methods requires careful attention to convergence at multiple levels, ensuring that approximations neither compromise accuracy nor impose prohibitive computational costs.

Theoretical Foundations

The Schrödinger Equation

The Schrödinger equation forms the cornerstone of quantum mechanics, providing a non-relativistic description of how quantum states evolve over time. Formulated by Erwin Schrödinger in 1926, this partial differential equation plays the role in quantum mechanics that Newton's second law plays in classical mechanics [2]. The time-dependent Schrödinger equation is written as:

[ i\hbar\frac{\partial}{\partial t}|\Psi(t)\rangle = \hat{H}|\Psi(t)\rangle ]

where (i) is the imaginary unit, (\hbar) is the reduced Planck's constant, (|\Psi(t)\rangle) is the quantum state vector of the system, and (\hat{H}) is the Hamiltonian operator [2]. For many practical applications in electronic structure theory, we utilize the time-independent Schrödinger equation:

[ \hat{H}|\Psi\rangle = E|\Psi\rangle ]

where (E) represents the energy eigenvalues corresponding to the stationary states of the system [2]. The solutions to this eigenvalue equation provide both the wavefunctions and allowed energy levels of the system.

A critical property of the Schrödinger equation is its linearity, which ensures that any linear combination of solutions is itself a solution. This principle of superposition enables the description of complex quantum states through appropriate combinations of simpler basis states [2]. Another essential mathematical property is unitarity, which guarantees the conservation of probability over time, ensuring that the total probability of finding a particle in all possible states remains constant at unity [2].

The Hamiltonian Operator

The Hamiltonian operator (\hat{H}) represents the total energy of the quantum system and determines its time evolution. For a general molecular system, the electronic Hamiltonian can be expressed in second-quantized form as:

[ \hat{H}^e = \sum_{p,q} h_q^p \hat{a}_p^\dagger \hat{a}_q + \frac{1}{2} \sum_{p,q,r,s} g_{r,s}^{p,q} \hat{a}_p^\dagger \hat{a}_q^\dagger \hat{a}_r \hat{a}_s ]

where (h_q^p) are the one-electron integrals encompassing kinetic energy and nuclear attraction, (g_{r,s}^{p,q}) are the two-electron integrals representing electron-electron repulsion, and (\hat{a}_p^\dagger) and (\hat{a}_q) are creation and annihilation operators, respectively [1].

Each physically measurable quantity in quantum mechanics has a corresponding operator, with the eigenvalues of that operator representing the only possible values that can be observed experimentally [3]. The quantum mechanical operator for any observable is constructed from its classical mechanical expression by replacing the Cartesian momenta (p_j) with the differential operator (-i\hbar\frac{\partial}{\partial q_j}) while leaving the position coordinates (q_j) unchanged [3].
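As a worked instance of this substitution rule, consider the kinetic energy of a single particle of mass (m) along one Cartesian coordinate (q_j). The mass symbol (m) and the label (\hat{T}) are notation chosen here for illustration and do not come from the cited source:

```latex
T_{\text{classical}} = \frac{p_j^2}{2m}
\quad\longrightarrow\quad
\hat{T} = \frac{1}{2m}\left(-i\hbar\frac{\partial}{\partial q_j}\right)^{2}
        = -\frac{\hbar^2}{2m}\frac{\partial^2}{\partial q_j^2}
```

The two factors of (-i) combine to give the overall minus sign, which is why the kinetic energy operator is a negative multiple of the second derivative.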

Table 1: Core Components of the Quantum Hamiltonian for Molecular Systems

| Component | Mathematical Representation | Physical Significance |
|---|---|---|
| Kinetic Energy | (-\sum_{l}\frac{\hbar^2}{2m_l}\frac{\partial^2}{\partial q_l^2}) | Energy from electron motion |
| Potential Energy | (\sum_{l}\frac{1}{2}k(q_l-q_l^0)^2 + L(q_l-q_l^0)) | Electron-nucleus attraction and external fields |
| Electron-Electron Interaction | (\frac{1}{2}\sum_{p,q,r,s}g_{r,s}^{p,q}\hat{a}_p^\dagger\hat{a}_q^\dagger\hat{a}_r\hat{a}_s) | Coulomb repulsion between electrons |

Wavefunctions and Their Interpretation

The wavefunction (\Psi) provides a complete description of a quantum system. In the position-space representation, the wavefunction (\Psi(x,t)) contains all information about the system, with the square of its absolute value (|\Psi(x,t)|^2) defining a probability density function [2]. This probabilistic interpretation, formulated by Max Born, states that (|\Psi(x,t)|^2) gives the probability per unit volume of finding a particle at position (x) and time (t) [2] [4].

For many-electron systems, wavefunctions must satisfy the Pauli exclusion principle, requiring that they be antisymmetric with respect to the exchange of any two electrons. This antisymmetry condition is naturally incorporated through the use of Slater determinants, which provide the appropriate mathematical construction for representing multi-electron wavefunctions while enforcing the indistinguishability of identical particles [1].
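The antisymmetry requirement can be checked numerically on the smallest possible case. The sketch below builds a two-electron Slater determinant from two hypothetical one-dimensional orbitals (`orbital_a` and `orbital_b` are illustrative choices, and spin is ignored for simplicity) and evaluates it with the electron coordinates swapped and with both electrons at the same point.

```python
import math

def orbital_a(x):
    # Hypothetical even orbital (Gaussian), for illustration only
    return math.exp(-x * x)

def orbital_b(x):
    # Hypothetical odd orbital, for illustration only
    return x * math.exp(-x * x)

def slater_2e(x1, x2):
    """2x2 Slater determinant |a b| with the usual 1/sqrt(2!) prefactor."""
    return (orbital_a(x1) * orbital_b(x2)
            - orbital_b(x1) * orbital_a(x2)) / math.sqrt(2.0)

psi_12 = slater_2e(0.3, -0.7)    # electron 1 at 0.3, electron 2 at -0.7
psi_21 = slater_2e(-0.7, 0.3)    # same configuration with the electrons exchanged
psi_same = slater_2e(0.5, 0.5)   # both electrons at the same point

print(psi_12, psi_21, psi_same)
```

Exchanging the two coordinates flips the sign of the wavefunction, and the amplitude vanishes when both electrons occupy the same point, which is the Pauli exclusion principle in this simplified setting.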

The Heisenberg uncertainty principle imposes fundamental limitations on the precision with which certain pairs of physical properties, such as position and momentum, can be simultaneously known [4]. This principle emerges directly from the mathematical structure of quantum mechanics and has profound implications for the interpretation of wavefunctions, which can describe the probable locations of electrons but cannot specify definite trajectories.

Electronic Structure Methods and Convergence

Fundamental Approximations

Electronic structure methods typically begin with the Born-Oppenheimer approximation, which separates nuclear and electronic motion by exploiting the significant mass difference between nuclei and electrons. This allows researchers to solve the electronic Schrödinger equation for fixed nuclear positions, dramatically simplifying the computational problem. The resulting electronic wavefunctions and energies then form the basis for determining molecular structures, properties, and reaction pathways.

For periodic solids, Bloch's theorem provides another crucial simplification by exploiting translational symmetry [5] [6]. This theorem states that the wavefunctions of a periodic system can be written as the product of a plane wave and a periodic function:

[ \psi_{n\textbf{k}}(\textbf{r}) = e^{i\textbf{k} \cdot \textbf{r}} u_{n\textbf{k}}(\textbf{r}) ]

where (u_{n\textbf{k}}(\textbf{r})) has the periodicity of the crystal lattice, (\textbf{k}) is a wavevector in the Brillouin zone, and (n) is the band index [5] [6]. This transformation moves the problem from real space to reciprocal space, where the Brillouin zone represents the fundamental periodicity in momentum space.
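A quick numerical check of the Bloch form in one dimension is sketched below. The lattice constant `A`, the periodic function `u_periodic`, and the sample values of `k` and `x` are all hypothetical choices made for illustration. Translating by one lattice vector should multiply the wavefunction by the phase factor exp(i k A) and leave the probability density unchanged.

```python
import cmath
import math

A = 2.0  # hypothetical lattice constant

def u_periodic(x):
    # Any function with period A works; this one is an arbitrary example
    return 1.0 + 0.5 * math.cos(2.0 * math.pi * x / A)

def bloch(x, k):
    """One-dimensional Bloch function: plane wave times lattice-periodic part."""
    return cmath.exp(1j * k * x) * u_periodic(x)

k = 0.4 * math.pi / A   # wavevector inside the first Brillouin zone (-pi/A, pi/A]
x = 0.37

shifted = bloch(x + A, k)                           # psi(x + A)
phase_times_psi = cmath.exp(1j * k * A) * bloch(x, k)  # exp(i k A) * psi(x)

print(abs(shifted - phase_times_psi))
```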

Computational Methodologies

Multiple computational approaches have been developed to solve the electronic Schrödinger equation, each with specific strengths and limitations:

Table 2: Electronic Structure Methods and Their Convergence Characteristics

| Method | Theoretical Foundation | Convergence Considerations | Typical Applications |
|---|---|---|---|
| Density Functional Theory (DFT) | Kohn-Sham equations with approximate functionals | k-point sampling, basis set size, density cutoff | Periodic solids, surfaces, large molecules [5] [6] |
| Hartree-Fock (HF) | Wavefunction theory with mean-field approximation | Basis set completeness, integral thresholds | Molecular properties, starting point for correlated methods |
| Coupled Cluster (CC) | Exponential wavefunction ansatz | Excitation level, basis set, perturbative corrections | Accurate thermochemistry, reaction barriers [1] |
| Full Configuration Interaction (FCI) | Exact solution within basis set | Exponential scaling with system size | Benchmark calculations, small systems [1] |
| Neural Network Quantum States (NNQS) | Neural network parameterization of ψ | Network architecture, training convergence, sampling efficiency | Strongly correlated systems [1] |

The QiankunNet framework represents a recent advancement that combines Transformer architectures with efficient autoregressive sampling to solve the many-electron Schrödinger equation [1]. This approach utilizes a Transformer-based wave function ansatz that captures complex quantum correlations through attention mechanisms, while employing Monte Carlo Tree Search (MCTS) for efficient sampling of electron configurations [1]. For molecular systems up to 30 spin orbitals, QiankunNet has achieved correlation energies reaching 99.9% of the FCI benchmark, demonstrating its potential for high-accuracy calculations [1].

Convergence Fundamentals

Electronic structure convergence refers to the process of systematically refining computational parameters until the results become stable within a desired tolerance. Key convergence parameters include:

- Basis set completeness: The choice and size of the basis set used to expand molecular orbitals significantly impact accuracy. Larger basis sets provide more flexibility but increase computational cost [5].

- k-point sampling: For periodic systems, integration over the Brillouin zone is approximated using a finite set of k-points [5] [6]. Denser k-point meshes are required for metals than for insulators due to the sharp features in their electronic structure near the Fermi level.

- Integration grids: Numerical integration of exchange-correlation functionals in DFT requires careful convergence with respect to grid density.

- Geometric convergence: Structural optimization must be carried out until forces on atoms and stress tensors fall below specified thresholds.

Recent trends in high-throughput computing have driven the development of automated convergence protocols, with k-point densities as high as 5,000 k-points/Å⁻³ sometimes required to achieve total energy accuracy better than 1 meV per atom for thermodynamic studies [5] [6]. Methods based on machine learning are also being explored to select optimal k-point grids tailored to specific problems [5] [6].
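The idea of refining a parameter until the result is stable within a tolerance can be written as a small generic loop. The sketch below is not tied to any particular code: `model_energy` is a hypothetical stand-in for a real calculation, and the cutoff values and tolerance are illustrative.

```python
def converge_parameter(compute, values, tol):
    """Return (value, result) for the first setting whose result differs
    from the previous setting's result by less than tol."""
    previous = None
    for value in values:
        result = compute(value)
        if previous is not None and abs(result - previous) < tol:
            return value, result
        previous = result
    raise RuntimeError("not converged within the supplied parameter range")

def model_energy(cutoff):
    # Hypothetical model: the energy approaches -10.0 eV as 1 / cutoff**2.
    # In practice this would launch an electronic structure calculation.
    return -10.0 + 50.0 / cutoff ** 2

cutoff, energy = converge_parameter(model_energy, range(200, 1001, 100), tol=1e-4)
print(cutoff, energy)
```

A small difference between successive settings is a practical heuristic rather than a proof of convergence, so it is usually worth confirming the chosen value with one additional, denser setting.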

Electronic Structure Convergence Hierarchy

Experimental Protocols and Methodologies

First Principles Workflow

A systematic protocol for electronic structure calculations ensures proper convergence and reliable results:

System Preparation

Basis Set Selection

- Choose an appropriate basis set type (plane waves, Gaussian-type orbitals, numerical atomic orbitals)

- Perform initial calculations with a moderate basis set size

- Systematically increase basis set quality until target properties converge

Hamiltonian Parameterization

- Select the appropriate level of theory (DFT functional, Hartree-Fock, correlated method)

- For DFT, choose exchange-correlation functional based on system properties

- For wavefunction methods, determine the reference wavefunction and correlation treatment

Self-Consistent Field (SCF) Procedure

- Construct initial density matrix or electron density

- Solve the Kohn-Sham or Hartree-Fock equations iteratively

- Employ convergence acceleration techniques (DIIS, density mixing) if needed

- Verify SCF convergence through energy and density changes

Property Evaluation

- Calculate electronic energy, forces, and stresses

- Determine electronic properties (band structure, density of states, molecular orbitals)

- Evaluate response properties (polarizabilities, vibrational frequencies)

For the specific case of the QiankunNet framework, the methodology incorporates several innovative steps [1]:

Physics-Informed Initialization: The neural network parameters are initialized using truncated configuration interaction solutions, providing a principled starting point for variational optimization [1].

Autoregressive Sampling: The wavefunction amplitude for each electron configuration is sampled using a layer-wise Monte Carlo Tree Search that naturally enforces electron number conservation [1].

Variational Optimization: The energy expectation value is minimized with respect to the neural network parameters using stochastic gradient descent [1].

Parallel Evaluation: Local energy evaluation is implemented in parallel using a compressed Hamiltonian representation to reduce memory requirements [1].

Convergence Assessment Protocol

A rigorous convergence assessment protocol is essential for producing reliable electronic structure results:

Basis Set Convergence

- Perform calculations with systematically increasing basis set size

- Plot target properties (energy, forces, band gaps) versus basis set quality

- Apply extrapolation techniques where appropriate
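One widely used extrapolation technique for correlation energies is the two-point inverse-cubic formula, which assumes that the energy computed with a basis of cardinal number X behaves as E(X) = E_CBS + A / X³. The sketch below implements that formula. The input energies are hypothetical and are generated from the same model, so the extrapolation recovers the model limit exactly.

```python
def extrapolate_cbs(e_small, x_small, e_large, x_large):
    """Two-point extrapolation assuming E(X) = E_CBS + A / X**3."""
    xs3, xl3 = x_small ** 3, x_large ** 3
    return (xl3 * e_large - xs3 * e_small) / (xl3 - xs3)

# Hypothetical correlation energies in Hartree for a triple-zeta (X = 3)
# and a quadruple-zeta (X = 4) basis, built from the model with
# E_CBS = -0.300 and A = 0.27.
e_tz = -0.300 + 0.27 / 3 ** 3
e_qz = -0.300 + 0.27 / 4 ** 3

e_cbs = extrapolate_cbs(e_tz, 3, e_qz, 4)
print(e_cbs)
```

Real data only approximately follow the inverse-cubic form, so the extrapolated value carries a residual error that depends on the method and on the basis set family.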

k-Point Convergence for Periodic Systems

Geometric Convergence

- Optimize atomic positions until maximum forces fall below threshold (typically 0.01 eV/Å)

- For cell optimization, ensure stress tensor components are sufficiently small

- Verify that the final structure corresponds to a true minimum through vibrational analysis

Methodological Convergence

- Compare results across different theoretical approaches (e.g., DFT vs. wavefunction methods)

- Assess the impact of including higher-order excitations in correlated methods

- For DFT, evaluate sensitivity to exchange-correlation functional choice

Convergence Assessment Workflow

Research Reagent Solutions: Computational Tools

Table 3: Essential Computational Tools for Electronic Structure Research

| Tool Category | Representative Examples | Primary Function | Convergence Considerations |
|---|---|---|---|
| DFT Codes | VASP, Quantum ESPRESSO, ABINIT | Periodic system calculations | k-point sampling, plane-wave cutoff, SCF mixing parameters [5] [6] |
| Quantum Chemistry Packages | Gaussian, PySCF, CFOUR | Molecular electronic structure | Basis set choice, integral thresholds, correlation treatment level |
| Wavefunction-Based Codes | NECI, MRCC, BAGEL | Correlated electron methods | Active space selection, excitation levels, perturbative corrections |
| Neural Network Quantum State Frameworks | QiankunNet, NetKet | Neural network representation of ψ | Network architecture, sampling efficiency, training convergence [1] |
| Electronic Structure Analysis | VESTA, VMD, ChemCraft | Visualization and property extraction | Isosurface values, plotting parameters |

Results and Data Presentation

Quantitative Comparison of Method Performance

Systematic benchmarking of electronic structure methods provides crucial guidance for selecting appropriate computational approaches:

Table 4: Performance Benchmarks of Electronic Structure Methods for Molecular Systems

| Method | Basis Set | System Size (Spin Orbitals) | Energy Accuracy (% FCI) | Computational Cost Scaling |
|---|---|---|---|---|
| Hartree-Fock | cc-pVTZ | 10-50 | 80-95% | N³-N⁴ |
| DFT (Hybrid Functionals) | cc-pVTZ | 10-1000 | 90-99% | N³-N⁴ |
| CCSD | cc-pVTZ | 10-50 | 95-99% | N⁶ |
| CCSD(T) | cc-pVTZ | 10-30 | 99-99.9% | N⁷ |
| DMRG | cc-pVDZ | 20-100 | 99-99.9% | System-dependent |
| QiankunNet | STO-3G | 10-30 | 99.9% | Polynomial [1] |
| FCI (Reference) | cc-pVDZ | 10-18 | 100% | Exponential |

The data demonstrate that the recently developed QiankunNet framework achieves remarkable accuracy, recovering 99.9% of the FCI correlation energy for molecular systems up to 30 spin orbitals [1]. This performance surpasses conventional second-quantized neural network quantum state approaches, which may struggle to achieve chemical accuracy for challenging systems like N₂ [1].

k-Point Convergence Data

For periodic systems, k-point convergence represents a critical consideration:

Table 5: k-Point Convergence in Periodic DFT Calculations

| System Type | Recommended Initial k-Point Grid | High-Accuracy Grid | Energy Convergence Threshold |
|---|---|---|---|
| Insulators/Semiconductors | 4×4×4 | 8×8×8 | 1 meV/atom |
| Metals | 8×8×8 | 16×16×16 | 1 meV/atom |
| Surfaces/Slab Models | 4×4×1 | 8×8×1 | 5 meV/atom |
| 1D Nanostructures | 1×1×8 | 1×1×16 | 1 meV/atom |
| Molecular Crystals | 2×2×2 | 4×4×4 | 1 meV/atom |

Recent high-throughput computational studies indicate that k-point densities as high as 5,000 k-points/Å⁻³ may be necessary to guarantee total energy accuracy better than 1 meV per atom across diverse crystal structures with varying unit cell sizes and shapes [5] [6]. For metallic systems, additional considerations include the Fermi surface topology and the possible need for tetrahedron integration methods or Gaussian smearing to accelerate convergence [5] [6].
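To make the effect of grid density concrete, the sketch below samples a one-dimensional tight-binding band, E(k) = -2t cos(k), on a Monkhorst-Pack-style grid and compares the occupied-band energy per site with the analytic half-filling value of -2t/π. The model, the hopping `t`, the Fermi level, and the grid sizes are illustrative assumptions. Occupations use a sharp zero-temperature cutoff with no smearing.

```python
import math

def monkhorst_pack_1d(n):
    """Fractional k-coordinates u_r = (2r - n - 1) / (2n) for r = 1..n."""
    return [(2 * r - n - 1) / (2 * n) for r in range(1, n + 1)]

def band_energy_per_site(n_k, t=1.0, fermi=0.0):
    """Occupied-band energy per site for E(k) = -2 t cos(k), lattice constant 1."""
    total = 0.0
    for u in monkhorst_pack_1d(n_k):
        e = -2.0 * t * math.cos(2.0 * math.pi * u)
        if e < fermi:  # sharp occupation: level is either filled or empty
            total += e
    return total / n_k

exact = -2.0 / math.pi  # analytic result for t = 1 at half filling
errors = {n: abs(band_energy_per_site(n) - exact) for n in (4, 8, 16, 32, 64)}

for n, err in errors.items():
    print(n, err)
```

In this toy model the error falls steadily as the grid is refined. Real metals can converge less smoothly because the Fermi surface generally does not align with the grid, which is where the smearing and tetrahedron schemes mentioned above come in.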

The rigorous definition of electronic structure through wavefunctions, Hamiltonians, and the Schrödinger equation provides the theoretical foundation for understanding and predicting the behavior of electrons in matter. The path from fundamental quantum principles to practical computational methods requires careful attention to convergence at multiple levels, including basis set completeness, k-point sampling, and methodological approximations. Recent advances in neural network quantum states, particularly transformer-based frameworks like QiankunNet, offer promising avenues for addressing strongly correlated systems that challenge conventional electronic structure methods [1].

For researchers in drug development and materials design, a thorough understanding of electronic structure convergence principles is essential for producing reliable computational results. The systematic protocols and benchmarking data presented in this guide provide a foundation for making informed decisions about computational parameters and method selection. As electronic structure theory continues to evolve, with machine learning approaches playing an increasingly prominent role, the fundamental importance of wavefunctions, Hamiltonians, and the Schrödinger equation remains undiminished, continuing to illuminate the quantum mechanical nature of matter at the atomic scale.

The Self-Consistent Field (SCF) method is the fundamental computational algorithm for solving the electronic structure problem in quantum chemistry within the Hartree-Fock (HF) and Kohn-Sham density functional theory (KS-DFT) frameworks [7] [8]. It provides a practical approach to approximate the solution of the many-electron Schrödinger equation by transforming it into a nonlinear eigenvalue problem that must be solved iteratively [9]. At its core, the SCF approach treats electrons as independent particles moving in an average field created by all other electrons, effectively implementing a mean-field approximation [8] [9].

The mathematical foundation of the SCF method is expressed through the Roothaan-Hall equations (for restricted closed-shell systems) and the Pople-Nesbet-Berthier equations (for unrestricted open-shell systems) [8]. These formulations yield a generalized eigenvalue problem:

F C = S C E [7]

In this equation, F represents the Fock matrix (or Kohn-Sham matrix in DFT), C is the matrix of molecular orbital coefficients, S is the atomic orbital overlap matrix, and E is a diagonal matrix of orbital energies [7]. The Fock matrix itself is constructed from several components:

F = T + V + J + K [7]

where T is the kinetic energy matrix, V is the external potential, J is the Coulomb matrix representing electron-electron repulsion, and K is the exchange matrix [7]. The critical challenge arises from the fact that both J and K depend on the density matrix P, which itself is constructed from the occupied molecular orbitals (P = Cₒcc Cₒccᵀ), creating a nonlinear problem that must be solved self-consistently [7] [8].

The SCF Iterative Algorithm: Workflow and Core Implementation

The SCF method follows a well-defined iterative procedure to determine the electronic structure consistently. The complete workflow, including the essential acceleration techniques, can be visualized as follows:

Figure 1: The complete SCF iterative workflow with convergence acceleration

Initial Guess Generation

The iterative process begins with generating an initial guess for the density matrix or molecular orbitals. The quality of this initial guess significantly impacts convergence behavior [7]. Several systematic approaches have been implemented in quantum chemistry packages:

- 'minao' guess: Uses a superposition of atomic densities by projecting minimal basis functions onto the orbital basis set [7]

- 'atom' guess: Employs spin-restricted atomic HF calculations with spherically averaged fractional occupations [7]

- 'huckel' guess: Implements a parameter-free Hückel method based on atomic HF calculations to build a Hückel-type matrix [7]

- '1e' guess: The one-electron (core) guess ignores all interelectronic interactions, typically performing poorly for molecular systems [7]

- 'chk' guess: Reads orbitals from a previous calculation checkpoint file, enabling restarts [7]

Core Iteration Procedure

Once initialized, the SCF method proceeds through these fundamental steps [7] [9]:

- Construct the Fock/Kohn-Sham matrix using the current density matrix

- Solve the generalized eigenvalue problem F C = S C E to obtain new molecular orbitals and energies

- Form a new density matrix from the occupied molecular orbitals: Pμν = Σᵢ Cμi Cνi, where the sum runs over the occupied orbitals i

- Check for convergence by comparing the change in energy and density matrix to predefined thresholds

- Repeat until self-consistency is achieved

The self-consistency requirement arises because the Fock matrix depends on the density matrix through the Coulomb and exchange terms, which in turn depends on the molecular orbitals obtained by diagonalizing the Fock matrix [7] [8].
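The iteration described above can be sketched on a deliberately tiny model. The example below assumes a two-site system in an orthonormal basis (so S is the identity), one occupied orbital per spin, and a Hubbard-like mean-field Fock matrix whose diagonal is shifted by `u` times the site occupation. The model and all parameter values are hypothetical and chosen only to make the loop runnable without external libraries.

```python
import math

def lowest_eigenpair(a, b, d):
    """Lowest eigenvalue and normalised eigenvector of [[a, b], [b, d]], b != 0."""
    mean, half_diff = 0.5 * (a + d), 0.5 * (a - d)
    lam = mean - math.hypot(half_diff, b)
    v = (b, lam - a)
    norm = math.hypot(*v)
    return lam, (v[0] / norm, v[1] / norm)

def run_scf(eps=(0.0, 0.5), t=1.0, u=1.0, tol=1e-10, max_cycles=100):
    p = (0.5, 0.5)  # initial guess: equal occupation of both sites
    for cycle in range(1, max_cycles + 1):
        # 1. Build the Fock matrix from the current density
        f11 = eps[0] + u * p[0]
        f22 = eps[1] + u * p[1]
        # 2. Solve the eigenvalue problem (S = identity in this model)
        lam, c = lowest_eigenpair(f11, -t, f22)
        # 3. Form the new density from the occupied orbital
        p_new = (c[0] ** 2, c[1] ** 2)
        # 4. Check convergence on the change in the density
        change = max(abs(p_new[0] - p[0]), abs(p_new[1] - p[1]))
        p = p_new
        if change < tol:
            return p, lam, cycle
    raise RuntimeError("SCF did not converge")

density, orbital_energy, n_cycles = run_scf()
print(density, orbital_energy, n_cycles)
```

Production codes also monitor the total energy and usually accelerate this plain fixed-point iteration, but the build, diagonalise, update, and check cycle is the same.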

Convergence Challenges and Acceleration Techniques

SCF calculations frequently encounter convergence difficulties, particularly for systems with small HOMO-LUMO gaps, open-shell configurations, transition metals, and dissociating bonds [10]. Several mathematical techniques have been developed to address these challenges.

Convergence Acceleration Algorithms

Table 1: SCF Convergence Acceleration Methods

| Method | Principle | Applications | Advantages | Limitations |
|---|---|---|---|---|
| DIIS (Direct Inversion in the Iterative Subspace) [7] [11] | Extrapolates Fock matrix by minimizing norm of [F,PS] commutator | General purpose, default in many codes | Fast convergence for well-behaved systems | May diverge for difficult cases |
| EDIIS/ADIIS [7] | Energy-based DIIS variants | Problematic systems | Improved stability | More computationally expensive |
| SOSCF (Second-Order SCF) [7] | Uses orbital Hessian for quadratic convergence | Systems with small gaps | Rapid convergence near solution | Higher memory requirements |
| Damping [7] | Mixes old and new Fock matrices: Fmix = αFold + (1-α)Fnew | Initial iterations, oscillating solutions | Stabilizes early iterations | Slows convergence |
| Level Shifting [7] [10] | Artificially increases virtual orbital energies | Systems with small HOMO-LUMO gaps | Prevents occupation flipping | Affects properties involving virtual orbitals |
| Smearing [7] [10] | Uses fractional occupations with temperature broadening | Metallic systems, near-degeneracies | Improves convergence | Alters total energy, requires careful parameter choice |
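The stabilising effect of damping can be seen on a one-variable fixed-point problem. The sketch below uses a hypothetical two-site mean-field model in which the SCF update reduces to a map on the occupation `x` of one site. Because the model's Fock matrix is linear in the density, mixing densities with weight `alpha` on the old iterate is equivalent to mixing Fock matrices. The interaction strength and other parameters are illustrative and chosen so that the undamped iteration oscillates.

```python
import math

def scf_map(x, u=4.0, t=1.0, gap=0.5):
    """One undamped SCF update for the occupation x of site 1."""
    delta = -0.5 * gap + u * (x - 0.5)  # half the difference of the Fock diagonals
    return 0.5 * (1.0 - delta / math.hypot(delta, t))

def iterate(alpha, x0=0.5, tol=1e-10, max_cycles=200):
    """x_next = alpha * x_old + (1 - alpha) * scf_map(x_old); alpha = 0 is undamped."""
    x = x0
    for cycle in range(1, max_cycles + 1):
        x_next = alpha * x + (1.0 - alpha) * scf_map(x)
        if abs(x_next - x) < tol:
            return x_next, cycle
        x = x_next
    return x, None  # None signals that the iteration did not converge

x_plain, cycles_plain = iterate(alpha=0.0)
x_damped, cycles_damped = iterate(alpha=0.5)
print(cycles_plain, x_damped, cycles_damped)
```

Without damping the occupation bounces between two values and never settles. With half of the old iterate mixed in, the same map converges in a few dozen cycles, at the price of smaller steps per iteration.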

Advanced Preconditioning Techniques

For particularly challenging systems, advanced preconditioning techniques can significantly improve convergence by addressing the ill-conditioning of the SCF Jacobian [11]:

- Kerker Preconditioner: Specifically designed for metallic systems with "charge sloshing" issues, suppressing problematic small-q modes: PKerker(q) = q²/(q² + 4πẑ) [11]

- Elliptic Preconditioner: Solves an elliptic PDE with spatially varying coefficients, effective for heterogeneous systems containing metal/insulator/vacuum regions [11]

- LDOS-Based Preconditioner: Uses the local density of states to approximate the susceptibility operator, adapting to different material characteristics [11]
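The way a Kerker-type factor suppresses long-wavelength components can be illustrated in a few lines. The sketch below assumes the common form q² / (q² + q0²), where the screening parameter `q0` is a hypothetical stand-in for the constant term in the denominator. The residual amplitudes and wavevector magnitudes are illustrative.

```python
def kerker_factor(q, q0):
    """Kerker-type damping factor q^2 / (q^2 + q0^2) for wavevector magnitude q."""
    return q * q / (q * q + q0 * q0)

def precondition(residual_by_q, q0=1.5):
    """Scale each Fourier component of a density residual.
    Keys are |q| values, values are residual amplitudes."""
    return {q: kerker_factor(q, q0) * r for q, r in residual_by_q.items()}

# Hypothetical residual with equal amplitude at small, medium, and large |q|
residual = {0.1: 1.0, 1.0: 1.0, 10.0: 1.0}
scaled = precondition(residual)

for q in sorted(scaled):
    print(q, scaled[q])
```

The small-q component, which is the one responsible for charge sloshing, is reduced by more than two orders of magnitude, while the large-q component passes through almost unchanged.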

The relationships between these acceleration techniques and their application points within the SCF workflow are visualized below:

Figure 2: Diagnostic and acceleration technique selection guide for SCF convergence problems

Experimental Protocols for Challenging Systems

Protocol for Open-Shell Transition Metal Complexes

Transition metal complexes with localized d- or f-electrons present significant SCF convergence challenges [10]. The following protocol provides a systematic approach:

Initial Setup

Initial Guess Generation

SCF Procedure

- Apply moderate damping (mixing = 0.05-0.1) for initial 10-20 cycles [7] [10]

- Use level shift of 0.1-0.3 Hartree to prevent flipping between nearly degenerate states [7]

- Enable DIIS acceleration after initial equilibration cycles (start cycle 5-10) [7]

- Increase DIIS subspace dimension to 15-25 vectors for improved stability [10]

Fallback Strategies

Protocol for Systems with Small HOMO-LUMO Gaps

Systems with vanishing band gaps (metals, narrow-gap semiconductors, or transition states) require specialized treatment:

Initialization

- Use Hückel guess or superposition of atomic potentials [7]

- Consider fractional initial occupations for near-degenerate orbitals
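Fractional occupations for near-degenerate orbitals are often generated with a Fermi-Dirac distribution. The sketch below finds the chemical potential by bisection so that the occupations sum to the electron count. The orbital energies, smearing width, and electron count are hypothetical, and a spin degeneracy of two is assumed.

```python
import math

def fermi_dirac(e, mu, sigma):
    """Occupation (0 to 1) of a level at energy e for chemical potential mu."""
    x = (e - mu) / sigma
    if x > 40.0:
        return 0.0
    if x < -40.0:
        return 1.0
    return 1.0 / (1.0 + math.exp(x))

def occupations(levels, n_electrons, sigma, spin_degeneracy=2.0):
    """Bisect on mu until the occupations sum to n_electrons."""
    lo = min(levels) - 10.0 * sigma - 1.0
    hi = max(levels) + 10.0 * sigma + 1.0
    for _ in range(200):
        mu = 0.5 * (lo + hi)
        count = sum(spin_degeneracy * fermi_dirac(e, mu, sigma) for e in levels)
        if count < n_electrons:
            lo = mu
        else:
            hi = mu
    return mu, [spin_degeneracy * fermi_dirac(e, mu, sigma) for e in levels]

# Hypothetical orbital energies in Hartree with a near-degenerate frontier pair
levels = [-0.50, -0.30, -0.101, -0.099, 0.20]
mu, occ = occupations(levels, n_electrons=6, sigma=0.01)
print(mu, occ)
```

The two near-degenerate levels end up sharing the last two electrons almost equally instead of one being full and the other empty, which removes the abrupt occupation switching that can stall convergence.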

Convergence Acceleration

Parameter Tuning

The Scientist's Toolkit: Essential Computational Reagents

Table 2: Essential Research Reagent Solutions for SCF Calculations

| Reagent Category | Specific Examples | Function | Application Context |
|---|---|---|---|
| Initial Guess Generators | MINAO, SAP, Hückel, VSAP [7] | Provides starting point for SCF iteration | Determines initial convergence behavior; system-specific selection critical |
| Density Mixers | Linear mixing, Pulay DIIS, EDIIS, ADIIS [7] [11] | Combines density/Fock matrices from previous iterations | Acceleration of convergence; DIIS is standard approach |
| Preconditioners | Kerker, Elliptic, LDOS-based [11] | Improves conditioning of SCF update equations | Essential for metallic systems and charge sloshing |
| Occupancy Smearers | Fermi-Dirac, Gaussian, Methfessel-Paxton [7] [10] | Applies fractional occupations to near-degenerate states | Systems with small gaps; smearing width is key parameter |
| Orbital Optimizers | Second-Order SCF (SOSCF), CIAH method [7] | Uses orbital Hessian for improved convergence | Final convergence stages; higher computational cost |
| Stability Analyzers | Internal/external stability analysis [7] | Checks if solution corresponds to minimum | Post-SCF validation; detects saddle points |

Advanced Methodologies and Emerging Approaches

Stability Analysis and Solution Validation

Even after apparent SCF convergence, the obtained wavefunction may not represent the true ground state [7]. Stability analysis is essential for validating solutions:

- Internal stability: Checks whether the solution is a minimum within the same variational space (e.g., RHF → RHF) [7]

- External stability: Tests if energy can be lowered by expanding to a larger variational space (e.g., RHF → UHF) [7]

- Implementation: Construct the electronic Hessian matrix and diagonalize to identify negative eigenvalues indicating instabilities [7]

Novel Mathematical Formulations

Recent research has introduced innovative perspectives on the SCF method:

- Online PCA Approach: Reformulates SCF as principal component analysis for non-stationary time series, where distribution and principal components mutually update until equilibrium [12]

- Tangent-Angle Matrix Framework: Measures errors between subspaces via canonical angle tangents, providing precise local and asymptotic contraction factors [11]

- Stochastic SCF Methods: Employ trace estimators and Krylov-subspace approximations to avoid full diagonalization for large systems [11]

These emerging approaches offer promising directions for addressing persistent convergence challenges in complex electronic structure calculations, particularly for systems with strong correlation, metallic character, or large scale.

The accurate prediction of a molecule's electronic structure is a cornerstone of computational chemistry, with broad implications for drug discovery, materials science, and catalysis. This prediction hinges on the convergence between calculated properties and physical reality, a process governed by two fundamental concepts: electron correlation and the HOMO-LUMO gap. Electron correlation describes the intricate, non-random interactions between electrons in a quantum system, accounting for their Coulombic repulsion. The energy associated with these interactions, termed the correlation energy, is formally defined as the difference between the exact, non-relativistic energy of a system and its energy calculated at the Hartree-Fock (HF) limit, where electrons move in an average field of others [13] [14]. The HOMO-LUMO gap is the energy difference between the Highest Occupied Molecular Orbital (HOMO) and the Lowest Unoccupied Molecular Orbital (LUMO). This gap is a critical indicator of a molecule's stability, reactivity, and optoelectronic properties [15].
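Written out, the two definitions in the paragraph above take the following form. The symbols (E_{\text{corr}}), (\Delta_{\text{HL}}), and (\varepsilon) are notation chosen here for illustration:

```latex
E_{\text{corr}} = E_{\text{exact}} - E_{\text{HF}}^{\text{limit}} \le 0,
\qquad
\Delta_{\text{HL}} = \varepsilon_{\text{LUMO}} - \varepsilon_{\text{HOMO}}
```

The correlation energy is non-positive because the Hartree-Fock energy is a variational upper bound to the exact non-relativistic energy.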

The HF method, while recovering approximately 99% of the total energy, fails to describe the instantaneous correlation of electron motion due to its mean-field approximation. This omission is chemically significant, as the missing ~1% of energy is on the order of chemical reaction energies [16] [14]. Electron correlation is often categorized into:

- Static Correlation: Crucial for systems with (near-)degenerate ground states, requiring a multi-configurational description.

- Dynamical Correlation: Accounts for the correlated movement of electrons due to their instantaneous repulsion, which is missing in a single Slater determinant [13].

The HOMO-LUMO gap is deeply intertwined with these correlation effects. The energies of the frontier orbitals (HOMO and LUMO) are not single-particle energies but are influenced by the correlated electron field. The gap provides information on a molecule's excitation energy, chemical hardness, and its propensity to participate in charge transfer, directly influencing properties like redox potentials and spectroscopic behavior [17] [18] [15]. The convergence of calculated electronic properties towards their true values therefore depends critically on the accurate treatment of both the HOMO-LUMO gap and electron correlation.
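Extracting the gap from a list of orbital energies is straightforward for a closed-shell system. The sketch below assumes doubly occupied orbitals filled in order of increasing energy. The orbital energies are hypothetical values in Hartree, and the hardness estimate uses the common frontier-orbital approximation of half the gap.

```python
HARTREE_TO_EV = 27.211386

def homo_lumo_gap(orbital_energies, n_electrons):
    """Return (HOMO, LUMO, gap) for a closed-shell system with two electrons per orbital."""
    if n_electrons % 2 != 0:
        raise ValueError("closed-shell sketch expects an even electron count")
    energies = sorted(orbital_energies)
    n_occ = n_electrons // 2
    if not 0 < n_occ < len(energies):
        raise ValueError("need at least one occupied and one virtual orbital")
    homo, lumo = energies[n_occ - 1], energies[n_occ]
    return homo, lumo, lumo - homo

# Hypothetical orbital energies in Hartree for a ten-electron molecule
energies_hartree = [-20.5, -1.3, -0.7, -0.55, -0.49, 0.18, 0.27]

homo, lumo, gap = homo_lumo_gap(energies_hartree, n_electrons=10)
gap_ev = gap * HARTREE_TO_EV
hardness_ev = 0.5 * gap_ev  # frontier-orbital estimate of chemical hardness

print(homo, lumo, gap_ev, hardness_ev)
```

Gaps obtained this way inherit the limitations of the underlying method, so values from Hartree-Fock and from different DFT functionals can differ substantially for the same molecule.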

Computational Methodologies and Protocols

Accurately capturing electron correlation and predicting the HOMO-LUMO gap require a hierarchy of computational methods, each with specific protocols.

Post-Hartree-Fock Correlation Methods

To overcome the limitations of HF theory, several post-Hartree-Fock methods have been developed to incorporate electron correlation [13] [14].

- Configuration Interaction (CI): The CI wavefunction is constructed as a linear combination of the HF ground-state Slater determinant with excited-state determinants. The coefficients are variationally optimized to minimize the energy.

- Protocol: The molecular orbitals from an HF calculation are used to generate excited configurations (e.g., single, double excitations). A Hamiltonian matrix is constructed and diagonalized to obtain the CI wavefunction and energy. Full CI is exact within the chosen basis set but is computationally prohibitive for all but the smallest systems.

- Møller-Plesset Perturbation Theory: This is a non-variational approach that treats electron correlation as a perturbation to the HF Hamiltonian. The second-order correction (MP2) is widely used for its favorable cost-to-accuracy ratio.

- Coupled Cluster (CC): This method forms an exponential ansatz of the wavefunction (e.g., CCSD(T)) and is considered the "gold standard" for single-reference systems, providing high accuracy at a greater computational cost than MP2.

- Density Functional Theory (DFT): DFT differs fundamentally from wavefunction-based methods and is not a post-HF method in the strict sense. It works with a single Slater determinant and accounts for correlation through an exchange-correlation (XC) functional, which is a functional of the electron density. For a given basis set, the accuracy of a DFT calculation is determined by the quality of its XC functional [16].
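To illustrate the CI protocol on the smallest possible case, the sketch below diagonalizes a 2x2 Hamiltonian matrix spanned by a reference determinant and one excited determinant. The matrix elements are hypothetical values in hartree, and `two_state_ci` is an illustrative helper, not part of any quantum chemistry package.

```python
import math


def two_state_ci(e_ref, e_exc, coupling):
    """Lowest eigenvalue and normalized coefficients of a 2x2 CI matrix.

    H = [[e_ref, coupling], [coupling, e_exc]] in the basis of the reference
    determinant and one excited determinant (assumes e_ref <= e_exc).
    """
    mean = 0.5 * (e_ref + e_exc)
    half_gap = 0.5 * (e_exc - e_ref)
    e_ci = mean - math.sqrt(half_gap**2 + coupling**2)
    if coupling == 0.0:
        return e_ci, (1.0, 0.0)  # no mixing: the reference is already an eigenvector
    # Eigenvector solves (e_ref - e_ci) * c_ref + coupling * c_exc = 0.
    c_ref, c_exc = coupling, e_ci - e_ref
    norm = math.hypot(c_ref, c_exc)
    return e_ci, (c_ref / norm, c_exc / norm)


# Hypothetical matrix elements (hartree).
E_REF, E_EXC, COUPLING = -1.8310, -0.2500, 0.1810
e_ci, (c_ref, c_exc) = two_state_ci(E_REF, E_EXC, COUPLING)
print(f"E_CI = {e_ci:.5f}, E_corr = {e_ci - E_REF:.5f}")
print(f"weight of reference determinant = {c_ref**2:.4f}")
```

Because the coefficients are optimized variationally, the CI energy can only be lower than or equal to the reference energy. The lowering is the correlation energy recovered within this two-determinant space.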

Table 1: Comparison of Electronic Structure Methods for Energy and HOMO-LUMO Gap Calculation.

| Method | Theoretical Foundation | Handles Electron Correlation? | Computational Cost | Typical Application |
|---|---|---|---|---|
| Hartree-Fock (HF) | Wavefunction Theory | No (Only Fermi correlation) | Low | Starting point for post-HF methods |
| Density Functional Theory (DFT) | Density Functional Theory | Yes (Approximate via XC functional) | Low to Medium | Large systems, geometry optimization, screening |
| Møller-Plesset 2nd Order (MP2) | Wavefunction Theory | Yes (Perturbative) | Medium | Dynamical correlation for small/medium systems |
| Coupled Cluster (CCSD(T)) | Wavefunction Theory | Yes (High accuracy) | Very High | Benchmark calculations for small molecules |
| Configuration Interaction (CI) | Wavefunction Theory | Yes (Variational) | Very High (Full CI) | Multi-reference systems, benchmark studies |

High-Throughput Workflow for HOMO-LUMO Gap Prediction

Recent advances leverage machine learning (ML) to predict the HOMO-LUMO gap for high-throughput screening, circumventing the cost of direct quantum mechanical (QM) calculations [17] [19] [20]. The following workflow, synthesized from recent studies, details this protocol.

Diagram 1: High-throughput HOMO-LUMO gap prediction workflow.

Detailed Experimental Protocol:

- Dataset Curation: A large and diverse set of molecules is collected, represented as SMILES (Simplified Molecular Input Line Entry System) strings. For example, the "Ring Vault" dataset contains over 200,000 cyclic molecules [17].

- 3D Conformer Generation and Optimization: SMILES strings are converted into 3D molecular structures. Tools like Auto3D (combined with the AIMNet2 model) or RDKit are used to generate and optimize the lowest-energy conformations [17].

- Quantum Mechanical (QM) Calculation on a Subset:

- A statistically significant subset of molecules (e.g., 10-20% of the dataset) is selected for QM calculation to generate training data.

- Level of Theory: Common methods include:

- Density Functional Theory (DFT): For high accuracy, functionals like ωB97M-D3(BJ) with a def2-TZVPP basis set are used. Single-point calculations are performed in multiple charge states (-1, 0, +1) to derive properties like ionization potential (IP) and electron affinity (EA) [17].

- Extended Tight-Binding (xTB): Methods like GFN2-xTB offer a faster, semi-empirical alternative for large-scale calculations, often with Boltzmann averaging over multiple conformers [19].

- Calculated Properties: The key outputs are HOMO and LUMO energies (from which the gap is derived), vertical IP, vertical EA, and redox potentials. Solvation effects can be incorporated using implicit solvation models like SMD [17].

- Featurization: Molecular structures are converted into numerical features (descriptors) for ML models. This can be:

- Machine Learning Model Training and Validation:

- The featurized dataset is split into training, validation, and test sets.

- Multiple ML models are trained and compared. State-of-the-art approaches use 3D-enhanced models like AIMNet2, Graph Attention Networks (GAT), and ensemble methods like Gradient Boosting Regression [17] [19]. The QMugs model is an example trained specifically on drug-like molecules [20].

- Model performance is evaluated using metrics like R² (coefficient of determination) and MAE (Mean Absolute Error). The AIMNet2 model, for instance, has achieved R² > 0.95 for key electronic properties [17].

- High-Throughput Prediction: The trained and validated model is used to predict the HOMO-LUMO gaps and other electronic properties for the entire molecular database, enabling rapid virtual screening.
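Two arithmetic steps of the workflow above can be sketched in a few lines: deriving the vertical IP and EA from single-point energies in the three charge states, and scoring ML predictions against QM reference values with MAE and R². This is a minimal sketch under stated assumptions: all function names and numbers are hypothetical, and the difference IP − EA is the fundamental gap, which is related to but not identical with the orbital-energy HOMO-LUMO gap.

```python
def vertical_ip_ea(e_cation, e_neutral, e_anion):
    """Vertical IP and EA from single-point energies at the neutral geometry."""
    ip = e_cation - e_neutral   # energy required to remove one electron
    ea = e_neutral - e_anion    # energy released on attaching one electron
    return ip, ea


def mean_absolute_error(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r_squared(y_true, y_pred):
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Hypothetical single-point energies (eV) for charge states +1, 0, -1.
ip, ea = vertical_ip_ea(e_cation=-91.8, e_neutral=-100.0, e_anion=-100.9)
print(f"IP = {ip:.2f} eV, EA = {ea:.2f} eV, IP - EA = {ip - ea:.2f} eV")

# Hypothetical QM reference gaps and ML predictions (eV) on a held-out test set.
gap_qm = [3.1, 4.5, 5.2, 2.8]
gap_ml = [3.0, 4.7, 5.1, 2.9]
mae = mean_absolute_error(gap_qm, gap_ml)
r2 = r_squared(gap_qm, gap_ml)
print(f"MAE = {mae:.3f} eV, R^2 = {r2:.3f}")
```

Reporting both metrics is useful because MAE carries the physical unit of the property, while R² indicates how much of the variance across the dataset the model explains.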

Applications in Scientific Research

The convergence of accurate electron correlation treatment and HOMO-LUMO gap prediction is critical in several cutting-edge research domains.

Rational Drug Design

In drug discovery, the HOMO-LUMO gap is a vital descriptor for predicting the reactivity and stability of potential drug candidates. A study on designing Scutellarein derivatives for triple-negative breast cancer exemplifies this. Researchers used Density Functional Theory (DFT) to compute the Frontier Molecular Orbitals (HOMO and LUMO) of the derivatives. They then used the energy gap, along with other quantum mechanical descriptors, to approximate chemical reactivity and stability before conducting molecular docking and dynamics simulations. This integrated computational approach helps identify derivatives with optimal binding affinity and metabolic stability early in the discovery process [18].

Functional Materials Discovery

The high-throughput screening framework outlined above applies equally well to materials science. The "Ring Vault" dataset and associated ML models enable the rapid identification of cyclic molecules with tailored electronic properties for organic electronics. By predicting properties like HOMO-LUMO gap, ionization potential, and redox potentials, researchers can fine-tune materials for applications in organic photovoltaics (OPVs), organic light-emitting diodes (OLEDs), and organic field-effect transistors (OFETs). Strategic ring modifications allow for the adjustment of π-conjugation and molecular packing, which are crucial for charge transport in organic semiconductors [17].

Table 2: Key Computational Tools for Electronic Structure and HOMO-LUMO Gap Prediction.

| Tool / Resource Name | Type / Category | Primary Function in Research |
|---|---|---|
| ORCA [17] | Quantum Chemistry Software | Performing ab initio and DFT calculations to obtain benchmark HOMO/LUMO energies and correlation energies. |
| AIMNet2 [17] | Machine Learning Interatomic Potential | A 3D-enhanced ML model for fast and accurate prediction of molecular properties, including HOMO-LUMO gaps, after being fine-tuned on QM data. |
| Auto3D [17] | Computational Chemistry Tool | Automatically generating low-energy 3D molecular conformations from SMILES strings, which is critical for 3D-based ML models and QM calculations. |
| RDKit [19] | Cheminformatics Library | Calculating 2D molecular descriptors, handling molecular data, and performing basic conformer generation for featurization in ML pipelines. |
| QMugs HOMO-LUMO Model [20] | Pre-trained ML Model | A specialized model trained on drug-like molecules from the ChEMBL database, allowing for direct prediction of HOMO-LUMO gaps for pharmaceutical compounds. |
| GFN2-xTB [19] | Semi-empirical Quantum Method | Rapidly computing electronic structures and HOMO-LUMO gaps for large molecular datasets or as a pre-screening step before higher-level DFT. |

The journey toward convergence in electronic structure calculations is navigated by mastering two key physical concepts: the HOMO-LUMO gap and electron correlation. The HOMO-LUMO gap serves as an accessible and computationally tractable proxy for critical molecular properties like stability and reactivity. However, its accurate prediction, particularly for molecules with complex electronic structures, is intrinsically linked to the sophisticated treatment of electron correlation, whether through advanced wavefunction-based methods, density functional approximations, or machine-learned potentials. The emergence of high-throughput workflows that combine robust QM calculations on subsets of data with powerful 3D-enhanced ML models represents a paradigm shift. This synergy is breaking down computational barriers, enabling the rational design of next-generation therapeutic agents and functional materials by providing researchers with an unprecedented view of the electronic landscape.

The pursuit of accurate and computationally tractable methods for solving the electronic Schrödinger equation represents a central challenge in quantum chemistry and condensed matter physics. This whitepaper details the historical development and theoretical foundations of two pivotal methodologies: the Hartree-Fock (HF) method and Density Functional Theory (DFT). Framed within broader research on electronic structure convergence principles, this analysis traces the conceptual evolution from the wavefunction-based approach of HF to the electron density-based formalism of DFT. For researchers and drug development professionals, understanding this progression is essential, as these methods form the computational backbone for predicting molecular properties, reaction mechanisms, and drug-target interactions in silico. The transition from HF to DFT was driven by the need to balance electronic correlation effects with computational efficiency, a trade-off that continues to inform the selection of electronic structure methods in applied research.

The Hartree-Fock Method: Foundations and Limitations

Historical Context and Theoretical Formulation

The Hartree-Fock method originated in the late 1920s, shortly after the discovery of the Schrödinger equation, as one of the first ab initio approaches for approximating the wavefunction and energy of a quantum many-body system [9]. The method is named for Douglas Hartree and Vladimir Fock. Hartree's initial method, termed the self-consistent field (SCF) approach, treated electrons as moving in an average field created by the nucleus and other electrons but lacked a proper quantum mechanical treatment of electron antisymmetry [9]. In 1930, Slater and Fock independently recognized this deficiency and introduced the key insight that the many-electron wavefunction must be antisymmetric with respect to the exchange of any two electrons, in accordance with the Pauli exclusion principle [9]. This led to the representation of the wavefunction as a Slater determinant—a determinant of one-particle orbitals that automatically satisfies the antisymmetry requirement [9] [21].

The HF method approximates the exact N-body wavefunction of a system with a single Slater determinant for fermions [9]. For a closed-shell system, the spatial Hartree-Fock equation takes the form of an eigenvalue problem:

[ f(\mathbf{r}_1)\psi_i(\mathbf{r}_1) = \epsilon_i\psi_i(\mathbf{r}_1) ]

Here, ( f(\mathbf{r}_1) ) is the Fock operator, an effective one-electron operator, ( \psi_i(\mathbf{r}_1) ) is a spatial orbital, and ( \epsilon_i ) is the corresponding orbital energy [21]. The closed-shell Fock operator is

[ f(1) = h(1) + \sum_{a=1}^{N/2} \left( 2J_a(1) - K_a(1) \right) ]

The HF method, as usually applied, rests on five major simplifications [9] [21]:

- The Born-Oppenheimer approximation is assumed.

- Relativistic effects are typically neglected.

- The solution is expressed in a finite basis set.

- The wavefunction is described by a single Slater determinant.

- The mean-field approximation is used, where each electron experiences the average field of the others.

The terms in the Fock operator are: ( h(1) ) (the one-electron core Hamiltonian encoding kinetic energy and nuclear attraction), ( J_a(1) ) (the Coulomb operator representing the classical electrostatic repulsion with the electrons in orbital ( a )), and ( K_a(1) ) (the non-local exchange operator arising from the antisymmetry of the wavefunction, with no classical analogue) [21]. The HF equations are solved iteratively via the Self-Consistent Field (SCF) procedure, where initial guess orbitals are progressively refined until the changes in energy and wavefunction between iterations fall below chosen thresholds [9] [21].

Key Limitations and the Electron Correlation Problem

Despite its theoretical importance, the Hartree-Fock method possesses a critical limitation: it neglects electron correlation, specifically Coulomb correlation [9] [22]. While the method fully accounts for Fermi correlation (exchange) via the Slater determinant, the mean-field approximation means that the instantaneous, correlated motion of electrons is not captured [9] [21]. Each electron moves in a static average field, ignoring the fact that electrons actively avoid each other due to Coulomb repulsion. Consequently, the Hartree-Fock energy is always an upper bound to the true ground-state energy, and the method is "insufficiently accurate to make accurate quantitative predictions" for many chemical properties [22]. This inadequacy manifests in its poor description of dispersion forces (London dispersion), reaction barrier heights, and bond dissociation energies [9] [23].

The Rise of Density Functional Theory

Theoretical Foundations: The Hohenberg-Kohn Theorems

Density Functional Theory emerged as a conceptually different approach to the many-electron problem, bypassing the complex N-electron wavefunction in favor of the electron density, ( n(\mathbf{r}) ), a simple function of only three spatial coordinates [24]. The theoretical foundation of DFT rests on two fundamental theorems proved by Hohenberg and Kohn in 1964 [24] [25]:

- The First Hohenberg-Kohn Theorem establishes that the ground-state electron density ( n_0(\mathbf{r}) ) uniquely determines the external potential ( V_{\text{ext}}(\mathbf{r}) ) (up to an additive constant), and hence all properties of the ground state, including the many-body wavefunction [24]. This eliminates the need for the 3N-dimensional wavefunction.

- The Second Hohenberg-Kohn Theorem provides a variational principle for the energy. It states that a universal energy functional ( E[n] ) exists, and that the correct ground-state density minimizes this functional [24].

While these theorems confirm the existence of an exact DFT, they provide no guidance on constructing the energy functional, particularly its crucial but unknown exchange-correlation (XC) term, ( E_{\text{XC}}[n] ), which must contain all non-classical electron interaction effects and the difference between the kinetic energy of the real system and a fictitious non-interacting system [24].

The Kohn-Sham Ansatz: Mapping to a Non-Interacting System

The practical breakthrough that transformed DFT from a formal theory into a widely applicable computational tool was the Kohn-Sham ansatz introduced in 1965 [25]. Kohn and Sham proposed replacing the original, interacting system with a fictitious system of non-interacting electrons that experiences a modified potential, chosen such that its ground-state density equals that of the real, interacting system [24] [25]. This mapping decomposes the total energy functional into tractable components:

[ E[n] = T_s[n] + E_{\text{ext}}[n] + E_{\text{H}}[n] + E_{\text{XC}}[n] ]

Here, ( T_s[n] ) is the kinetic energy of the non-interacting reference system, ( E_{\text{ext}}[n] ) is the electron-nuclear attraction energy, ( E_{\text{H}}[n] ) is the classical Hartree (electrostatic) energy, and ( E_{\text{XC}}[n] ) is the exchange-correlation functional, which captures all remaining many-body effects [24]. The Kohn-Sham equations, which resemble one-electron Schrödinger equations, are derived variationally:

[ \left[ -\frac{1}{2} \nabla^2 + V_{\text{ext}}(\mathbf{r}) + V_{\text{H}}(\mathbf{r}) + V_{\text{XC}}(\mathbf{r}) \right] \phi_i(\mathbf{r}) = \epsilon_i \phi_i(\mathbf{r}) ]

In these equations, ( V_{\text{XC}}(\mathbf{r}) = \frac{\delta E_{\text{XC}}[n]}{\delta n(\mathbf{r})} ) is the exchange-correlation potential, and the Kohn-Sham orbitals ( \phi_i(\mathbf{r}) ) are used to construct the density: ( n(\mathbf{r}) = \sum_{i=1}^N |\phi_i(\mathbf{r})|^2 ) [23] [24]. Like the HF equations, the Kohn-Sham equations must be solved self-consistently because the potentials depend on the density.
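The construction of the density from occupied orbitals can be checked numerically. The sketch below is illustrative only: it uses one-dimensional particle-in-a-box functions as stand-ins for Kohn-Sham orbitals (an assumption made so the example needs no quantum chemistry code), places one electron in each of the three lowest orbitals, and verifies that the density integrates to the electron count.

```python
import math


def box_orbital(k, x, length=1.0):
    """k-th particle-in-a-box orbital, a stand-in for a Kohn-Sham orbital."""
    return math.sqrt(2.0 / length) * math.sin(k * math.pi * x / length)


def density(x, n_occupied, length=1.0):
    """n(x) = sum over occupied orbitals of |phi_i(x)|^2, one electron each."""
    return sum(box_orbital(k, x, length) ** 2 for k in range(1, n_occupied + 1))


def integrate_trapezoid(values, spacing):
    """Trapezoid rule on a uniform grid."""
    return spacing * (sum(values) - 0.5 * (values[0] + values[-1]))


n_points = 2001
length = 1.0
spacing = length / (n_points - 1)
grid = [i * spacing for i in range(n_points)]
n_of_x = [density(x, n_occupied=3, length=length) for x in grid]
total = integrate_trapezoid(n_of_x, spacing)
print(f"integrated density = {total:.6f}")
```

The same normalization check, that the integrated density equals the number of electrons, is a common sanity test on the integration grid in production DFT codes.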

Comparative Analysis: Hartree-Fock vs. Density Functional Theory

The table below summarizes the core distinctions between the Hartree-Fock method and Density Functional Theory.

Table 1: A comparative analysis of the Hartree-Fock method and Density Functional Theory.

| Feature | Hartree-Fock (HF) Method | Density Functional Theory (DFT) |
|---|---|---|
| Fundamental Variable | Many-electron wavefunction, ( \Psi ) | Electron density, ( n(\mathbf{r}) ) |
| Theoretical Foundation | Variational principle applied to a Slater determinant | Hohenberg-Kohn theorems and Kohn-Sham ansatz |
| Treatment of Exchange | Exact, non-local exchange via ( K ) operator | Approximate, via ( E_{\text{XC}}[n] ) functional |
| Treatment of Correlation | Neglects Coulomb correlation entirely | Approximate, via ( E_{\text{XC}}[n] ) functional |
| Computational Cost | Formally ( O(N^4) ) due to 4-index integrals | Formally ( O(N^3) ), similar to HF for a given system |
| Scalability | Less efficient for large systems | More efficient, enabling larger system studies |
| Typical Accuracy | Qualitative; overestimates band gaps, poor for dispersion | Can be highly accurate with modern functionals |
| Key Limitation | Missing electron correlation | Unknown exact ( E_{\text{XC}}[n] ) |

The Functional Hierarchy and DFT in Modern Drug Discovery

The Ladder of Approximations

The accuracy of a DFT calculation is entirely dictated by the choice of the exchange-correlation functional ( E_{\text{XC}}[n] ). Over decades, a hierarchy of approximations, often visualized as "Jacob's Ladder," has been developed [23] [25]:

- Local Density Approximation (LDA): The simplest functional, where the energy density depends only on the local value of the density ( n(\mathbf{r}) ). It is exact for a uniform electron gas but often inadequate for molecules [23].

- Generalized Gradient Approximation (GGA): Improves upon LDA by including the gradient of the density ( \nabla n(\mathbf{r}) ) to account for inhomogeneities. Examples include PBE and BLYP. GGA functionals are widely used for molecular property calculations and hydrogen-bonded systems [23].

- Meta-GGA: Incorporates the kinetic energy density or the Laplacian of the density in addition to the density and its gradient, providing better accuracy for atomization energies and molecular systems [25].

- Hybrid Functionals: Mix a fraction of exact (Hartree-Fock) exchange with GGA or meta-GGA exchange and correlation. The famous B3LYP functional is a prime example. Hybrids like B3LYP and PBE0 are widely used for studying reaction mechanisms and molecular spectroscopy with high accuracy [23] [26] [25].
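As a worked example of the hybrid rung, a one-parameter hybrid replaces a fraction ( a ) of the GGA exchange with exact (Hartree-Fock) exchange while keeping the GGA correlation. The general form below is a sketch; the specific value quoted is that of PBE0, which mixes 25% exact exchange with 75% PBE exchange. B3LYP uses a three-parameter mixing scheme and is not covered by this simple form.

```latex
E_{\text{XC}}^{\text{hybrid}}
  = a\,E_{\text{X}}^{\text{HF}} + (1 - a)\,E_{\text{X}}^{\text{GGA}} + E_{\text{C}}^{\text{GGA}},
\qquad a = \tfrac{1}{4}\ \text{for PBE0}
```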

Applications in Drug Design and Formulation Science

DFT has become an indispensable tool in pharmaceutical research, enabling precision design at the molecular level and accelerating the drug development pipeline [23] [26]. Key applications include:

- Drug-Receptor Interaction Modeling: DFT is used to study the interactions between potential drug molecules (ligands) and their biological targets (receptors). By calculating electronic properties and binding energies, researchers can predict binding affinity and selectivity [26].

- Reaction Mechanism Elucidation: DFT allows researchers to locate and characterize the transition states of reactions between drugs and their targets, helping to design mechanism-based inhibitors and understand drug metabolism [26].

- Solid Dosage Form Optimization: In formulation science, DFT clarifies the electronic driving forces behind API–excipient co-crystallization. It can predict reactive sites using Fukui functions, guiding the design of co-crystals with enhanced stability and solubility [23].

- Nanocarrier Design: For nanodelivery systems, DFT enables precise calculation of intermolecular interactions (e.g., van der Waals forces, π-π stacking) to optimize carrier surface properties and improve targeting efficiency [23].
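The Fukui-function analysis mentioned above is often carried out in its condensed, finite-difference form, using atomic partial charges from calculations on the N, N+1, and N−1 electron systems at a fixed geometry. The following sketch assumes that form; the population scheme is not specified in the source, and the charges are hypothetical values for a three-atom fragment.

```python
def condensed_fukui(q_neutral, q_anion, q_cation):
    """Condensed Fukui indices from atomic partial charges.

    f_plus[k]  = q_k(N) - q_k(N+1): susceptibility to nucleophilic attack.
    f_minus[k] = q_k(N-1) - q_k(N): susceptibility to electrophilic attack.
    All three charge sets are assumed to use the same geometry and atom order.
    """
    f_plus = [qn - qa for qn, qa in zip(q_neutral, q_anion)]
    f_minus = [qc - qn for qc, qn in zip(q_cation, q_neutral)]
    return f_plus, f_minus


# Hypothetical partial charges; each set sums to the total molecular charge.
q_n = [-0.40, 0.10, 0.30]          # neutral, N electrons
q_n_plus_1 = [-0.55, -0.40, -0.05]  # anion, N+1 electrons
q_n_minus_1 = [0.10, 0.30, 0.60]    # cation, N-1 electrons

f_plus, f_minus = condensed_fukui(q_n, q_n_plus_1, q_n_minus_1)
site_for_nucleophile = max(range(len(f_plus)), key=f_plus.__getitem__)
site_for_electrophile = max(range(len(f_minus)), key=f_minus.__getitem__)
print("f+ =", [round(v, 2) for v in f_plus], "-> atom", site_for_nucleophile)
print("f- =", [round(v, 2) for v in f_minus], "-> atom", site_for_electrophile)
```

Each set of indices sums to one, so the values can be read as the fraction of the added or removed electron that localizes on each atom.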

Table 2: Key Research Reagent Solutions in Computational Electronic Structure Studies.

| Reagent / Computational Tool | Function in Research |
|---|---|
| Gaussian (Software Package) | A widely used computational chemistry software suite that implements a broad range of quantum chemical methods, including HF, post-HF, and DFT with numerous functionals [25]. |
| Basis Sets | Sets of mathematical functions (e.g., Gaussian-type orbitals) used to expand the molecular orbitals in HF or the Kohn-Sham orbitals in DFT. The choice affects the accuracy and cost of the calculation [9] [23]. |
| B3LYP Functional | A highly popular hybrid exchange-correlation functional in DFT, known for providing good accuracy for a wide range of molecular properties, including geometries and reaction energies [26] [25]. |
| PBE Functional | A popular Generalized Gradient Approximation (GGA) functional, often used in solid-state physics and chemistry for its general reliability without empirical parameters [23]. |
| COSMO Solvation Model | An implicit solvation model that approximates the solvent as a continuous dielectric medium, allowing for more realistic calculations of molecules in solution, which is critical for drug design [23]. |

Workflow and Convergence

The following diagram illustrates the self-consistent field procedure central to both Hartree-Fock and Kohn-Sham Density Functional Theory calculations.
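The loop structure of an SCF calculation can also be sketched in code. The example below is a toy model, not an HF or Kohn-Sham implementation: it treats two electrons on two sites with a Hubbard-like on-site repulsion in a closed-shell mean-field approximation, so that the 2x2 eigenproblem can be solved in closed form. All parameter names and values (`e1`, `e2`, `t`, `u`, `mixing`, `tol`) are assumptions chosen for illustration.

```python
import math


def lowest_orbital(a, b, d):
    """Lowest eigenpair of the symmetric 2x2 matrix [[a, b], [b, d]] (b != 0)."""
    eps = 0.5 * (a + d) - math.sqrt((0.5 * (a - d)) ** 2 + b * b)
    x, y = b, eps - a
    norm = math.hypot(x, y)
    return eps, x / norm, y / norm


def scf_two_site(e1=0.0, e2=1.0, t=1.0, u=2.0, mixing=0.5, tol=1e-10, max_iter=200):
    """Closed-shell mean-field SCF for two electrons on two sites (toy model)."""
    n1 = 1.0            # initial guess: one electron on each site
    energy_old = None
    for iteration in range(1, max_iter + 1):
        # 1. Build the effective one-electron matrix from the current density.
        f11 = e1 + 0.5 * u * n1
        f22 = e2 + 0.5 * u * (2.0 - n1)
        # 2. Diagonalize and doubly occupy the lowest orbital.
        _, c1, c2 = lowest_orbital(f11, -t, f22)
        n1_new = 2.0 * c1 * c1
        # 3. Evaluate the mean-field energy for the new orbital.
        one_electron = 2.0 * (e1 * c1 * c1 + e2 * c2 * c2 - 2.0 * t * c1 * c2)
        two_electron = u * ((0.5 * n1_new) ** 2 + (1.0 - 0.5 * n1_new) ** 2)
        energy = one_electron + two_electron
        # 4. Test convergence of both the density and the energy.
        d_density = abs(n1_new - n1)
        d_energy = abs(energy - energy_old) if energy_old is not None else float("inf")
        if d_density < tol and d_energy < tol:
            return {"converged": True, "iterations": iteration,
                    "n1": n1_new, "energy": energy}
        # 5. Damp the update (linear mixing) to suppress oscillations.
        n1 = (1.0 - mixing) * n1 + mixing * n1_new
        energy_old = energy
    return {"converged": False, "iterations": max_iter, "n1": n1, "energy": energy_old}


result = scf_two_site()
print(result)
```

The five numbered steps mirror the general cycle: build the effective operator from the current density, solve the one-electron problem, form a new density, check convergence, and mix. Production codes typically replace the simple linear mixing used here with more sophisticated accelerators, but the convergence criteria on density and energy changes play the same role.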

The historical progression from the Hartree-Fock method to the foundations of Density Functional Theory marks a paradigm shift in computational electronic structure theory. The journey began with Hartree-Fock, which provided a robust but incomplete mean-field framework based on the N-electron wavefunction. Its critical failure to account for electron correlation spurred the development of post-Hartree-Fock methods, but these were often computationally prohibitive. The seminal work of Hohenberg, Kohn, and Sham established DFT as a formally exact and computationally efficient alternative, recasting the problem in terms of the electron density. While the accuracy of modern DFT calculations hinges on the approximation used for the unknown exchange-correlation functional, the development of increasingly sophisticated functionals has cemented DFT's role as a cornerstone method in fields ranging from materials science to drug discovery. For researchers concerned with electronic structure convergence, this evolution underscores a continuous effort to balance theoretical rigor with practical computational efficiency, an endeavor that continues to drive methodological innovation today.

Computational Arsenal: From Ab Initio to Machine Learning for Robust Convergence

The prediction of molecular and material properties from first principles relies on solving the electronic Schrödinger equation. This task is fundamentally challenging due to the exponential scaling of the quantum many-body problem. Consequently, the field of computational chemistry has developed a diverse hierarchy of electronic structure methods, each representing a different balance between computational cost and predictive accuracy. Understanding these trade-offs is essential for the rational design of simulations in fields ranging from drug development to materials science and catalysis.

This hierarchy spans from highly efficient but approximate semi-empirical methods to systematically improvable, high-accuracy wavefunction-based approaches. The "cost" of a calculation can be measured in terms of computational time, memory, and disk space requirements. For most methods these scale as a power of the system size N (e.g., formally O(N⁴) for Hartree-Fock), whereas Full Configuration Interaction scales exponentially, O(e^N). "Accuracy" refers to the method's ability to reproduce experimental observables or exact theoretical results. The selection of an appropriate method is therefore a critical step, dictated by the specific scientific question, the size of the system, and the available computational resources. This guide provides an in-depth analysis of this hierarchy, supported by quantitative benchmarks and practical protocols for method selection.

The Methodological Hierarchy: From Force Fields to Coupled Cluster

The landscape of electronic structure methods can be visualized as a pyramid, with the most computationally affordable but approximate methods at the base, and the most accurate but prohibitively expensive methods at the apex. The following diagram illustrates this hierarchy and the logical pathways for method selection based on the target accuracy and available resources.

Figure 1: The hierarchy of electronic structure methods, arranged from low-cost/lower-accuracy to high-cost/high-accuracy. Arrows indicate a typical path for increasing the level of theory in pursuit of greater accuracy.

Categorization of Methods by Cost and Accuracy

Electronic structure methods can be broadly categorized by their theoretical foundation and associated computational scaling. The following table summarizes the key characteristics of the primary methods.

Table 1: Overview of Primary Electronic Structure Methods and Their Characteristics

| Method Class | Representative Methods | Typical Scaling | Key Strengths | Key Limitations |
|---|---|---|---|---|
| Force Fields & Semi-Empirical | HF-3c, PBEh-3c, ωB97X-3c [27] | O(N²) to O(N³) | Very fast; can handle very large systems (millions of atoms) [28]; good for geometry optimization and MD. | Low accuracy; parameters are system-dependent; poor for electron correlation. |
| Density Functional Theory | PBE, B3LYP, M08-HX, ωB97X [29] [27] | O(N³) | Good balance of cost and accuracy for many properties; widely used. | Not systematically improvable; can fail for dispersion, charge transfer, and strongly correlated systems [30]. |
| Wavefunction-Based Post-Hartree-Fock | MP2, CCSD, CCSD(T) [31] [30] | O(N⁵) to O(N⁷) and higher | Systematically improvable (e.g., the "gold standard" CCSD(T)) [31]; high accuracy for energies and properties. | Very high computational cost; limited to small molecules and active spaces. |
| Multireference Methods | CASSCF, CASPT2 [31] | O(e^N) | Essential for bond breaking, diradicals, and excited states with strong correlation. | Extremely high cost; requires expert knowledge to define active space. |
| Specialized & Composite Methods | DMRG, Embedding (QM/MM) [32] [30] | Varies | Allows high-accuracy treatment of a subsystem (e.g., CCSD(T)) embedded in a lower-level environment (e.g., DFT or point charges) [30]. | Complexity of setup; potential errors at the interface between regions. |
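The practical meaning of the formal scaling exponents is easiest to see by asking how much the cost grows when the system size doubles. The sketch below uses the commonly quoted formal exponents (DFT N³, HF N⁴, MP2 N⁵, CCSD N⁶, CCSD(T) N⁷); real implementations differ because of prefactors, integral screening, and reduced-scaling approximations, so the numbers are order-of-magnitude guides only.

```python
# Formal scaling exponents; practical scaling is often lower for large systems.
SCALING_EXPONENTS = {
    "DFT": 3,
    "HF": 4,
    "MP2": 5,
    "CCSD": 6,
    "CCSD(T)": 7,
}


def relative_cost(method, size_factor):
    """Factor by which the formal cost grows when the system grows by size_factor."""
    return size_factor ** SCALING_EXPONENTS[method]


for method in SCALING_EXPONENTS:
    factor = relative_cost(method, 2)
    print(f"{method:8s} cost grows {factor}x when the system size doubles")
```

Doubling the system therefore multiplies a formal N⁴ cost by 16 but a formal N⁷ cost by 128, which is why the highest rungs of the hierarchy are reserved for small molecules or embedded fragments.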

Quantitative Performance Benchmarks

The theoretical scaling of methods is informative, but practical decisions require benchmarking against reliable reference data. The following tables summarize the quantitative accuracy of various methods for two critical chemical properties: spin-state energetics in transition metal complexes and redox potentials in quinones.

Table 2: Benchmarking DFT and Wavefunction Methods for Spin-State Energetics (SSE17 Benchmark Set) [31]

| Method | Mean Absolute Error (kcal/mol) | Maximum Error (kcal/mol) | Performance Assessment |
|---|---|---|---|
| CCSD(T) | 1.5 | -3.5 | Gold standard performance. |
| Double-Hybrid DFT (PWPB95-D3, B2PLYP-D3) | < 3 | < 6 | Very good, recommended for transition metal systems. |
| CASPT2 | > 4 | > 10 | Less accurate than CCSD(T) for this property. |
| Common Hybrid DFT (B3LYP*-D3, TPSSh-D3) | 5 - 7 | > 10 | Poor performance; not recommended for spin-state energetics. |

Table 3: Performance of Computational Workflows for Predicting Redox Potentials of Quinones [29]

| Computational Workflow | RMSE (V) vs. Experiment | Key Finding | Recommended Use |
|---|---|---|---|
| DFT (PBE) // Gas-Phase OPT | 0.072 | Least accurate functional tested. | Not recommended for high accuracy. |
| DFT (PBE) // Gas-Phase OPT + Implicit Solvation SPE | ~0.050 (30% error reduction) | Significant improvement with solvation. | Good cost-to-accuracy ratio for screening. |
| DFT (PBE) // Full Implicit Solvation OPT+SPE | ~0.052 | No added value over gas-phase optimization. | Not cost-effective. |
| Low-Level (SEQM/DFTB) OPT + DFT SPE | Comparable to high-level DFT | Equivalent accuracy at significantly lower cost. | Ideal for high-throughput virtual screening. |

Experimental Protocols for Method Assessment

To ensure reliable and reproducible results, a structured protocol for evaluating and applying electronic structure methods is essential. The following section outlines detailed methodologies for two common scenarios: high-throughput screening and high-accuracy surface chemistry modeling.

Protocol 1: High-Throughput Screening of Electroactive Compounds

This protocol, derived from a study on quinone-based energy storage materials, is designed for efficiently screening thousands to millions of candidate molecules [29].

- Initial Molecular Representation: Begin with a SMILES string for each candidate molecule. This is a text-based representation of the 2D molecular structure.

- Geometry Generation and Initial Optimization:

- Use a SMILES interpreter to generate a 2D molecular structure.

- Convert the 2D structure to a 3D geometry.

- Perform an initial geometry optimization using a fast force field (FF) method like OPLS3e to identify a low-energy 3D conformer.

- Quantum Chemical Geometry Optimization:

- Refine the FF-optimized geometry using a faster, lower-level quantum method. The protocol indicates that optimizations can be performed in the gas-phase using methods like:

- Semi-Empirical Quantum Mechanics (SEQM)

- Density Functional Tight Binding (DFTB)

- High-Fidelity Single-Point Energy Calculation:

- Using the geometry from the previous step, perform a single-point energy (SPE) calculation with a higher-level method, typically Density Functional Theory (DFT).

- Crucially, this SPE must include an implicit solvation model (e.g., the Poisson-Boltzmann model, PBF) to account for the electrostatic effects of the solvent. This step is identified as critical for achieving accuracy in predicting properties like redox potentials.

- Descriptor Calculation and Calibration: Calculate the target property (e.g., redox potential from the reaction energy, ΔEᵣₓₙ) and calibrate the results against a small set of experimental measurements to define a linear regression. The study found that using ΔEᵣₓₙ as a descriptor was sufficient, as including zero-point energy and entropic effects to obtain ΔGᵣₓₙ provided only marginal improvements from a high-throughput screening perspective [29].

The workflow for this protocol is visualized below.

Figure 2: Computational workflow for high-throughput screening of molecular properties, balancing accuracy and computational cost [29].
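The calibration step at the end of Protocol 1 amounts to an ordinary least-squares fit of measured potentials against computed reaction energies, followed by an RMSE check. The sketch below assumes a simple one-descriptor linear model; the data points are hypothetical and are not taken from the cited study.

```python
import math


def fit_line(x, y):
    """Ordinary least-squares fit y ~ slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def rmse(y_true, y_pred):
    """Root-mean-square error between two equally long sequences."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


# Hypothetical calibration set: computed reaction energies (eV) for the
# reduction reaction and measured redox potentials (V).
delta_e_rxn = [-1.20, -0.95, -0.70, -0.40, -0.10]
e_measured = [0.83, 0.60, 0.41, 0.22, 0.05]

slope, intercept = fit_line(delta_e_rxn, e_measured)
e_calibrated = [slope * x + intercept for x in delta_e_rxn]
error = rmse(e_measured, e_calibrated)
print(f"E = {slope:.3f} * dE_rxn + {intercept:.3f}  (RMSE = {error:.3f} V)")
```

Once the slope and intercept are fixed on the small calibration set, the same linear map is applied to the computed reaction energies of every uncharacterized candidate in the screening library.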

Protocol 2: High-Accuracy Framework for Surface Adsorption Enthalpies

For problems requiring high accuracy, such as predicting adsorption enthalpies on ionic surfaces, a multi-level embedding approach is necessary. The autoSKZCAM framework achieves CCSD(T)-level accuracy at a cost approaching DFT [30].

- System Preparation:

- Model the ionic surface (e.g., MgO(001), TiO₂) using a finite cluster model.

- Place the cluster within an embedding environment of point charges to represent the long-range electrostatic potential of the extended crystal lattice.

- Generate candidate adsorption configurations for the adsorbate molecule on the surface.

- Divide-and-Conquer Energetics: The adsorption enthalpy (H_ad) is partitioned into multiple contributions, each computed with an appropriate method:

- Periodic DFT Calculation: A full periodic DFT calculation is performed for the adsorbate-surface system to obtain a baseline energy and geometry.

- Embedded Correlated Calculation: A higher-level correlated wavefunction theory (cWFT) method, such as CCSD(T), is applied to the chemically relevant part of the system (the adsorbate and its immediate surface environment), which is embedded in the field of point charges. This captures the strong electron correlation effects that DFT may miss.

- Energy Decomposition: The total H_ad is computed as H_ad = E(DFT) + ΔE(cWFT), where ΔE(cWFT) is the energy correction from the high-level method. This effectively uses DFT to capture the broad energetics and the cWFT correction to add the necessary accuracy.

- Configuration Validation: The automated nature of the framework allows for the computation of H_ad for multiple adsorption configurations. The most stable configuration is identified as the one with the most negative H_ad value that also agrees with experimental data.

- Benchmarking against Experiment: The final predicted H_ad values are compared against a diverse set of experimental measurements (e.g., from temperature-programmed desorption) to validate the framework's accuracy across different types of adsorption (physisorption and chemisorption).
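The bookkeeping behind the energy decomposition and configuration validation steps can be illustrated with a short sketch. It assumes each configuration is summarized by just two numbers, the DFT contribution and the cWFT correction; the class, the function names, and all energies are hypothetical and are not taken from the autoSKZCAM code or from [30].

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Configuration:
    label: str
    e_dft: float         # periodic DFT contribution to H_ad, eV (hypothetical)
    delta_e_cwft: float  # embedded cWFT correction, eV (hypothetical)

    @property
    def h_ad(self):
        """H_ad = E(DFT) + dE(cWFT), as in the decomposition described above."""
        return self.e_dft + self.delta_e_cwft


def most_stable(configs):
    """Return the configuration with the most negative adsorption enthalpy."""
    if not configs:
        raise ValueError("no configurations supplied")
    return min(configs, key=lambda c: c.h_ad)


def agrees_with_experiment(h_ad, h_exp, error_bar):
    """True if the prediction lies within the experimental error bar."""
    return abs(h_ad - h_exp) <= error_bar


# Placeholder values for three candidate adsorption geometries.
candidates = [
    Configuration("upright", e_dft=-0.30, delta_e_cwft=0.05),
    Configuration("tilted", e_dft=-0.42, delta_e_cwft=0.06),
    Configuration("flat", e_dft=-0.38, delta_e_cwft=0.08),
]

best = most_stable(candidates)
print(best.label, round(best.h_ad, 3))
print(agrees_with_experiment(best.h_ad, h_exp=-0.34, error_bar=0.04))
```

Note that the ranking is done on the corrected H_ad rather than on the DFT energies alone, since the cWFT correction can differ between configurations and change their relative order.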

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

In computational chemistry, the "research reagents" are the software, algorithms, and computational resources that enable the simulations. The following table details key components of the modern computational chemist's toolkit.

Table 4: Essential Computational "Reagents" for Electronic Structure Studies

| Toolkit Component | Function | Example Implementations / Notes |
|---|---|---|
| Low-Cost Composite Methods | Provides a good cost-to-accuracy ratio for geometry optimizations and large-system calculations. | HF-3c, PBEh-3c, ωB97X-3c; use small basis sets with empirical corrections [27]. |
| Implicit Solvation Models | Mimics the effect of a solvent on a solute molecule without explicitly modeling solvent molecules, drastically reducing cost. | Poisson-Boltzmann (PBF), Polarizable Continuum Model (PCM); critical for predicting solution-phase properties like redox potentials [29]. |
| Embedding Environments | Allows for high-accuracy calculation on a small "active" region embedded in a lower-level treatment of the larger environment. | Point charge embedding for ionic materials [30]; QM/MM hybrid interfaces [32]. |
| GPU-Accelerated Quantum Chemistry | Leverages graphics processing units (GPUs) to dramatically speed up quantum chemistry calculations. | TeraChem software package; enables fast DFT and low-cost method calculations on consumer-grade hardware [27]. |
| Automated Workflow & Interface Frameworks | Streamlines complex multi-step simulations and facilitates communication between dynamics and electronic structure codes. | SHARC for nonadiabatic dynamics; autoSKZCAM for automated surface chemistry with cWFT [32] [30]. |
| Error Mitigation Strategies | Reduces the impact of noise and errors in calculations, particularly important for near-term quantum computations. | Density matrix purification (e.g., McWeeny purification); active-space reduction [33]. |

The hierarchy of electronic structure methods provides a versatile toolkit for computational research, but no single method is universally superior. The choice of method is a deliberate trade-off. High-throughput virtual screening campaigns for molecular discovery can leverage low-level geometry optimizations followed by DFT single-point calculations with implicit solvation to achieve remarkable efficiency and accuracy [29]. Conversely, resolving complex chemical phenomena on surfaces or in transition metal complexes often necessitates high-accuracy, multi-level frameworks like autoSKZCAM that deliver CCSD(T)-quality results by focusing expensive resources where they are most needed [31] [30].

The future of the field lies in the continued development of intelligent, automated workflows that seamlessly combine different levels of theory. Furthermore, the emergence of new computing paradigms, such as quantum computing for the simulation of strongly correlated systems, is poised to extend this hierarchy beyond classical limitations [34]. By understanding the fundamental trade-offs outlined in this guide, researchers can make informed decisions to effectively and reliably harness computational power for scientific discovery.

Density Functional Theory (DFT) stands as a cornerstone computational method in physics, chemistry, and materials science for investigating the electronic structure of many-body systems. This whitepaper delineates the theoretical underpinnings of DFT, grounded in the Hohenberg-Kohn theorems and the Kohn-Sham equations, which simplify the intractable many-electron problem into a tractable single-electron problem. We present structured protocols for performing robust DFT simulations, including the selection of exchange-correlation functionals and basis sets, convergence parameter optimization, and the integration of machine learning for accelerated discovery. The document further demonstrates DFT's versatile applications through quantitative tables and case studies, spanning the design of nanomaterials, biomolecular adsorption on two-dimensional materials, and drug development. Finally, we explore emerging trends such as automated uncertainty quantification and the synergy between DFT and high-accuracy coupled-cluster methods, framing these advancements within the ongoing research on achieving electronic structure convergence.

Density Functional Theory (DFT) is a computational quantum mechanical modelling method used to investigate the electronic structure of many-body systems, primarily atoms, molecules, and condensed phases. Its versatility and favorable balance between computational cost and accuracy have cemented its status as a workhorse in condensed-matter physics, computational physics, and computational chemistry [35]. The fundamental principle of DFT is that all properties of a many-electron system can be determined by using functionals—functions that accept another function as input and output a single number—of the spatially dependent electron density. This approach reduces the problem of solving for a complex many-body wavefunction, which depends on 3N spatial coordinates for N electrons, to solving for the electron density, which depends on only three spatial coordinates [35].
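The practical weight of this reduction is easy to see with a little arithmetic. The sketch below assumes a naive real-space grid with the same number of points along every coordinate axis and ignores spin and symmetry; the function names are illustrative only.

```python
def wavefunction_grid_values(n_electrons, points_per_axis):
    """Values needed to tabulate a wavefunction of 3N spatial coordinates."""
    return points_per_axis ** (3 * n_electrons)


def density_grid_values(points_per_axis):
    """Values needed to tabulate the electron density in 3 coordinates."""
    return points_per_axis ** 3


# Even a very coarse grid (10 points per axis) shows the exponential growth.
for n in (1, 2, 10):
    psi = wavefunction_grid_values(n, 10)
    rho = density_grid_values(10)
    print(f"N = {n:2d}: wavefunction needs {psi:.1e} values, density needs {rho}")
```

The storage for the density is independent of the number of electrons, whereas the wavefunction table grows exponentially with N.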

The formal foundation of DFT is built upon the Hohenberg-Kohn (HK) theorems. The first HK theorem demonstrates that the ground-state electron density uniquely determines the external potential and, consequently, all properties of the system. The second HK theorem defines an energy functional for the system and proves that the ground-state electron density minimizes this energy functional [35]. While revolutionary, the original HK formalism did not provide a practical way to compute the total energy. This was solved by the introduction of the Kohn-Sham (KS) equations, which reformulate the problem as a set of single-electron equations for a fictitious system of non-interacting electrons that generate the same density as the real, interacting system [35]. The total energy functional in the Kohn-Sham scheme can be expressed as:

\[ E[n] = T_s[n] + E_{\text{ext}}[n] + E_H[n] + E_{xc}[n] \]

Here, \( T_s[n] \) is the kinetic energy of the non-interacting electrons, \( E_{\text{ext}}[n] \) is the energy from the external potential, \( E_H[n] \) is the Hartree energy from classical electron-electron repulsion, and \( E_{xc}[n] \) is the exchange-correlation energy, which encapsulates all many-body quantum effects. The central challenge in DFT is finding accurate and efficient approximations for \( E_{xc}[n] \), as the exact functional form remains unknown. Common approximations include the Local Density Approximation (LDA) and more sophisticated Generalized Gradient Approximations (GGA) and hybrid functionals [35].
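For reference, the single-electron equations that follow from minimizing this functional can be written out explicitly. The block below gives the standard textbook form in Hartree atomic units with spin indices omitted; the symbols \( \phi_i \) (Kohn-Sham orbitals), \( \varepsilon_i \) (orbital energies), and the potentials \( v \) are notation introduced here for illustration and do not appear in the text above.

```latex
% Kohn-Sham equations (Hartree atomic units, spin omitted)
\left[ -\tfrac{1}{2}\nabla^{2}
       + v_{\text{ext}}(\mathbf{r})
       + v_{H}(\mathbf{r})
       + v_{xc}(\mathbf{r}) \right] \phi_i(\mathbf{r})
  = \varepsilon_i \, \phi_i(\mathbf{r})

% Density built from the occupied orbitals
n(\mathbf{r}) = \sum_{i}^{\text{occ}} \left| \phi_i(\mathbf{r}) \right|^{2}

% Hartree and exchange-correlation potentials derived from E_H[n] and E_xc[n]
v_{H}(\mathbf{r}) = \int \frac{n(\mathbf{r}')}{|\mathbf{r}-\mathbf{r}'|}\, d\mathbf{r}' ,
\qquad
v_{xc}(\mathbf{r}) = \frac{\delta E_{xc}[n]}{\delta n(\mathbf{r})}
```

Because \( v_H \) and \( v_{xc} \) depend on the density that the orbitals themselves produce, these equations must be solved iteratively until the input and output densities agree. This self-consistent field (SCF) loop is where electronic structure convergence becomes a practical concern.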

Computational Methodology and Protocols

Best-Practice DFT Protocols

A critical step in any DFT calculation is the selection of an appropriate computational protocol that balances accuracy and efficiency. Best practices have evolved significantly, moving away from outdated but popular choices like B3LYP/6-31G*, which is known to suffer from inherent errors such as missing London dispersion effects and a strong basis set superposition error (BSSE) [36]. Modern alternatives are more robust, accurate, and sometimes even computationally cheaper. The following decision tree outlines a systematic approach for selecting a computational model.

For most day-to-day applications on molecular systems, a multi-level procedure is recommended to achieve an optimal balance between accuracy and computational cost [36]. The workflow below illustrates a robust protocol for computing a key material property like the bulk modulus, integrating uncertainty quantification.

Table 1: Key Research Reagent Solutions in Computational DFT

| Component | Type/Example | Primary Function | Technical Notes |
|---|---|---|---|
| Exchange-Correlation Functional | GGA (e.g., PBE), Meta-GGA (e.g., SCAN), Hybrid (e.g., B3LYP) | Approximates quantum mechanical exchange & correlation energies | Hybrid functionals mix in Hartree-Fock exchange for improved accuracy [36]. |
| Pseudopotential | Norm-conserving, Ultrasoft, PAW | Replaces core electrons and nucleus to reduce computational cost | Projector Augmented-Wave (PAW) potentials are often recommended for accuracy [37]. |
| Plane-Wave Basis Set | Cutoff Energy (e.g., 500 eV) | Expands the Kohn-Sham wavefunctions | A higher cutoff increases accuracy and computational cost [37]. |
| k-Point Sampling | Monkhorst-Pack grid | Samples the Brillouin zone for periodic systems | A denser grid is needed for metals and complex unit cells [37]. |
| Dispersion Correction | D3, D4, vdW-DF | Corrects for missing long-range van der Waals interactions | Critical for biomolecules and non-covalent interactions [35] [38]. |
| Solvation Model | COSMO, SMD, PCM | Models the effect of a solvent environment | Essential for simulating biochemical reactions and catalysis [36]. |

Optimization of Convergence Parameters

A major challenge in achieving predictive DFT calculations is the selection of numerical convergence parameters, primarily the plane-wave energy cutoff and k-point sampling. These parameters have traditionally been set by manual benchmarking, but the process is increasingly automated using uncertainty quantification (UQ). Research shows that the total energy and derived properties like the equilibrium lattice constant and bulk modulus depend on these parameters, and their errors can be decomposed into systematic and statistical components [37].

An automated UQ approach involves computing the energy surface E(V, ϵ, κ) over a range of volumes (V), energy cutoffs (ϵ), and k-points (κ). Linear decomposition techniques can then represent the systematic and statistical error in this multi-dimensional space, enabling the construction of an error phase diagram. This allows for the implementation of a fully automated tool that requires users to input only a target precision (e.g., 1 meV/atom for fitting machine learning potentials), rather than specific convergence parameters. This method has been shown to reduce computational costs by more than an order of magnitude while guaranteeing precision, which is crucial for high-throughput studies and generating reliable data for machine learning [37].
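The idea of specifying a target precision instead of explicit parameters can be illustrated with a much simpler stand-in. The sketch below is not the UQ method of [37]: it is a plain grid scan that takes the most converged calculation as the reference and returns the cheapest (cutoff, k-grid) pair within the target. The energies are hypothetical placeholders, and the cost model (plane-wave count scaling as cutoff^1.5 times the number of points in a k×k×k grid) is a crude assumption.

```python
# Hypothetical total energies in eV/atom, keyed by (cutoff in eV, k for a k x k x k grid).
ENERGIES = {
    (300, 4): -5.4012, (300, 6): -5.4051, (300, 8): -5.4055,
    (400, 4): -5.4170, (400, 6): -5.4209, (400, 8): -5.4213,
    (500, 4): -5.4181, (500, 6): -5.4220, (500, 8): -5.4224,
    (600, 4): -5.4183, (600, 6): -5.4222, (600, 8): -5.4226,
}


def relative_cost(cutoff_ev, k):
    """Crude cost proxy: plane-wave count (~cutoff^1.5) times k-point count (k^3)."""
    return cutoff_ev ** 1.5 * k ** 3


def cheapest_converged(energies, target_ev_per_atom):
    """Cheapest setting whose energy lies within the target of the reference.

    The reference is the entry with the largest cutoff and, for that cutoff,
    the largest k; this assumes the scan includes that combination.
    """
    reference = energies[max(energies)]
    converged = [
        key for key, energy in energies.items()
        if abs(energy - reference) <= target_ev_per_atom
    ]
    return min(converged, key=lambda key: relative_cost(*key))


cutoff, k = cheapest_converged(ENERGIES, target_ev_per_atom=0.001)  # 1 meV/atom
print(f"cutoff = {cutoff} eV, k-grid = {k}x{k}x{k}")
```

A scan like this still requires every calculation on the grid, including the most expensive one. The appeal of the error-decomposition approach described above is that it avoids much of that cost while also separating systematic from statistical error.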

Furthermore, efficiency can be enhanced by optimizing the self-consistent field (SCF) cycle itself. For instance, using Bayesian optimization to tune charge mixing parameters can significantly reduce the number of SCF iterations needed for convergence, leading to substantial time savings in DFT simulations [39].
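The sensitivity of the SCF iteration count to the mixing parameter can be seen even in a toy model. The sketch below is an illustration only: it replaces the density by a single scalar and the Kohn-Sham step by the map x → cos(x), applies simple linear mixing, and scans a few mixing parameters by brute force. A brute-force scan stands in for the Bayesian optimization used in [39], and all names here are hypothetical.

```python
import math


def scf_iterations(alpha, tol=1e-10, max_iter=500):
    """Iterate a toy self-consistency problem with linear mixing.

    Returns (iterations, converged value), or (None, last value) if the
    change between successive inputs never drops below `tol`.
    """
    x = 0.0  # initial guess for the toy "density"
    for iteration in range(1, max_iter + 1):
        x_out = math.cos(x)                      # toy output from the current input
        x_new = (1 - alpha) * x + alpha * x_out  # linear mixing of input and output
        if abs(x_new - x) < tol:
            return iteration, x_new
        x = x_new
    return None, x


# Brute-force scan over mixing parameters (a stand-in for a smarter optimizer).
results = {alpha: scf_iterations(alpha)[0] for alpha in (0.2, 0.4, 0.6, 0.8, 1.0)}
converged = {alpha: n for alpha, n in results.items() if n is not None}
best_alpha = min(converged, key=converged.get)

for alpha, n in results.items():
    print(f"alpha = {alpha:.1f}: {n} iterations")
print("fewest iterations at alpha =", best_alpha)
```

In this toy problem both very small and very large mixing parameters converge slowly, with an optimum in between. Real SCF problems show the same qualitative behavior, with the added risk that an overly aggressive mixing parameter prevents convergence altogether.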

Applications in Materials and Biomolecules

DFT in Nanomaterials and Energy Materials

The application of DFT in nanomaterials science has been profound, enabling researchers to model, understand, and predict material properties at a quantum mechanical level. DFT is extensively used to elucidate the electronic, structural, and catalytic attributes of various nanomaterials, such as 2D materials, nanoparticles, and porous frameworks [40]. For example, DFT calculations can predict the band gap of a novel quantum dot or the adsorption energy of a reactant on a catalytic nanoparticle surface, guiding the rational design of materials with enhanced performance.